
1 Introduction

Artificial Intelligence (AI) has been applied widely in the architectural design process (Vissers-Similon et al., 2024). For example, prediction algorithms allow performative optimisation (Castro Pena et al., 2021) and cost optimisation (Momade et al., 2021) during the design modelling process. After 2022, generative AIs, especially multimodal AIs, which are the focus of this research, capable of processing both verbal and visual inputs, have demonstrated significant promise in the early stages of architectural design for exploration, ideation, and conception (Enjellina et al., 2023). For instance, AI can create inspirational images, iterate on design images with controlled variations (Zhong et al., 2024) and analyse text inputs such as project briefs and design intentions (Pouliou et al., 2023). However, several issues may limit their effectiveness in practical situations. Three key research gaps are identified in the AI-enhanced design process, including (1) linear interaction, (2) user-friendliness (steep learning curve) and (3) lack of context awareness in AI response.

First, current AI use in architectural design tends to follow a linear “input–output” model, leading to a reductionist approach (Bolojan et al., 2024). Instead, the AI-enhanced design process should be a more divergent, open-ended process (Vermillion, 2022), in which researchers describe the interaction between designers and AI tools as a “negotiation” (Ibarrola et al., 2024) or “deliberation” process (Homayounirad, 2023). These descriptions match the idea that the design process is conversational in nature (Schön, 2017), where conversation refers to a mutual-learning process between humans and computational tools (Pangaro & Dubberly, 2014). Second, although awareness of, and experimentation with, a conversational loop between AIs and human designers in the architectural design process has grown (Bolojan et al., 2024), implementing such a loop requires advanced knowledge of deep learning. Frequent switching between different AI applications also poses a steep learning curve for designers (Andreou et al., 2023; Homayounirad, 2023). Finally, it is widely recognised that outputs from generative AIs often lack relevance to the site context. Although design workflows that integrate context awareness through performative optimisation with the exploratory capabilities of generative AIs have been explored (Zhong et al., 2024), the interactions between performative optimisation and AI applications are limited, resulting in a one-directional design process.

Therefore, this paper presents a conversational AI-enhanced design framework for early-stage architectural exploration to tackle these limitations. An AI bot has been specifically developed and implemented in this workflow to provide active, multi-perspective responses. This bot serves as a suggestive design partner rather than a predictive or automatic “fast draftsman,” as described by Nicholas Negroponte (1967). It addresses the research gaps, including the lack of context awareness in AI responses and the underexplored potential of multimodal, agentic AI applications. In addition, the AI bot is implemented in a group messaging environment, enabling collective discussion between designers and the AI through an intuitive user interface, which addresses the research gaps of a steep learning curve, a lack of user-friendliness, and limited exploration of collaborative design scenarios. The main aim of the research is to propose and verify a possible AI-design framework, rather than a replacement for traditional design methods. This paper first discusses the conversational design process from the computer-aided architectural design point of view. In parallel, this paper investigates how AI has been applied to human-AI collaborations in architectural design scenarios from a more technical perspective regarding AI development. With the findings, this research proposes a conversational design framework and elaborates on how it provides insights for developing architecture-focused AI tools. Then, the methodology section introduces and demonstrates the proposed framework and explores the AI tools to understand their feasibility and challenges. A case study of a design workshop within an educational scenario is presented after the demonstration, followed by quantitative and qualitative evaluations. Finally, the paper concludes with implications for research in AI-enhanced design processes, envisioning potential applications in real-world practices and future research directions.

2 Background

Computer-aided architectural design (CAAD) has been established since the 1960s (Sutherland, 1963). Beyond merely supporting the production of more complex design drawings, the role of computers in design ideation and exploration has been investigated since then. Early examples include Sketchpad, which illustrates how computers could be used to create graphics for architectural design. In this context, the computer acts as a design partner within a computer design program. The Architecture Machine Group (AMG), which later evolved into the MIT Media Lab, developed URBAN5. This program engaged in natural language conversations with designers on-screen, responding to the drawings created by architects rather than to pre-set design requirements (N. Negroponte, 1967). This highlighted the potential for computers to function as designers rather than merely as gimmick image generators (N. Negroponte, 1976).

2.1 Conversational CAAD process

As CAAD becomes increasingly common among designers, architectural theorists have expressed concerns regarding the interaction between human designers and computers. For instance, architectural cybernetician Ranulph Glanville shared his “worst computing nightmare”, warning that designers might be unaware of being overshadowed by computers due to viewing them solely as tools (Glanville, 1992). More critically, with the swift advancement of AI, it is observed that the misunderstanding and misuse of AI have led to social problems and a gradual decline in human purpose (AiTech – TU Delft, 2020; Cheung et al., 2024). From a practical perspective in an architectural design context, using an AI image generation tool as a means of transferring design style is risky, as it focuses solely on visual aspects while ignoring essential factors like space and environment (DigitalFUTURES world, 2022a, 2022b). To tame the complex and “wicked” nature of design problems (Rittel & Webber, 1973), enhancing the conversation between human designers and computers is essential (Glanville, 2007; Goodbun & Sweeting, 2021; Stralen, 2015). This concept of conversational design can be traced back to architectural cybernetician Gordon Pask’s Conversation Theory (Pask, 1980) and Donald Schön’s notion of design as a reflective conversation (Schön, 2017), where “conversation” involves a learning process in which humans and computers engage in iterative dialogues to reach agreements (Pangaro & Dubberly, 2014). Interaction between designers, stakeholders and AI is considered a “deliberation” process (Homayounirad, 2023) and a “human–machine collaboration” process (Bank et al., 2022). With the emergence of generative AI tools in recent years, this idea indicates that the use of AI image generation tools should be a collaborative, iterative and conversational process (Stojanovski et al., 2022; Vermillion, 2022; Yildirim, 2022).
The conversational design process between human designers and AI should value novelty over quality or prediction accuracy (Ibarrola et al., 2024), where AI acts as a “negotiation and coordinator” (Gerber et al., 2015), or a “mediator” which “provides an informed choice from acceptable options” (Andreou et al., 2023), to achieve a collaborative “meaning-making” process (Wu et al., 2021), which distinguishes the conversational design process from the traditional prediction accuracy-based AI application.

2.2 Generative AI for design collaborations

Currently, most AI applications in the architectural design process focus primarily on Computer Vision (CV) and Natural Language Processing (NLP) (Zhong et al., 2024). Tools like Midjourney, Stable Diffusion, and DALLE-3 have demonstrated their potential to provide inspiring and relevant content for architectural design. However, these applications often prioritise generating visually appealing images over facilitating the design process itself (Cheung & Dall’Asta, 2023). Apart from CV tools, the potential of NLP tools in the design process has also been recognised, but most of them have not been fully explored (Galanos et al., 2023; Huang et al., 2021). To bridge this gap, multimodal AI, which combines both CV and NLP, should be explored (Bolojan et al., 2022). Combining them will promote mutual interaction between humans and computers, verbally and visually, and facilitate a more intuitive collaboration between designers and AI tools in the architectural design process. However, current multimodal AI-enhanced frameworks for architects require extensive learning about AI development (Ulberg et al., 2020), entail long learning curves for applications (Bolojan et al., 2024), and are mostly limited to one-to-one human-AI interaction, which limits the possibility of a team collaboration scenario. Although the potential of agentic AI systems to improve the collaborative design process between humans and AI has been discussed (Abedin et al., 2022; Ibarrola et al., 2024), empirical studies remain scarce. Andrew Ng (Sequoia Capital, 2024) defines agentic AI systems as having four components: reflection, tool use, planning, and multi-agent collaboration, which together make them capable of planning workflows that respond to users comprehensively and effectively. While reflection and tool use are already well-developed in AI applications, planning and multi-agent collaboration are still emerging technologies.

In recent years, such experiments with multimodal AIs and agentic AIs have been performed in the architecture discipline in three common directions: (1) AI as an image generator, (2) AI as a design drawing generator and (3) AI as a design partner. For the first type, LLMs such as Llama and GPT are applied to revise prompts for the AI image generation tools mentioned above, so that the multimodal AI serves as a generator of inspirational design images (Shi et al., 2024). For the second type of application, most research used agentic LLMs, which act as “controllers” to translate complex input, such as designers’ verbal descriptions, into executable computer code for structural design (Qin et al., 2024) and layout design (Gaier et al., 2024) to generate vector drawings. However, this approach still needs to be explored in more intuitive architectural design workflows, such as 3D modelling and sketching processes. Finally, LLMs are explored as design assistants involved in processing the complexity of design projects, such as analysing design briefs (Homayounirad, 2023), providing verbal and visual design advice (Cheung et al., 2023; Sabah et al., 2024; Zhong et al., 2024), or commenting on designs based on an architecture database (Ferguson et al., 2015).

In summary, the idea of a human–computer conversational design process has been established, and AI technology development shows potential in enhancing the conversation potential in CAAD. In recent AI-integrated design research, challenges such as long learning curves and limitations of one-to-one interaction have been observed. Although the direction of intuitive AI application, collaborative environment development, and the capability of agentic AI have been known, they are underexplored in the architectural design process discipline. CV tools such as AI image generators have been mostly used as mere inspiration generators, and although NLP has shown potential, intuitive applications are lacking.

2.3 Research gaps, aims and research questions

Based on the discussion above, five research gaps are identified: (1) linear interaction in AI-enhanced design process, (2) steep learning curve and lack of user-friendliness, (3) lack of context awareness in AI responses, (4) underexplored multimodal, agentic AI application and (5) limited exploration of collaborative design scenarios. In response, this study is focused on addressing these gaps with three specific aims. First, this research aims to establish a novel conversational framework that supports iterative and collaborative human-AI interaction in the early stages of architectural design. Second, this research seeks to design an AI system that designers can apply with minimal learning curves. Third, this research aims to investigate how multimodal, agentic AI can tackle complex design discussions in architectural design processes, such as providing contextual evaluation and suggestions by providing verbal and visual outputs. Then, three research questions are raised:

- 1. How can an AI-enhanced design framework for an early-stage architectural design process address the limitations of linear interaction and foster a more conversational workflow?

- 2. What strategies can be employed to minimise the learning curve and improve the accessibility of AI tools for architects in collaborative design scenarios?

- 3. How can multimodal and agentic AI systems be integrated into architectural design workflows to enable intuitive and context-aware collaboration?

The primary hypothesis is that a conversational AI-enhanced design framework that incorporates a multimodal, agentic AI can enhance the early-stage architectural design process by enabling an iterative, intuitive design collaboration between designers and AI. Three sub-hypotheses are made in response to the three research questions:

- H1: Implementing a conversational loop in an AI-enhanced design system can lead to an iterative design process compared to traditional linear input-output models.

- H2: Deployment of AI tools in a user-friendly medium can minimise learning curves for designers.

- H3: Multimodal and agentic AI can be applied in complex scenarios, such as group collaboration and the provision of context-aware AI responses.

In conclusion, this research seeks to establish a novel conversational framework for AI-assisted design processes. Instead of the recent commonly seen AI applications, which require steep learning curves or are developed for limited single functions such as image generation, a multimodal, agentic AI system is developed that can be utilised with minimal learning curves for intuitive applications in group design scenarios.

3 Methodology

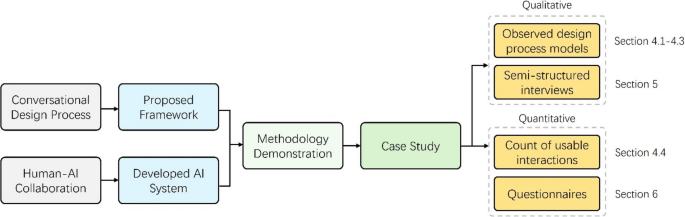

Figure 1 shows the research framework of this paper. In the previous section, the conversational design process from the CAAD perspective and human-AI collaborations in the architecture discipline are discussed (the grey part of Fig. 1). Since generative AIs have developed rapidly in recent years, more researchers have developed AI tools on user-friendly platforms such as web-based user interfaces and organised workshops for participants to verify the proposed workflow rather than self-demonstration in code-based environments. For example, Paananen et al. (2024) tested the developed AI tools with 5 architecture students, and Zhang et al. (2023) experimented with the proposed workflow with 11 architecture students. Therefore, using the insights from the previous studies, the methodology section proposes a design framework and a developed AI system (the blue part of Fig. 1). After demonstrating how the developed AI system could be used under the proposed framework, a case study of a design workshop with three undergraduate architecture students is presented (the green part of Fig. 1).

Research structure from the proposed framework and AI system, demonstration, case study to evaluation

Scientific research tends to analyse design workflows in a step-by-step manner for statistical studies (Menzies & Shull, 2010). However, framing design processes on a rational scientific foundation risks diminishing their complexity (Daniel, 2013; Sweeting, 2017). Therefore, we employed a multi-perspective post-workshop evaluation approach to analyse design processes, which are ill-defined in nature (Creswell & Clark, 2017). It is composed of four parts (the orange part of Fig. 1). Quantitative analyses are common in recent AI-enhanced design process research, such as the percentage of usable AI interactions and the rate of successful image generation (Dortheimer et al., 2023; Maksoud et al., 2024). However, such a scoring approach has been criticised because it cannot accurately reflect the relevance of AI-generated images to design intent (Shi et al., 2024). Also, this research did not conduct a comparison study with a control group, because the complexity of design processes makes such comparisons inherently challenging. Instead, participant surveys or questionnaires can be used to quantify feedback on the effectiveness of AI applications in the design process (Agkathidis et al., 2024; Dortheimer et al., 2023). In summary, this research counts usable AI interactions to understand the usefulness of multimodal AIs, followed by user surveys to quantify the effectiveness of the AI-enhanced design process.
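As an illustration of the count-based measure mentioned above, the following minimal sketch computes a usable-interaction rate from a log of designer requests. The record format, the `usable` flag and the example log are assumptions made for illustration only; they are not the workshop data, and the criterion for judging an interaction “usable” is left to the evaluator.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    """One designer request to the AI bot (hypothetical record format)."""
    kind: str     # "text" or "image" response requested
    usable: bool  # evaluator's judgement; the criterion is an assumption


def usable_rate(log, kind=None):
    """Share of interactions marked usable, optionally filtered by kind."""
    selected = [item for item in log if kind is None or item.kind == kind]
    if not selected:
        return 0.0
    return sum(item.usable for item in selected) / len(selected)


# Invented example log, for illustration only (not workshop data).
example_log = [
    Interaction("text", True),
    Interaction("image", True),
    Interaction("image", False),
    Interaction("text", True),
]

overall_rate = usable_rate(example_log)         # all four interactions
image_rate = usable_rate(example_log, "image")  # image interactions only
print(f"overall: {overall_rate:.0%}, image: {image_rate:.0%}")
```

Such a rate only counts interactions; as noted above, it does not capture how relevant a response is to the design intent, which is why it is paired with surveys and interviews.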

For the qualitative approaches, observations and interviews (Zhang et al., 2023) are conducted. Overall, this research evaluates the workshop process with a hybrid approach, including (1) a count of usable interactions, (2) questionnaires, (3) semi-structured interviews with the three participants, and (4) observation of the three different design process models.

In summary, Sects. 4.1–4.3 are observation-based, to understand whether the proposed framework facilitates a variety of conversational design processes rather than being constrained to the traditional linear input–output design process. Section 4.4 includes a count-based analysis for reflection on the use of the AI system. Sections 5 and 6, which cover the semi-structured interviews and questionnaires, respond to the three sub-hypotheses qualitatively and quantitatively. The questionnaires aim to address several dilemmas observed in the interview results.

3.1 Conversational design framework

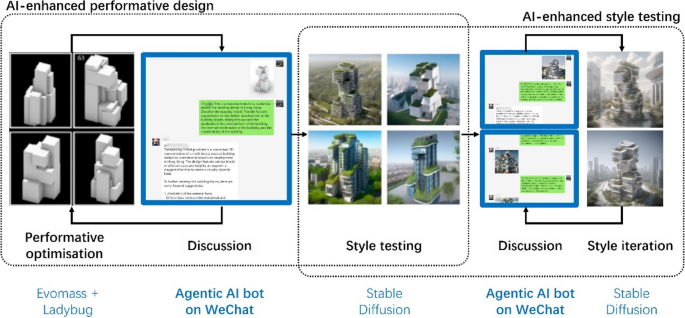

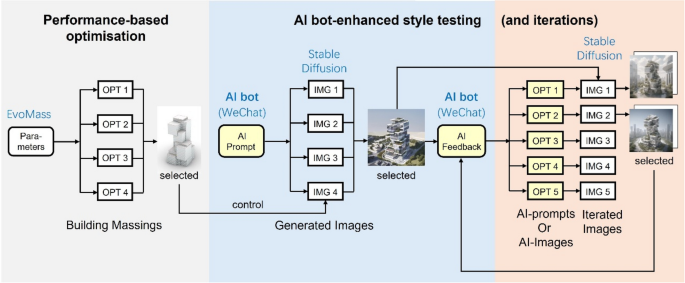

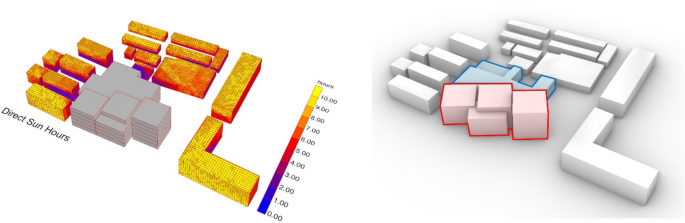

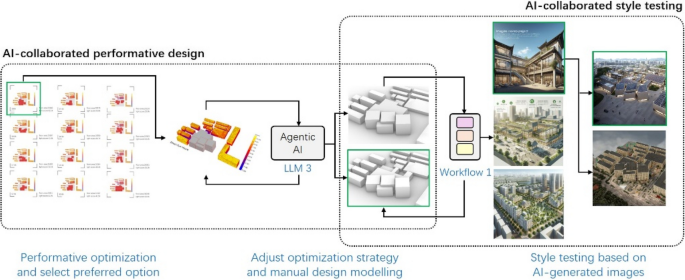

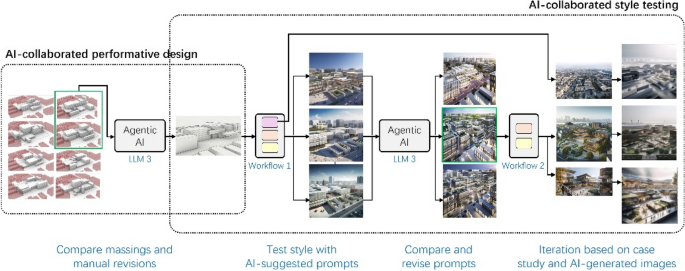

This section proposes a conversational AI-enhanced design framework, which aims to enhance the design process with the developed agentic AI bot as a design partner. Figure 2 depicts an overall design workflow consisting of two components: AI-enhanced performative optimisation-based design and AI-enhanced style testing. This workflow is particularly useful for performative design optimisation, because the optimisation can often produce only abstract building design options without details associated with architectural styles and façade languages, which often hinders designers from effectively assessing the architectural feasibility of the high-performing solutions. Thus, the workflow begins with performative design optimisation focusing on environmental and/or energy performance. Performative design optimisation is performed with a building massing design optimisation tool called EvoMass (Wang, 2022). Note that, in this workflow, EvoMass can be replaced by another tool that can generate performance-related design options, or by parametric models manually created by the designer.

Proposed 2-stage workflow: (1) AI-enhanced performative design and (2) AI-enhanced style testing

After the completion of the performative optimisation stage, designers can choose preferred options from the optimisation result, in which both optimal and suboptimal options can be selected to enhance the subsequent design exploration assisted by the agentic AI. The selected design options are further exported as massing model images, which would serve as the input, along with the user-defined text inputs, for the discussion with the agentic AI. The discussion is aimed at improving the evaluation and comparison of selected options, suggesting ways to enhance optimisation, and exploring potential styles for experimentation in the next stage. Once styles are tested using AI image generation tools, the selected images can be sent to the AI bot for style iteration discussions. The AI bot can provide verbal feedback and generate reference images to support further iterations. For AI collaboration, the agentic AI functions as a chatbot on WeChat, a popular messaging application in mainland China, allowing human designers to engage in discussions with the AI in a group chat setting with minimal learning barriers.

3.2 Conversational multimodal, agentic AI system

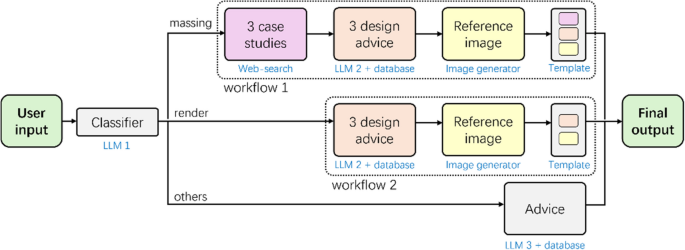

The agentic multimodal AI has been developed as a design partner specifically for architectural applications; the schematic of this proposed system is shown in Fig. 3. Upon receiving inputs from the designer, which may include text and images, a multimodal large language model (LLM 1) serves as a question classifier to determine if the inquiry contains massing model images or renders. If a massing model image is included, it indicates that the designer is in the early stages of the design process; thus, the preset workflow will be most beneficial. The AI system is used as a chatbot on WeChat. The AI bot is also incorporated into a group chat environment, allowing it to communicate with all group members.

The proposed AI system is composed of a classifier, two workflows and an agentic AI

For a massing model image, the preset workflow first conducts a web search using Google to collect relevant case studies based on the geometrical features of the massing model. Three case studies are gathered and summarised, a number chosen to balance the speed of web searching against the cognitive load on designers. LLM 2 has access to a pre-defined project brief and a knowledge base that includes the EvoMass manual and research articles related to the performative architectural design process. The summarised search results are sent to LLM 2 as a reference, along with the knowledge base, to provide design advice in three different directions and suggest a preferred direction, followed by a prompt for the chosen option. This prompt is forwarded to the image generator to create a reference image. The outcomes from these steps are compiled into the final output. If the designer’s inputs include a render, it is assumed that the design progress is more advanced, and as a result, no case studies are searched. However, different advice directions are still provided, and a reference image is generated based on the inquiries and preferred design direction. If the inputs include neither a massing image nor a render, the inquiry is processed by LLM 3, a multimodal LLM equipped with a project brief and knowledge base, which can decide which tools to use, including web searching and image generation.
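The routing described in this section can be summarised in a short sketch. It is not the LinkAI configuration used in the study: the step names, the `Inquiry` record and the keyword-based `classify` function are hypothetical stand-ins for LLM 1, and two points are assumptions, namely that a massing image takes priority when both image types are present and that inputs with neither image type fall through to the agentic LLM 3.

```python
from dataclasses import dataclass, field
from enum import Enum


class InputKind(Enum):
    MASSING = "massing"
    RENDER = "render"
    OTHER = "other"


@dataclass
class Inquiry:
    """A designer message (hypothetical record format)."""
    text: str
    # Labels a multimodal classifier would assign to attached images.
    image_kinds: list = field(default_factory=list)


def classify(inquiry):
    """Stand-in for LLM 1. Assumption: massing images take priority."""
    if "massing" in inquiry.image_kinds:
        return InputKind.MASSING
    if "render" in inquiry.image_kinds:
        return InputKind.RENDER
    return InputKind.OTHER


def plan_steps(inquiry):
    """Return the ordered steps the system would run for an inquiry."""
    kind = classify(inquiry)
    advice_and_image = [
        "llm2_advise_three_directions",
        "llm2_write_image_prompt",
        "generate_reference_image",
        "compile_output",
    ]
    if kind is InputKind.MASSING:
        # Early-stage workflow: case studies are searched first.
        return ["web_search_case_studies", "summarise_three_cases"] + advice_and_image
    if kind is InputKind.RENDER:
        # Later-stage workflow: no case-study search.
        return advice_and_image
    # Assumed fallback: the agentic LLM 3 decides which tools to call.
    return ["llm3_choose_tools"]


massing_plan = plan_steps(Inquiry("Compare these options", ["massing"]))
render_plan = plan_steps(Inquiry("How can the facade improve?", ["render"]))
other_plan = plan_steps(Inquiry("What is the site's plot ratio?"))
print(massing_plan, render_plan, other_plan, sep="\n")
```

Keeping the two preset workflows as fixed step lists, and reserving open-ended tool choice for the fallback branch, mirrors the split between the classifier, the two workflows and the agentic AI named in the figure caption.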

The entire agentic multimodal AI system is developed using the LinkAI platform (LinkAI, n.d.). Question-answering with the database is based on Retrieval Augmented Generation techniques embedded in the same LinkAI platform. In this configuration, all LLMs are based on OpenAI’s GPT-4, and the image generator is based on OpenAI’s DALLE-3, chosen for its cost, speed, functionality (vision-enabled), and effectiveness. The system prompts are provided in Online Resource 1 (Sect. 1).

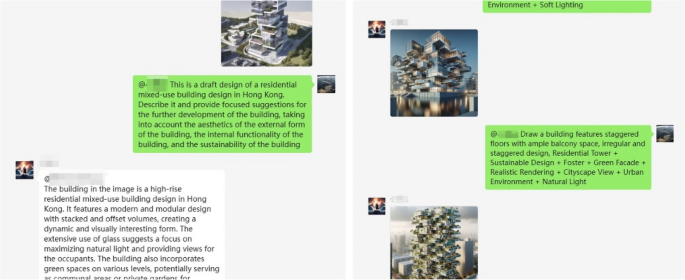

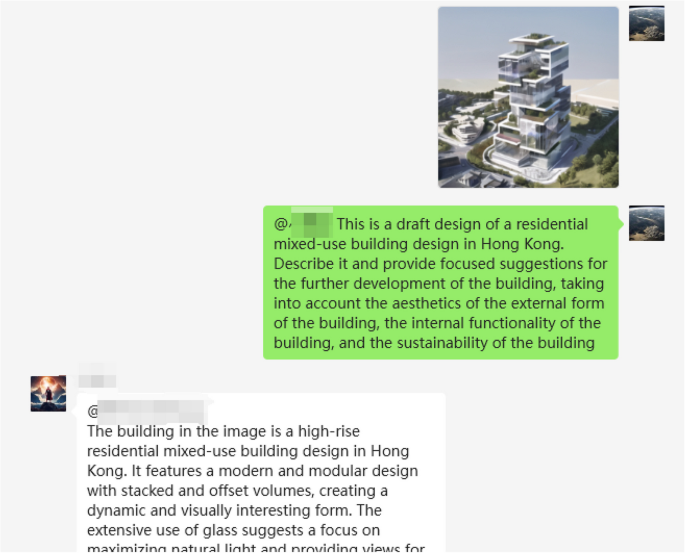

3.3 Collaborative environment integration

The developed AI bot can communicate with designers both verbally and visually in a group chat environment through WeChat, a mobile messaging application (Fig. 4), by integrating ChatGPT-on-WeChat, an open-source project (zhayujie, 2022/2024). This provides seamless integration with user-friendly communication methods, such as voice messages and on-device use (e.g., mobile phones). Together with the group chat function, it simulates real-world collaborative design practice scenarios. The bot not only generates inspirational images but also exchanges feedback with designers as the design progresses.

Screenshot of conversation with the developed AI on WeChat (a messaging application)

3.4 Style testing and methodology demonstration

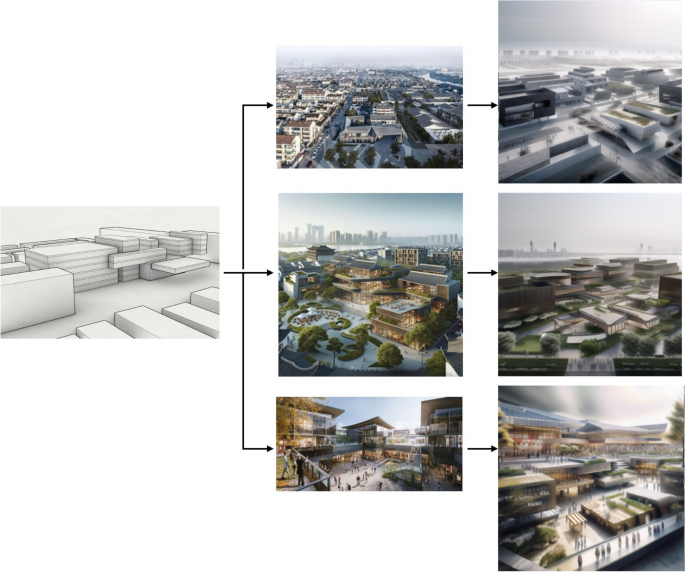

In this section, a demonstration is conducted by the authors as a feasibility study, as well as serving as an example to the students on how they might use the AIs in a conversational architectural design process. The interaction focuses on how designers might use the AI tools, rather than focusing on the student-supervisor interaction in this section. Figure 5 shows an example of a 3-step conversational design workflow that could happen between performative optimisation, an AI bot and an AI drawing tool (Stable Diffusion) for style testing.

A proposed conversational design workflow for the demonstration, including (1) performative optimisation, (2) AI-enhanced style testing and (3) AI-enhanced style testing iterations

First, after building massing models are generated by EvoMass, designers can select a preferred option and use prompts suggested by the AI bot in Stable Diffusion (with a web user interface) for quick style testing. Specifically, the selected building massing is fed into the AI bot, which generates prompts for Stable Diffusion to create visual representations (blue part of Fig. 5). Then, a preferred style image can be fed to the AI bot in WeChat again to ask for further feedback, either as text (AI prompts) or as reference images (generated by the AI bot). Finally, the feedback obtained from the AI bot can be applied in Stable Diffusion again to obtain an iterated style test (pink part of Fig. 5).
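The alternation between the AI bot and the image generator described above can be read as a simple loop. The sketch below is illustrative only: `ask_bot`, `generate_images` and `choose` are hypothetical callables standing in for the WeChat bot, Stable Diffusion and the designer's selection, and the assumption that each round uses the previously selected image as the control input is ours.

```python
def style_testing_loop(massing_image, ask_bot, generate_images, choose, rounds=2):
    """Alternate between bot feedback and image generation.

    ask_bot(image) -> prompt text suggested by the bot
    generate_images(control_image, prompt) -> list of candidate images
    choose(candidates) -> the image the designer prefers
    """
    history = []
    current = massing_image
    for round_number in range(1, rounds + 1):
        prompt = ask_bot(current)                      # bot suggests a prompt
        candidates = generate_images(current, prompt)  # style test
        current = choose(candidates)                   # designer's decision
        history.append(
            {"round": round_number, "prompt": prompt, "selected": current}
        )
    return history


# Stub callables so the loop can be run without any AI service.
def fake_bot(image):
    return f"prompt for {image}"


def fake_generator(control_image, prompt):
    return [f"{control_image}+v{index}" for index in range(3)]


def pick_first(candidates):
    return candidates[0]


demo_history = style_testing_loop("massing.png", fake_bot, fake_generator, pick_first)
for entry in demo_history:
    print(entry)
```

The designer's choice sits inside the loop on purpose: each round ends with a human decision, so the process stays conversational instead of running from input to output in a single pass.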

Table 1 records the tools used, their respective functions, the observed design implications, and the mediums of human-AI interaction for the three stages of the demonstration.

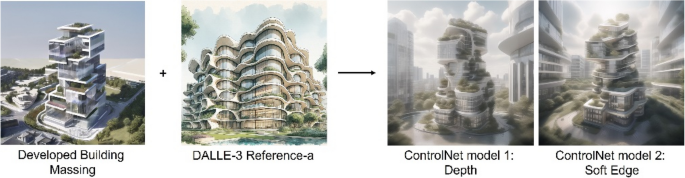

After receiving prompts or images from the AI bot in the WeChat environment, the designer uses Stable Diffusion with the ControlNet plugin for style testing. ControlNet, an open-source extension for controlling AI image generation, allows controlled output by referencing the outlines or depth of input images. Various ControlNet models are tested, evaluated, and analysed to generate inspiring images for designers, with the aim of establishing guidelines for selecting the appropriate models and weights to enhance image quality for specific design needs.

The demonstration is divided into two sections. The first involves a three-step exploration for the initial round of style testing (blue part of Fig. 5): 1. Understand the attributes of ControlNet models; 2. Conduct style tests with different models; and 3. Test different ControlNet parameters. The second section includes a two-step iteration process (pink part of Fig. 5): 1. Discuss the test results with the AI bot; and 2. Iterate the style tests.

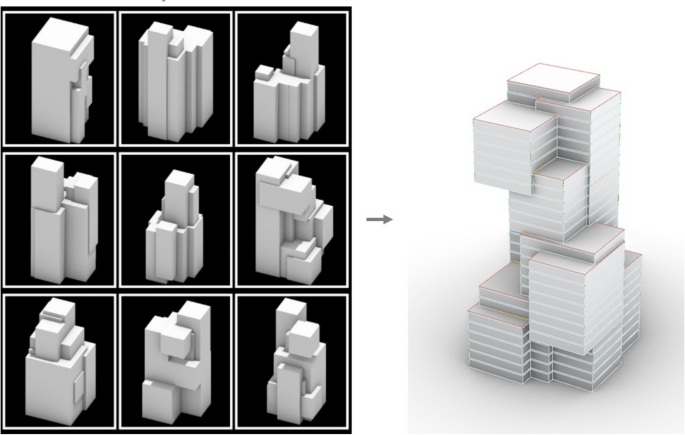

In Fig. 6, after optimising a high-rise mixed-use building design in Hong Kong, a preferred option is selected. Initial communication with the AI bot provided prompts for style testing specific to Hong Kong’s high-rise buildings. The prompt used in the demonstration is: “Apartment building, Zaha Hadid Architects, Sustainable Design Group, Vertical garden facade, Stone exterior, City skyline view, Passive solar energy utilisation, Soft lighting”. The use of the prompt is the same throughout this demonstration for easy comparison between different Stable Diffusion parameters.

Selected nine performative-optimised massing models generated by EvoMass (left) and the selected option (right)

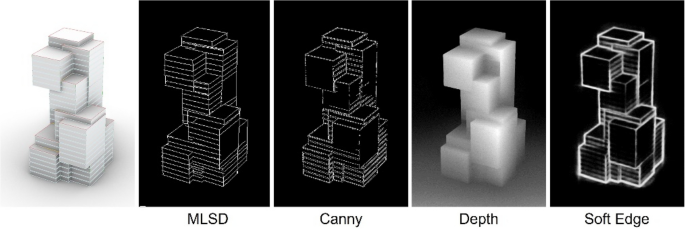

The first step is to select various ControlNet models for extracting information from the chosen massing image. Figure 6 displays four common ControlNet models, each with three weight options. Stable Diffusion uses these models for image control through edge and depth detection methods. Figure 7 visually compares how these models convert raw images into filtered layers. The models include MLSD (straight line detection), Canny (edge detection), Depth (3D depth detection), and Soft Edge (line weight detection). The images indicate that, apart from the Depth model, the other models emphasise linear features, particularly MLSD, Canny, and Soft Edge, which show strong control over straight lines.

The selected massing model image (left) and filtered images of the ControlNet models

In step two, the differences between the four introduced ControlNet models become evident in image generation (Fig. 8). The MLSD and Canny models result in building designs restricted to straight, continuous lines, making it challenging for architects to explore unique design ideas. Consequently, the Depth and Soft Edge models are favoured, with the Soft Edge model providing more detail and ultimately selected for further iteration.

Style-tested image variations with four different ControlNets (MLSD, Canny, Depth and Soft Edge)

In step three, the parameter settings of ControlNet are also tested. The MLSD model, which provides precise detection of straight lines, is combined with the previous AI-generated prompt as an example to compare the three main settings that weight the prompt against the ControlNet model, namely (1) “balanced”, (2) “the prompt is more important”, and (3) “the ControlNet is more important”. When using “balanced”, the generated image maintains high consistency with the input building blocks but responds insufficiently to the given prompt (Fig. 9).

Style-tested image variations with three different parameter settings (Balanced, “My prompt is more important”, and “ControlNet is more important”)

When prioritising “the prompt is more important”, the image shows less-controlled variations, such as curving facades and fragmented floors. In contrast, emphasising “the ControlNet is more important” results in simplistic massing models that lack environmental context. This highlights the significance of keywords in image generation. The focus is on variations provided by the prompt for further exploration of architectural language and style. In the first step of the iteration, the selected image is input into the AI bot in the WeChat environment to gather suggestions for enhancing the building’s external aesthetics (Fig. 10). The AI identifies features like “stacked and offset volumes” and “green spaces at different levels”. Subsequently, it generates five reference images based on these insights for further iterations.
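The comparisons in steps two and three vary one factor at a time while keeping the prompt fixed. The sketch below lists those runs as plain records so that they can be logged or repeated. The dictionary keys are hypothetical and do not correspond to any Stable Diffusion API; using “Balanced” as the fixed setting while the four models are compared in step two is an assumption, since the text does not state which setting was used there.

```python
# Prompt quoted from the demonstration; it stays fixed across all runs.
PROMPT = (
    "Apartment building, Zaha Hadid Architects, Sustainable Design Group, "
    "Vertical garden facade, Stone exterior, City skyline view, "
    "Passive solar energy utilisation, Soft lighting"
)

CONTROLNET_MODELS = ["MLSD", "Canny", "Depth", "Soft Edge"]
CONTROL_MODES = [
    "Balanced",
    "My prompt is more important",
    "ControlNet is more important",
]


def one_factor_runs(baseline_model="MLSD", baseline_mode="Balanced"):
    """List the style-test runs, changing one factor per step."""
    runs = []
    # Step two: compare the four models (fixed mode is an assumption).
    for model in CONTROLNET_MODELS:
        runs.append(
            {"step": 2, "model": model, "control_mode": baseline_mode, "prompt": PROMPT}
        )
    # Step three: compare the three settings using the MLSD model.
    for mode in CONTROL_MODES:
        runs.append(
            {"step": 3, "model": baseline_model, "control_mode": mode, "prompt": PROMPT}
        )
    return runs


style_test_runs = one_factor_runs()
for run in style_test_runs:
    print(run["step"], run["model"], "|", run["control_mode"])
```

Varying a single factor per step keeps differences between images attributable to either the model or the setting, which matches the stated reason for reusing the same prompt throughout the demonstration.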

Example of multimodal AI suggestion based on the style-tested design image and question on WeChat

References 1 and 2 (Fig. 11), displaying twisted facade lines and rounded corners, are selected as reference images for iteration. In step two of the iteration, the designer again switches to Stable Diffusion on the web browser and adds an additional ControlNet model “IP adapter” for transferring the style from reference images 1 and 2 to the design image. Figures 12 and 13 show that the curvy facade reference and interlocking language are reflected and merged into the final generated images while controlling the building shape. It is observed that the iteration using the Depth model creates a higher degree of variation. In contrast, the Soft Edge model is more inclined to produce images with more design fixation according to the original image. Thus, instead of selecting the “best” ControlNet model for the design process, designers can adapt the diversity of ControlNet models according to the design needs.

First batch of five reference images generated by the AI bot

Iterations by mapping the style of reference-1 into the first selected style test image

Iterations by mapping the style of reference-2 into the first selected style test image

The proposed workflow enables a detailed exploration of architectural form by integrating natural language processing (NLP), computer vision (CV), and multimodal AI tools with performance-based design optimisation. By using multiple AI tools in the post-optimisation phase, the framework enhances control over the design process, facilitating meaningful interactions through verbal and visual means. The multimodal AI tool within the messenger application fosters intuitive communication between AI tools and designers. This conversational design workflow allows iterative use of the AI bot and Stable Diffusion with optimised massing model images while accommodating various designers’ preferences in ideation and exploration. The next section will present a real-world design scenario to illustrate how this workflow can be integrated into different design development processes and strategies.

4 Case study

A design workshop for undergraduate architecture students was held at Xi’an Jiaotong-Liverpool University to showcase the proposed design workflow and agentic AI system. The design project involved renovating a mixed-use building cluster in Gusu Old Town, Suzhou, China. Many renovations in Chinese old towns tend to focus on façade repainting, often neglecting improvements to architectural typology or the preservation of traditional Chinese features. Additionally, residents often lack public spaces at the neighbourhood scale, and side streets are dim due to overgrown trees and nearby buildings (Fig. 14). Therefore, students were encouraged to propose a new typology that considers urban daylight performance and local activities while integrating traditional architectural styles.

Site plan and photos of renovation block and side road for the case study design workshop project scenario, from Baidu map

At the start of the design workshop, all tutors and three students formed a WeChat group with the AI bot as a design team. Each student was asked to develop a different concept design. The various AI-collaborative approaches and key conversations influencing the design process are detailed below. Students could also observe how their peers utilised the AI chatbot within the group chat environment, providing additional inspiration for their designs.

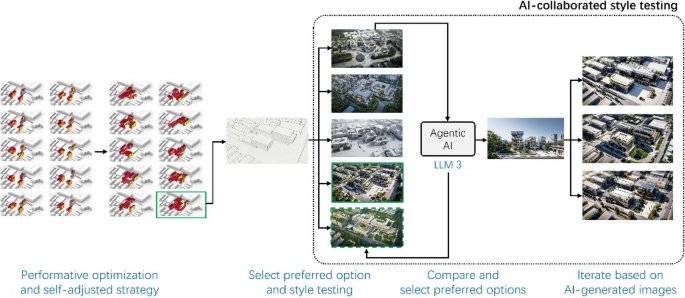

4.1 Case 1: performance-based

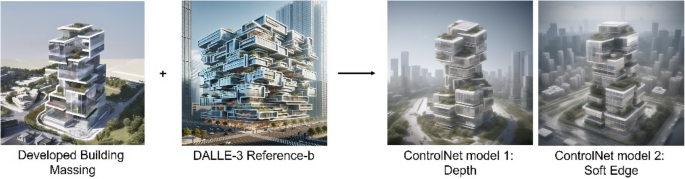

Student 1 proposed that the newly designed building could improve the neighbourhood’s quality of life by maximising sunlight exposure for surrounding structures. He began using EvoMass to intuitively understand how the building’s location and orientation could achieve this performance goal. After the initial round of performative optimisation, he manually selected a preferred option to engage in a design conversation with the agentic AI system, seeking further development advice.

Since the input was neither solely a massing model image nor a render, it activated LLM 3 of the agentic AI system. The AI explained the simulation results and offered five design suggestions. The student found two suggestions particularly interesting: “Step-like setback design reduces blockage on the south and west sides” and “using the rotation function in EvoMass to minimise shading effects on surrounding houses.” The second suggestion was especially noteworthy for the student, as he had yet to realise that such a function existed in EvoMass. The AI also highlighted its relevance to the design intent of reducing shading for nearby homes. Subsequently, one of the tutors posed a follow-up question to the AI, asking how the student might utilise the EvoMass rotation function.

Given the site boundary constraints, the rotation that could be applied was limited; therefore, Student 1 manually rotated the road-facing modules, annotated in red in Fig. 15 (right). For the southwest-facing modules, while the AI did not specify the techniques to achieve this, its earlier suggestion regarding EvoMass’s rotation function prompted Student 1 to consider switching from the Additive generation mode to the Subtractive generation mode in EvoMass, annotated in blue in Fig. 15 (right). After further manual modelling of the selected option, he sent the massing model back to the AI for additional development advice.

Sunlight simulation screenshot for AI (left) and modified massing model (right)

As the second round of conversation began with a massing model image, it activated Workflow 1, which provided web-searched case studies, design advice, and reference images. The student found the response comprehensive and relevant, but felt it was too general. To foster a more conversational discussion, a tutor then posed a follow-up question: “Regarding the comment on how the stepped design could enhance views while protecting user privacy, please elaborate and provide specific design advice”. It was noted that the student subsequently initiated rounds of follow-up questions to request a greater variety of reference image generations and inquired about the reasoning behind the AI’s suggestions. Ultimately, the student selected a desired reference image and edited the prompts using AI to conduct multiple style tests with Stable Diffusion.

Figure 16 summarises the design workflow. The conversation primarily focused on the performative design process; the agentic AI system demonstrated its ability to connect design advice with optimisation tools. This interaction inspired Student 1 to enhance his performative design strategies by utilising EvoMass’s rotation function for street-facing massing and subdivision optimisation for southwest-facing massing. As the first student to engage with the AI system, Student 1 exhibited confusion when the AI’s responses were unexpected or overly general, reflecting the unconventional nature of this mode of AI collaboration. In such cases, more experienced designers (the tutors) could serve as “student assistants” by asking follow-up questions to facilitate AI-human collaboration.

Performance-based AI-enhanced design process model by Student 1

4.2 Case 2: evaluation-based

Student 2 approached design optimisation from a practical standpoint, focusing on total floor area and sunlight hours as the main objectives. Noticing an intention for the massing to remain distant from its surroundings while close to the main road, the strategy was revised to include an objective for diagonal internal roads, inspired by Student 3. After the second round of optimisation, Student 2 felt uncertain about selecting a desired option for further development. The tutors suggested sending both options to the AI for feedback. However, as the design of the agentic AI system did not account for the comparison of multiple designs, the inquiries activated LLM 3. Drawing from Student 1’s experience, specific questions were posed, such as, “Provide an explicit comparison of the massing models, including potential challenges and opportunities, and how to further develop them in terms of massing models and style testing.” However, Student 2 did not receive useful feedback. The screenshot was taken from a lower angle, and since Student 2 was focused on the pedestrian eye-level view, the AI struggled to distinguish between the massing model and the surrounding buildings. To address this, Student 2 added floor slab lines to the massing and instructed the AI that the model with slab lines represented the current design proposal, which resolved the issue. After several rounds of conversation, a massing model was selected, manually revised, and sent to the AI for style testing advice.

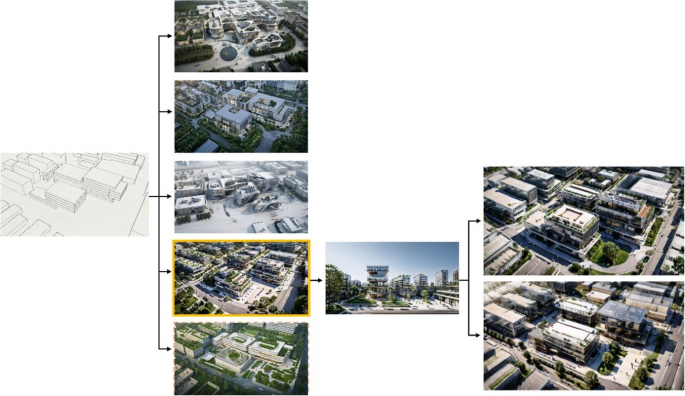

This interaction activated Workflow 1, providing a comprehensive response. Since Student 2 was hesitant to directly use the generated image as a reference for style testing, he manually compiled abstracts of verbal advice into different prompts for the first round of style testing. Following a successful discussion process regarding the massing models with the AI, Student 2 then sent various renders to the AI for comparison. After multiple conversations, instead of manually compiling prompts for the next round of style testing, Student 2 requested the AI to compile prompts with specific design directions automatically. He viewed the agentic AI system as a design team member. After completing the second round of style tests, Student 2 gained a better understanding of the agentic AI mechanism. For the next round of style tests, he decided to send the three compiled images to the AI for comparison and submit them one at a time to trigger Workflow 2 for more in-depth analysis. In the final design iteration, Student 2 selected an AI-sourced case study from a previous conversation, two AI-generated images and human-AI-compiled prompts for the final three style tests. Figure 17 illustrates key moments where various AI outputs (case study, reference image, prompt) facilitated style testing with parallel design variations.
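The routing behaviour reported in Cases 1 and 2 can be summarised as a small dispatch rule. The following Python code is a minimal sketch under stated assumptions, not the system's actual implementation: the names `ImageKind`, `Route`, `Message` and `route` are hypothetical, and the classification of each image (massing model, render, or other) is assumed to have been done upstream, since the text does not describe how the system performs it. The sketch only encodes what the cases report: a single massing model image triggers Workflow 1, a single render triggers Workflow 2, and everything else (simulation screenshots, multi-image comparisons, text-only follow-ups) falls through to LLM 3.

```python
from dataclasses import dataclass, field
from enum import Enum


class ImageKind(Enum):
    """Hypothetical image categories, assumed to be classified upstream."""
    MASSING_MODEL = "massing_model"
    RENDER = "render"
    OTHER = "other"  # e.g. a sunlight simulation screenshot


class Route(Enum):
    WORKFLOW_1 = "workflow_1"  # pre-planned massing model analysis
    WORKFLOW_2 = "workflow_2"  # pre-planned render evaluation
    LLM_3 = "llm_3"            # flexible agent for everything else


@dataclass
class Message:
    text: str
    images: list = field(default_factory=list)  # list of ImageKind


def route(message: Message) -> Route:
    """Pick a handler for one incoming chat message (illustrative rule only)."""
    if len(message.images) == 1:
        kind = message.images[0]
        if kind is ImageKind.MASSING_MODEL:
            return Route.WORKFLOW_1
        if kind is ImageKind.RENDER:
            return Route.WORKFLOW_2
    # Comparisons of several designs, other image types and
    # text-only follow-up questions go to the flexible agent.
    return Route.LLM_3


single_massing = route(Message("Please advise.", [ImageKind.MASSING_MODEL]))
two_renders = route(Message("Compare these.", [ImageKind.RENDER, ImageKind.RENDER]))
```

Under this rule, Student 2's strategy of submitting renders one at a time reaches Workflow 2, while sending several options together reaches LLM 3, which matches the behaviour described in the text.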

Development from the massing model to three different references for parallel style testing by Student 2

The AI-enhanced design process of Student 2 is illustrated in Fig. 18. In contrast to other students, Student 2 was more receptive to various design directions, resulting in more comprehensive conversations with the AI, which primarily evaluated and compared different options. The flexibility of the AI-enhanced conversation was evident as Student 2 developed methods to transfer information from the AI to style testing, utilising case studies, verbal analyses, and reference images as sources for style tests. Throughout this process, Student 2 demonstrated that the design conversation could be enhanced by exploring diverse design possibilities rather than solely seeking the best solutions.

Evaluation-based AI-enhanced design process model by Student 2

4.3 Case 3: designerly-based

Student 3 had a clear design vision of creating open internal streets for the local community and demonstrated a strong interest and experience in AI image generation using Stable Diffusion. The initial two rounds of performative optimisations focused on direct sunlight hours, visibility, and openness of internal streets, and progressed quickly. A preferred massing model was then selected and tested in various styles using different AI models with self-created prompts. Throughout this process, there was minimal engagement with the agentic AI system, and little interest in AI experimentation was evident initially. However, after observing Student 2’s experience with AI’s ability to compare design options, Student 3 sought to have the AI evaluate different renders. Drawing on the experiences of other students, Student 3 quickly adapted by actively asking the AI for clarification and elaboration without tutor intervention.

For instance, when the AI suggested the “best” option among five selected renders, Student 3 expressed doubt. They followed up with, “What if we consider local residents’ living quality as the first priority?” to uncover any underlying design judgments from the AI. When confusion arose in the AI’s response, Student 3 posed focused questions like, “How would you balance ‘newly designed open street life’ and ‘disruption of traditional lifestyles’ as you suggested? Please advise explicit design methods to achieve this.” Ultimately, useful comments from the AI were compiled into prompts, and the AI was asked to generate reference images, which were then integrated into the design for quick style experiment iterations.

Figure 19 illustrates Student 3’s progression from massing to style-testing stages, featuring five preferred options. After choosing a design option through several rounds of discussion, the AI suggested a reference image highlighting additional green space between the building and the main roads. Student 3 quickly adapted and tested different variations of this green space.

Key moments of the design process by Student 3

As shown in Fig. 20, designers with strong design intentions, like Student 3, may initially underestimate and overlook unfamiliar tools, such as AI chatbots. However, once clear examples of effective AI-human collaboration became apparent, Student 3 swiftly adjusted their design process. Throughout this phase, Student 3 engaged exclusively with LLM 3, as their inquiries concentrated on very specific design elements, which made the conversation designerly-focused. This suggests that while pre-planned workflows can offer comprehensive information, a highly flexible and responsive AI agent like LLM 3 is essential for a meaningful and in-depth conversation.

Designerly-based AI-enhanced design process model by Student 3

All the key interactions are extracted in Online Resource 1 (Sect. 2).

4.4 Count of usable interactions

Table 2 summarises the count of usable interactions and their composition, including the number of multimodal inputs (visual-question-answering) and multimodal outputs (reference image generation), which AI system was triggered, and how often tutors were involved during the conversations. Instead of filtering “usable” interaction counts based on pre-set criteria, which is argued to be biased (Shi et al., 2024), all interactions without technical errors (e.g. no response from AI) are included as usable interactions. Regarding the inclusion criteria of tutor and student involvement, when an individual is discussing his/her design project with the AI bot, any other participant’s enquiry regarding the same project is considered part of a collaborative discussion.

There are several findings. First, multimodal inputs from designers accounted for over 60% of the usable interactions, which indicates the necessity of image-processing capability for the AI. Only one-third involved multimodal outputs (AI generation of reference images), potentially because most image iteration in this workshop was carried out in Stable Diffusion rather than through the AI bot. This validates the need for multimodal AIs to receive multimodal inputs. Second, with the proposed agentic AI system, nearly 80% of the usable interactions were handled by the agentic LLM 3 rather than the pre-planned Workflows 1 and 2, indicating that the flexibility of the agentic LLM potentially gives it more general adaptability to different design scenarios. However, this does not mean that pre-planned workflows could be eliminated. For instance, in the performance-based approach of Student 1, one-third of the usable interactions were based on Workflow 1, which specialises in massing model analysis and provided more in-depth guidance in such a scenario. Similarly, the designerly-based approach of Student 3 relied on Workflow 2 for almost 20% of his usable interactions to evaluate and advise on his rendered images comprehensively. Finally, the involvement of others (tutors or students) during a human-AI design conversation accounted for about one-third of the usable interactions, potentially implying that deploying AI in a collaborative environment supports effective design discussions. However, we cannot conclude whether agentic AI has an advantage over pre-planned workflows, or whether the collaborative medium enhances design conversation, because the study lacks a comparison setup such as a control group. Therefore, further analysis is conducted through semi-structured interviews and questionnaires in Sects. 5 and 6.
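The counting rule described above (keep every interaction without a technical error, then report the share of each attribute) can be illustrated with a short tally. The following Python code is a minimal sketch under stated assumptions: the `Interaction` record, its field names and the `summarise` function are hypothetical, and the example log is invented purely for illustration. It does not reproduce the workshop data or the values in Table 2.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Interaction:
    """One logged exchange with the AI bot (hypothetical record layout)."""
    student: str
    route: str              # "workflow_1", "workflow_2" or "llm_3"
    has_image_input: bool   # multimodal input (visual question answering)
    has_image_output: bool  # multimodal output (reference image generation)
    others_involved: bool   # a tutor or peer asked about the same project
    technical_error: bool = False  # e.g. no response from the AI


def summarise(log):
    """Apply the inclusion rule, then compute shares over usable interactions."""
    usable = [i for i in log if not i.technical_error]
    n = len(usable)
    if n == 0:
        return {"usable": 0}
    routes = Counter(i.route for i in usable)
    return {
        "usable": n,
        "image_input_share": sum(i.has_image_input for i in usable) / n,
        "image_output_share": sum(i.has_image_output for i in usable) / n,
        "others_involved_share": sum(i.others_involved for i in usable) / n,
        "route_share": {r: c / n for r, c in routes.items()},
    }


# Invented example log, NOT the workshop data.
example_log = [
    Interaction("S1", "llm_3", True, False, False),
    Interaction("S1", "workflow_1", True, True, False),
    Interaction("S1", "llm_3", False, False, True),
    Interaction("S1", "llm_3", True, False, False, technical_error=True),
    Interaction("S1", "llm_3", False, True, False),
]
summary = summarise(example_log)
```

Because the only exclusion criterion is a technical error, the tally avoids any judgement about which interactions were "useful", in line with the inclusion rule stated above.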

5 Semi-structured interviews

The interview aims to collect the three students’ feedback on the proposed framework and tools according to their experience during the workshop. The feedback covers the effectiveness of the conversational framework, developed AI tools and collaborative environment. The identified potential and challenges will be referenced for future improvement. Three separate 30-min one-on-one interviews were conducted with the students. Six questions were prepared to address the three aspects of the research, including the effectiveness of the conversational design framework, group messaging environment and the developed AI system, as listed below:

- Q1: What are the differences between this AI-enhanced method and traditional methods?

- Q2: What are the differences between your expected and actual design experience?

- Q3: How do you feel about using a collaborative environment (WeChat) compared to a 1-to-1 scenario?

- Q4: What are the biggest challenges?

- Q5: What were the biggest, ideal improvements for the next version of the AI bot?

- Q6: How do you view the role of the AI bot in your creative process?

5.1 Question 1 response (difference with traditional design)

All three students commented that AI assisted in decision-making by providing “objective” advice, whereas decisions in the traditional design process relied predominantly on human designers’ discussion and subjective judgment. Student 2 mentioned it helps “loosen the cognitive burden of decision making when there are too many available options”. On the other hand, Student 1 compared it with his experience of using Stable Diffusion to support quick style tests, which he called the “traditional AI-assisted design”. He felt that the biggest difference was that, with chat and image-processing functions integrated, it was the first time AI seemed to be more than a drawing tool and instead worked on the design process together with him. Despite the advantages mentioned, Students 2 and 3 found new drawbacks compared to the traditional human discussion method. When human designers discuss, they naturally explain their preferences logically, but the AI sometimes gave unpromising suggestions or lacked logical clarification.

It is important to note that, although the students commonly stated that AI helped by providing objective comments, scholars such as Inie and Derczynski (2021) have stated that “Numbers and code offer the illusion of objectivity”, which is consistent with the observation of Students 2 and 3 that the AI response potentially lacks logic in reasoning. Hence, mechanisms such as explainable AI (XAI) or Chain of Thought (Wei et al., 2022) should be integrated into the AI bot to enhance its reliability.

5.2 Question 2 response (expectation vs reality)

Before participating in the workshop, Student 1 had anticipated that the AI would operate simply like a typical image generator, similar to Midjourney, just using WeChat as the interface. The AI bot’s capability to engage in continuous conversations to explore ideas more deeply was a pleasant surprise. Similarly, Student 2 expected the AI bot to function like a standard text-to-image generator. She was surprised by the extensive information provided by the AI and its ability to engage in ongoing dialogue while interpreting images. This surprise was largely due to her previous experience with AI when OpenAI first released ChatGPT in 2022, when its performance was less advanced than it is now.

On the other hand, Student 3 had high expectations that the AI bot could automatically modify massing model images, similar to Stable Diffusion with the ControlNet function. However, the AI’s image feedback often seemed general and not sufficiently targeted. This highlighted a contrast between the convenience of AI platforms, such as WeChat, and the inconsistency of their responses. Students’ expectations are closely tied to their prior AI experiences, directly influencing their willingness to use AI tools. Therefore, setting reasonable expectations for designers before starting design tasks is important.

5.3 Question 3 response (WeChat group environment)

All three students expressed different attitudes to this question. Student 1 had positive feedback; he found the WeChat group environment beneficial as it allowed him to get real-time inspiration from classmates. Student 2 felt neutral about designing with an AI bot in a group chat environment. She was comfortable sharing her design process with others and sometimes gained insights from observing her peers’ approaches. She also found the AI bot on WeChat more convenient than web-based versions such as ChatGPT. In contrast, Student 3 preferred a one-on-one private chat mode for the design process, as it helped him avoid cognitive overload and distractions from seeing others’ conversations. He suggested that if an AI were included in a group chat, it could function as a secretary to facilitate discussions while serving as a design partner in private conversations. In summary, the differences in opinions suggest that even if the WeChat platform is convenient and intuitive to use, the roles of AI bots (e.g. AI as a designer or a secretary) could be introduced flexibly according to different settings (one-to-one private chat or group chat) to fit the designers’ preferences. Therefore, multi-agent system techniques could be investigated in future developments so that the role of the AI bot can change according to user enquiries.

5.4 Question 4 response (biggest challenge)

All three students stated that the biggest challenge was that the AI bot’s responses were too general and that they had to explore prompt engineering techniques to have effective conversations. Both Students 2 and 3 pointed out that the AI was not “architectural focus” enough when interpreting design enquiries. For example, the AI advised on Suzhou garden styles with stereotyped Chinese gardens. When the AI bot was asked about future development, it suggested structural system development or fire safety, whereas the students used “future development” to mean massing design iterations and style testing. Student 3 especially mentioned that it would be a significant improvement if the AI bot could respond in a more human-like manner, offering multi-faceted answers akin to those of a teacher addressing simple problems. Regarding the introduced workflows, Student 1 specifically expressed being overwhelmed by the lengthy replies generated from the workflows. He had to sift through extensive, multi-perspective answers to extract relevant information. These comments then set the starting point for Question 5.

5.5 Question 5 response (ideal AI function)

All three students advised that integrating the Stable Diffusion function (with ControlNet) into the AI bot could greatly improve the seamlessness of the conversation, as this method is becoming so common that almost all designers could use it during early-stage design exploration. Responding to the comments received from Question 4, Student 1 suggested that the AI bot could guide the designers in understanding how to ask effectively (prompt engineering techniques) when their questions are too general. Students 2 and 3 expressed a strong interest in an AI bot capable of simultaneously processing multiple images. Student 2 suggested it because she believed she could gain more useful insights and inspiration presented logically if the AI could explain its thought process through a sequence of images (e.g., from massing models to renderings) or sequences of texts in a storytelling presentation manner. She also mentioned that multiple-image generation could be used in parallel instead of sequentially, offering design variations as divergent suggestions. On the other hand, Student 3 considered multi-image processing from the user-friendliness perspective. He stated that if AI could process multiple images simultaneously, comparing different options would be more efficient, since he currently needed to manually compile multiple renders into one image for the AI to compare and evaluate.

5.6 Question 6 response (role of AI)

Student 1 viewed the AI bot as a teaching assistant capable of suggesting ideas designers might not have considered. Student 2 viewed the AI bot as a design assistant, especially during the ongoing discussions in the design workshop. However, she expressed a desire for the AI to be further developed as a teaching assistant, offering guidance throughout the design process to enhance productivity, which she described as “I wish AI could provide useful guidance even if I asked too-general questions”. For Student 3, the AI served as both a design assistant and a teaching assistant, providing feedback and supporting the thinking process. This was particularly beneficial in improving efficiency when the designer had a clear intent or concept that involved convergent questioning. He hoped that the future AI would analyse the characteristics of the designer’s challenges, such as design concepts, technical inquiries, or style testing, hence fine-tuning its role automatically as a design assistant, teaching assistant or secretary.

In summary, students highlighted their feelings about AI’s roles as a design partner and teaching assistant while expecting it to be capable of being a multi-role AI bot. Echoing the Question 3 summary, this again emphasises the potential need to explore integrating multi-agent systems in which AI roles can be flexible based on the users’ enquiries.

Interview findings, key insights and potential future development from the six semi-structured interview questions are summarised in Table 3 below.

In conclusion, students’ satisfaction with how the proposed framework allows “continuous conversation” and “loosening the cognitive burden of decision making” through discussion validates that the proposed framework enables conversational design processes. Although students raised points for improvement, such as the AI response’s “lack of reasoning”, these suggestions also indicate their desire to know more about the logic behind the AI bot’s decision making: they treat the discussion with the AI bot as more than a simple, linear application of image-generation software, and see it as an AI bot with which they would like to have a deeper design discussion. Although students expressed different opinions on whether 1-to-1 or group chat environments are more suitable for design conversations with AI bots, they agreed that deploying AI bots on WeChat, a common messaging platform, allows immediate and intuitive application with a minimal learning curve. Finally, feedback on the developed AI system was also mixed, for example weighing the usefulness of comprehensive responses against the extra effort of sifting through a long response. Further quantitative analysis of this trade-off is explored in Sect. 6.

6 Questionnaires

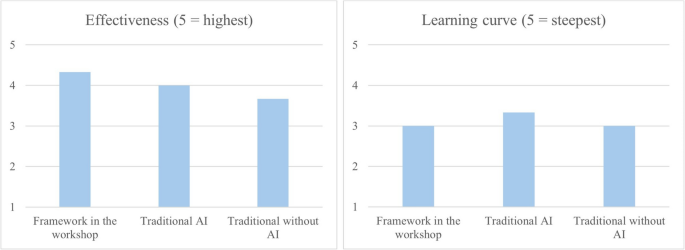

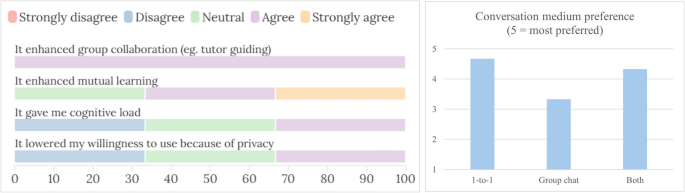

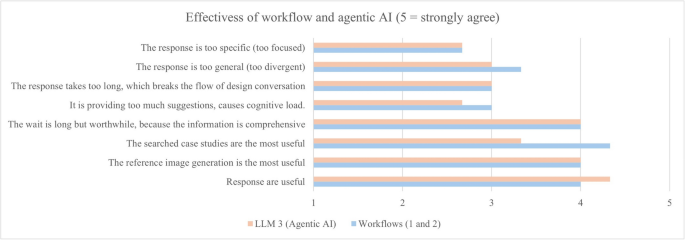

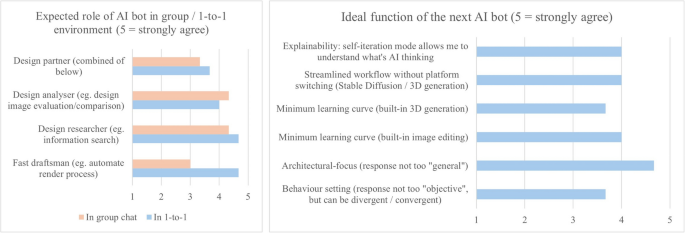

Seven questions were designed for the students regarding the effectiveness of the proposed framework and AI system. Figure 21 shows that the proposed framework has slightly higher effectiveness compared to using traditional AI tools (e.g. Midjourney, ChatGPT) and designing without AI, while maintaining the same level of learning curve. Although the advantage is not significant, it displays the potential of the proposed framework as an alternative design framework that can be applied by designers. Regarding the group chat environment (Fig. 22), the results show that while it provides opportunities for group collaboration and enables mutual learning, drawbacks such as cognitive load and privacy concerns depend on the designer’s preference and design habits. Also, instead of having group chat as the only option, the students preferred to have either 1-to-1 chat or both individual and group chat environments available. Regarding the relative usefulness of the developed workflows and agentic AI, students indicated that workflows are more effective than agentic AI in providing searched case studies, whereas agentic AI outperforms workflows in several aspects, including the usefulness of its responses, which cause less cognitive load (Fig. 23). The roles of AI are considered to differ according to collaboration modes. An AI bot is mostly expected to be a design analyser for evaluating and comparing design proposals in a group chat environment, while its roles as a design researcher and draftsman are much needed in an individual environment (Fig. 24). For future development, the AI bot is expected to be more architecture-focused by streamlining existing AI applications such as image editing and enhancing AI’s explainability to facilitate deeper design discussions.

Survey results comparing the effectiveness and learning curve between the proposed framework and traditional frameworks (with and without AI)

Survey results regarding design experience using WeChat as a medium, and medium preferences

Survey result of effectiveness comparison between the workflows and agentic AI

Survey results of expected role of AI bot, and ideal function for the next AI bot development

In conclusion, the questionnaire findings verify that the proposed design framework is a feasible design method with a similar learning curve to traditional design methods. The group chat environment enhances mutual learning and design collaboration. However, drawbacks like cognitive load and privacy concerns vary among individuals, which leads to future potential settings of having both individual and group chat options available. Finally, future development should focus on streamlining common AI-enhanced design exploration, such as image editing, to be more architectural-focused.

7 Discussion

The three demonstrations illustrate the practicality of integrating an agentic multimodal AI system into a collaborative design environment during the early stages of architectural design exploration. The proposed framework and the developed AI tool address three common challenges in current AI-enhanced design processes: one-to-one communication limitation, the steep learning curve associated with advanced AI systems, and AI’s limited understanding of architectural concepts. It is important to note that the intention of this research is to experiment with alternative design approaches by designing with AI, rather than replacing or speeding up traditional design workflows. Therefore, the analyses focus on understanding the effectiveness by identifying the opportunities and challenges of the proposed conversational design framework.

There are three main contributions: (1) validation that the proposed framework allows a conversational, collaborative design process rather than input–output interaction, (2) potential immediate application in educational and practice contexts because of the small learning curve shown throughout the process, and (3) a deeper understanding of the effectiveness of a multimodal, agentic AI system for the design process. First, three undergraduate architecture students showcased three distinct approaches: performance-based, evaluation-based, and designerly-based. Under the proposed framework, the distinctive approaches display how designers (students) could experiment and learn different ways of designing with the AI bot for design exploration, rather than design fixation. Students expressed in interviews and questionnaires that the proposed framework had higher effectiveness in the design process than common AI tools or traditional design methods with AI because the proposed framework allows continuous discussion with the AI bot. Such a reflective and learning nature observed from the design processes illustrates how AI can enhance the conversational design process, as suggested by Pask (1980) and Schön (2017).

Second, from the standpoint of research and AI education in architectural design, the proposed framework highlights minimal learning curves for using AI tools. The deployment of an AI bot on a common messaging platform allows the designers’ immediate application. Existing research, such as the knowledge-based system of Ferguson et al. (2015) and several multi-modal LLM-based design analysers, required the use of specific design software (Revit) or coding knowledge to train and use the system (Homayounirad, 2023; Sabah et al., 2024). By comparison, the proposed framework allows students to focus on the design process with minimal time spent mastering complex AI systems. The designed workflows demonstrate potential benefits for students, particularly those with less experience, who often pose less comprehensive questions for design guidance. The more flexible agent (LLM 3) enables a wider range of students to ask unexpected and follow-up questions, validating how agentic AI could enhance human-AI collaboration, as envisioned by Abedin et al. (2022) and Ibarrola et al. (2024). Integration with the WeChat group provides a collaborative environment that permits tutors to participate in discussions by asking additional questions. Furthermore, mutual learning techniques and new AI-enhanced design methods can emerge among students, as observed in the workshop. The questionnaire indicates that, despite drawbacks such as cognitive overload from simultaneous conversations among multiple design members and concerns about privacy during design discussion, the advantages of mutual learning and design collaboration outweigh the concerns. More importantly, offering both 1-to-1 and group chat modes with AI bots is highly recommended. This approach can extend Bolojan’s vision of AI collaboration with other stakeholders, such as engineering consultants and clients, in real-world practice (Bolojan et al., 2024). A knowledge-based system could integrate project information and domain knowledge from various areas of expertise with AI agents.

Finally, the questionnaires suggest that the flexibility of the agentic LLM outperforms pre-planned workflows in several aspects, such as providing more concise and useful responses. However, the workflows provided markedly more targeted case studies to the students. In a group chat environment, an AI bot is expected to be the best fit as a design analyser. Instead of establishing an expert AI analysis system as a design analyser, such as a self-trained NLP system (Homayounirad, 2023) or a voting system (Gerber et al., 2015), the proposed LLM-based framework allows flexible adaptation by providing context to the AI system through natural language communication. In a 1-to-1 scenario, an AI bot is expected to be more flexible, with various design exploration capabilities such as information search and design image generation. In future development, it is crucial to position “architectural focus” as the main aim, with aspects such as enhancing the AI’s explainability by providing more logical reasoning behind its design decisions and further reducing reliance on separate AI tools by allowing 2D and 3D design through natural language communication.

7.1 Limitations and future research

Several limitations and challenges present potential research directions, which can be examined from two perspectives: collaborative design environment and conversational design process.

In conversations with AI within a collaborative environment using WeChat, which allows for immediate interaction, designers’ acceptance is influenced by concerns about exposing their design processes to others. Cognitive overload was observed when highly iterative human-AI conversations containing multimodal information were visible to all designers, even though two participants found observing others’ interactions with AI beneficial. This necessitates careful consideration of the AI’s role in collaborative design settings. For instance, assigning one design-focused AI bot to each designer in a private chat might be beneficial, while a separate AI serving as a manager or secretary could be employed in a group chat environment, depending on designers’ preferences. Although the case study was conducted in a collaborative setting, the students primarily developed their projects independently. Further experimentation with multiple designers working on a single project could better assess the effectiveness of the proposed design framework.

Regarding AI functionality during the design process, all three students mentioned that the AI’s responses were often general until specific follow-up questions were posed. The developed agentic AI system currently incorporates only two workflows and one agentic AI, which simplifies the complex range of possible design scenarios. A more generalised design approach for the agentic AI system is needed to enhance adaptability for various designers. The vision outlined by Andrew Ng (Sequoia Capital, 2024) reflects four design patterns for agentic AI models, integrating emergent techniques such as planning and multi-agent collaboration that could help address these challenges. For multimodal functions like basic image generation, the transition between AI conversation and other tools, such as Stable Diffusion, still presents a significant learning curve for students without prior AI experience. Therefore, the design of the agentic AI system could benefit from improved prompt engineering and the integration of commonly used AI tools like Stable Diffusion with ControlNet. Lastly, while the knowledge base enables the AI to respond with domain-specific knowledge, the AI often needs to make connections between abstract concepts, such as the relationship between design software functions and design ideas. More advanced techniques like knowledge graphs should be explored to enhance application effectiveness.

8 Conclusion

In conclusion, this paper proposes an AI-enhanced design framework tailored for a collaborative design environment. This framework is supported by a developed, architecture-focused, agentic multimodal AI system integrated into WeChat, a widely used messaging application that facilitates group discussions. The feasibility of this approach is demonstrated through a design workshop. Case studies of three students’ AI-enhanced design processes reveal three design strategies applicable to early-stage architectural design. This illustrates that the conversational properties between human designers and AI envisioned by architectural cyberneticians could be enhanced with the proposed framework and AI application. A quantitative analysis of the usable human-AI interactions, questionnaires and a semi-structured interview were conducted to gain deeper insights into the students’ reflections on AI’s challenges and potential uses. This research contributes by validating the conversational design framework and the developed multimodal, agentic AI system in a collaborative environment, as well as by displaying potential immediate applications in educational and practice contexts. Finally, the research implications are discussed alongside the identified challenges and future research opportunities.