Abstract

Feedback is a complex dialogic, sociomaterial practice that is shaped by digital affordances, institutional norms and various contextual factors. Even though it is widely recognised as crucial to academic development, student perspectives on the practice of feedback remain underexplored. This study examines appraisal strategies in social media discourse and academic writing by analysing the use of attitude, engagement and graduation markers in over 5,000 posts and comments on r/college and 100 research articles on feedback. Using a mixed-methods approach that combines advanced statistical modelling and qualitative appraisal analysis, the study identifies prominent register-specific patterns. Students’ reflections and comments are predominantly attitude-driven due to the prolific use of attitude markers and upscaling to engage in joint emotion regulation when processing past feedback experiences. This appraisal strategy encourages affiliative alignment and reflects the interactive and affective nature of online discussions. In contrast, academic writing is characterised by engagement-driven variation, with scholars employing contractive and expansive appraisal resources to negotiate disciplinary interpretations. Educational researchers favour entertain, appreciation and focus markers to evaluate conceptualisations and processes of feedback. The findings confirm the emotional impact of critique and reveal students’ preference for clear, empathetic, actionable feedback, while frustration arises from vagueness, unfairness or excessive criticism. Academics view feedback as a structured, discursive process that should be constructive, evidence-based and compliant with disciplinary norms, recommending transparency, justification and cultural responsiveness. Recognising the students’ standpoint and suggestions can inform the co-design of more effective feedback practices that combine emotional responsiveness and goal-oriented guidance for self-development.

Introduction

Providing and receiving feedback is an essential yet complex sociocultural practice in higher education that seeks to advance academic development and create inclusive, engaging learning environments (Carless, 2019; Hattie & Timperley, 2007). While feedback requires substantial educator input, it is increasingly viewed as a collaborative process that both improves academic skills and supports emotional self-regulation (Matthews et al., 2023; Nicol, 2020). However, feedback processes may not always meet students’ needs, especially when their expectations or learning preferences are not adequately considered (Carless & Boud, 2018). Miscommunication between educators and learners, for example, can prevent these processes from stimulating self-reflection. As a discursive practice, feedback is influenced by multiple factors, such as power relationships, communicative mode and cultural context.

The present study compares two contrasting feedback-related corpora: students’ online reflections on feedback in more than 5,000 posts and comments, extracted from the subreddit of r/college (301,687 words), and researchers’ conceptualisations of feedback, taken from the discussion and conclusion sections of 100 peer-reviewed journal articles (174,773 words). The r/college corpus represents learners’ spontaneous responses to feedback in an oral-like register, while the research corpus includes more formally structured, academic discourse. By analysing both datasets, the investigation mainly hopes to foreground the differences between how feedback is experienced and evaluated by students and how it is theorised and communicated by scholars, which may lead to the co-design of more effective feedback practices.

Appraisal theory has been previously applied to analyse evaluative language in feedback provision in diverse research areas, such as healthcare communication, higher education policy and student engagement. For example, Baker et al. (2019) investigate how patients express their attitude and emotions in online feedback. Davies (2023) examines how appraisal resources shape feedback discourse in UK university policy documents. Zhang and Hyland (2022) analyse students’ evaluative stances and engagement with feedback in a higher education context. Yet, corpus-assisted appraisal studies remain scarce.

Martin and White’s (2007) Appraisal framework accounts for interpersonal meaning-making through Attitude (emotional evaluations), Engagement (negotiating standpoints) and Graduation (force and focus). While predominantly concerned with tenor, it also acknowledges the influence of field and mode and how situational contexts may shape evaluative language choices. The present study extends the framework to register, recognising that appraisal markers encode communicative goals and wider discourse contexts (Egbert et al., 2024). Unlike studies on grammatical stance (Biber, 1988; Larsson et al., 2024), which tend to focus on (lexico)grammatical features, this paper examines the variability of evaluative meaning, thus providing a complementary perspective.

Using a mixed-methods Corpus-Assisted Discourse Studies (CADS) approach, the analysis draws on both exploratory multivariate statistical techniques, such as k-means clustering, and close qualitative analysis to uncover salient appraisal patterns in the two registers. These patterns reveal how students and academics employ appraisal resources to construe meaning and illuminate noteworthy contrasts in interpersonal focus and rhetorical objectives. More specifically, the study explores how learners and scholars appraise feedback and how their standpoints and strategies differ across registers. It further contrasts the interpersonal, hybrid nature of Reddit discussions, where opinion- and information-sharing intertwine (Biber & Horowitz, 2023), with the more formal tone of academic research articles. The analysis attends to internal variation within each register through its focus on how participants construe attitudinal and dialogic meanings around the topic of feedback.

The remainder of the paper is structured as follows. The “Literature Review” section reviews previous research. The “Methodology” section outlines the methodology, including statistical methods. The “Quantitative Findings” section presents quantitative results. The “Student Standpoint on Feedback” section explores students’ appraisal strategies, while the “Academic Standpoint on Feedback” section examines the academic standpoint on feedback. The “Further Discussion and Conclusion” section discusses implications, especially with regard to the impact of register and shifting conceptualisations of feedback, and the “Conclusion” concludes the study.

Literature Review

Previous Studies

Feedback research has expanded greatly in recent years and predominantly studies the role of critiquing as an interpersonal dialogic process (Ajjawi & Boud, 2017; Carless, 2019; Cunningham & Link, 2021; Raaper, 2018). Effective feedback is said to cater for learners’ emotional and cognitive needs. However, its uptake may be hindered by limited student agency (Winstone et al., 2016). Molloy et al. (2019) argue that students should actively seek, use and generate feedback. Educators also need to understand how the practice is construed and conceptualised by researchers to be able to deliver useful feedback, as Boud and Dawson (2021) explain.

Context and mode significantly impact learner engagement with feedback, as seen in Zhang and Hyland’s (2018) study on automated comments. Traditional, critique-driven feedback practices that lack sensitivity to students’ circumstances or cultural background may result in rejection or poor integration (Carless & Boud, 2018). Investigations of appraisal strategies that account for both student and educator perspectives, such as learners’ emotion regulation after negative feedback experiences or researchers’ sharing of new insights on feedback, may contribute new insight into how this could be prevented. Gravett (2020) adopts a sociomaterial view of the concept and underlines the need to examine student engagement with feedback outside of the binary dialogical interaction with educators. They recommend looking into issues that relate to students’ diverse backgrounds and other non-human factors, such as institutional context or technological tools, and recognise that feedback is more than merely providing comments or discussing assessments. Feedback, especially negative critique, also has a considerable emotional impact, which may greatly affect dyadic relationships, as described by Alam and Singh (2021) in their study of emotion regulation strategies adopted by supervisors and employees. This affective impact tends to manifest through the use of evaluative language, while the expression of individual and collective perspectives on the topic involves the employment of various engagement strategies.

Negotiating Interpersonal Meanings

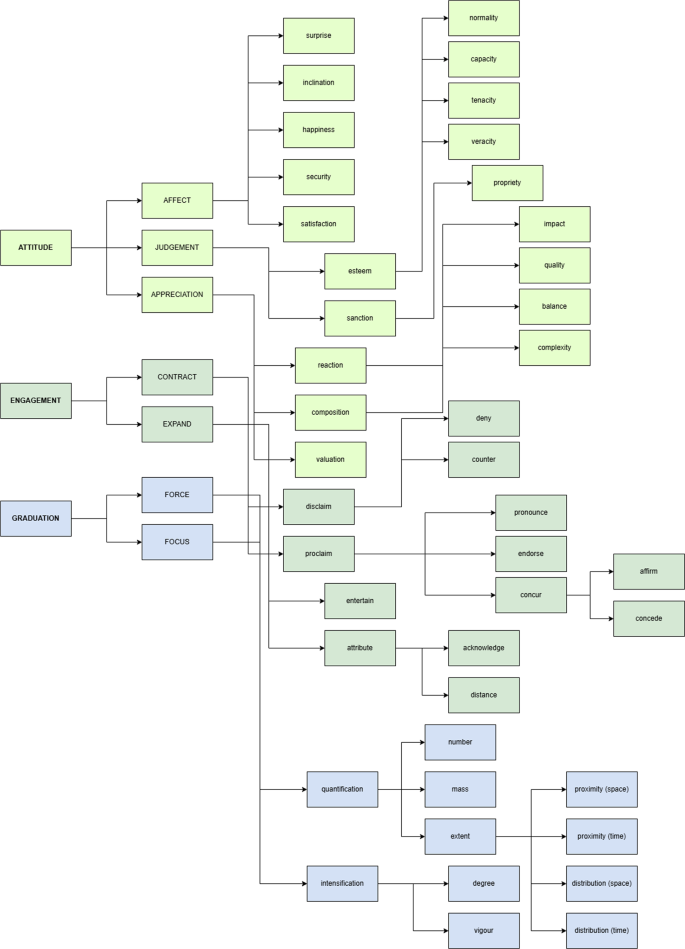

Grounded in SFL, Appraisal theory describes how language encodes emotion, evaluation and intersubjective positioning (Martin & White, 2007). The taxonomy includes the systems of Attitude (emotions, moral evaluations and aesthetic judgments), Engagement (contractive or expansive dialogic positioning) and Graduation (gradability or scaling) (Fig. 1). Feedback relies heavily on evaluative language use. Attitude markers, for example, can convey criticism or praise (e.g. “You will most likely get a “looks good” and a few sentences more”) (Hyland & Hyland, 2019).

Ideally, effective feedback maintains the right balance between criticism and reinforcement to sustain motivation and positive relationships (Carless, 2019). While studies such as Cunningham (2019) or Lander (2015) have applied the Appraisal framework to feedback, ongoing research into evaluative strategies, assessing feedback as a practice, is needed since the large majority of Appraisal investigations in higher education have explored other areas, such as online instruction during the COVID-19 pandemic (Meishar-Tal & Levenberg, 2021), collaborations with learning advisors (Macnaught et al., 2022) or inequality in second language teaching (Llinares & Evnitskaya, 2021).

The Appraisal framework, based on Read and Carroll (2012)

Computational Tools in Discourse Analysis

The field of corpus linguistics has long complemented Appraisal theory and SFL in exploring meaningful discourse patterns, while machine learning and statistical methods are increasingly expanding the scope of such analyses (Matthiessen, 2019). For example, Hunston (2013) adopts a corpus approach to show how evaluative language may reveal ideological positioning, and O’Halloran et al. (2018) demonstrate the usefulness of digital tools such as data mining for multimodal analysis. Argamon (2019) and Schweinberger (2024) further show how computational methods can help uncover prominent patterns in linguistic variation, which are then contextualised through qualitative interpretation.

In CADS, log-likelihood ratio (LLR)-based keyword analysis is frequently employed, as it can identify statistically significant lexicogrammatical or appraisal markers in a target corpus compared to a reference corpus and facilitate data-driven exploration of the features and their possible functions (Gabrielatos, 2018). However, as Baker (2018) notes, LLR calculations should be carefully interpreted due to possible uneven marker distributions, which may affect representativeness, and complemented with other quantitative measures and qualitative analysis.
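As a minimal sketch of the keyness calculation described above, the two-corpus log-likelihood statistic can be computed from a marker's frequency in each corpus and the two corpus sizes. The function name `log_likelihood` and the marker counts below are hypothetical and serve only as illustration; the corpus sizes are those reported for the two datasets. This is not necessarily the exact routine used in the study.

```python
import math

def log_likelihood(freq_target, freq_ref, size_target, size_ref):
    """Two-corpus log-likelihood (G2) for a single marker.

    Expected frequencies assume the marker is spread across both corpora
    in proportion to corpus size; zero observed counts contribute nothing.
    """
    total = freq_target + freq_ref
    combined_size = size_target + size_ref
    expected_target = size_target * total / combined_size
    expected_ref = size_ref * total / combined_size
    ll = 0.0
    for observed, expected in ((freq_target, expected_target),
                               (freq_ref, expected_ref)):
        if observed > 0:
            ll += observed * math.log(observed / expected)
    return 2 * ll

# Corpus sizes as reported in the paper; the counts are invented.
RCOLLEGE_WORDS = 301_687
RESEARCH_WORDS = 174_773
hypothetical_counts = {"think": (1200, 150), "therefore": (40, 310)}

for marker, (in_rcollege, in_research) in hypothetical_counts.items():
    score = log_likelihood(in_rcollege, in_research,
                           RCOLLEGE_WORDS, RESEARCH_WORDS)
    print(marker, round(score, 2))
```

A score above 3.84 corresponds to p < .05 at one degree of freedom, but, in line with Baker's (2018) caution, such values are best read alongside dispersion measures rather than in isolation.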

Despite their usefulness, unsupervised machine learning tools such as Principal Component Analysis (PCA) and k-means clustering are less well-known techniques in CADS. PCA reduces dimensionality in multivariate datasets, retaining key variance while uncovering latent patterns of variation (Biber, 1991; Jolliffe & Cadima, 2016). According to Bro and Smilde (2014), PCA is a “powerful and versatile method capable of providing an overview of complex multivariate data” (p. 2829). Biber’s (1988) multidimensional analysis of register variation used Factor Analysis, which is closely related to PCA (Levshina, 2015).

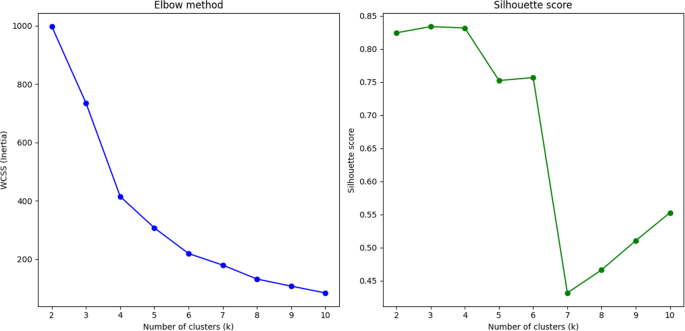

Hierarchical k-means clustering, a more widely used data mining technique, partitions datasets into clusters that minimise internal variance (Lloyd, 1982). This method is particularly helpful for grouping appraisal resources into dominant clusters for further qualitative investigation. For example, clusters may reveal contrasts between attitudinal and engagement markers, enabling a clearer understanding of marker distribution and co-occurrence. However, the interpretative stage is critical, as it ascertains that clusters represent register-specific meanings, depending on one’s research objectives. Subjectivity in describing clustering outcomes may be resolved through the use of theoretically established appraisal categories and functions (Martin & White, 2007) and the addition of systematic validation methods, such as the Elbow method (Thorndike, 1953) and silhouette score (Rousseeuw, 1987). Biber et al. (2021) apply k-means clustering to identify “new bottom-up text-type categories” (p. 35), which they deem to better reflect situational characteristics than predefined registers.

PCA and k-means clustering fulfil complementary roles. While reducing the data’s dimensionality can help identify general patterns of variability, clustering points to specific groupings of appraisal markers, which facilitates further investigation of co-occurrences and the markers’ specific functions in the discourse according to theory-defined categories. By supplementing the PCA and cluster results with sufficient contextualisation, these statistical tools may offer suitable methods for generating an initial hypothesis with regard to the use of appraisal in the two registers. Nevertheless, to ensure the relevance and validity of the obtained patterns, statistical biases and interpreter subjectivity need to be taken into consideration and addressed. Accurate data annotation is therefore essential to guarantee high-quality output.

Validation and Subjective Bias

While unsupervised machine learning may provide a powerful means for pattern detection based on frequency distribution, statistical testing and appropriate validation techniques are also needed to make sure the quantitative results are reliable. A Chi-square test, for example, can confirm statistically significant differences in terms of appraisal marker use. However, this only provides a broad indication of significance, without detailing the strength or nature of relationships (Sharpe, 2015). Subsequent residual analysis can overcome this limitation by pinpointing markers that deviate from expected distributions. Another valuable test is ANOVA, which may be performed to determine significant divergences in terms of principal component scores across clusters and theoretical categories, such as, in this case, Attitude, Engagement and Graduation (Field, 2024).

Even though quantitative results may provide a solid foundation for identifying patterns and testing initial hypotheses, the patterns still require close narrative analysis to fully understand how appraisal resources function in their textual and social contexts (Bednarek, 2024). Various registerial variables, such as topic, audience, communicative purpose and participants’ rhetorical goals, continuously shape discourse and must be accounted for. For example, social media platforms tend to encourage interpersonal alignment through sharing reflections, emotions and personal experiences, which leads to the frequent use of entertain (e.g. think) and attitude (e.g. good) resources.

While the aforementioned popular statistical tools may validate divergences in appraisal choices between two registers, Pearson residual analysis can further illuminate over- or under-represented appraisal markers, revealing internal variation (Desagulier, 2017). For example, think may exhibit high residual values for social media data due to its role in expressing subjective viewpoints, while showing lower values for academic registers (Van Poucke, 2025). This method moves beyond frequency counts to uncover meaningful deviations and may offer insights into how the markers are deployed in divergent contexts. Rooted in Gauss’ early 19th-century regression model, residual analysis is especially effective when combined with PCA, as it can help identify key appraisal markers systematically without arbitrary, subjective selection. By firmly anchoring such statistical methods in the Appraisal framework, quantitative results remain theory-informed, addressing possible concerns regarding ambiguous use.

Computational tools are well suited to detecting statistically significant appraisal markers, but the analysis still needs to be contextualised through rigorous annotation and qualitative interpretation. K-means clustering, for example, can partition markers into meaningful clusters, which subsequently need to be analysed in their immediate textual context to address the limitations of so-called ‘bag-of-words’ models. A mixed-methods approach can explain how appraisal resources vary across registers while mitigating researcher subjectivity, enabling the identification of meaningful patterns and the exploration of rhetorical strategies and effects, while increasing the credibility and relevance of findings. Halliday’s (1993) visionary weather analogy already implied that the frequencies in a corpus may provide insight into the system’s probabilistic tendencies. For example, a high frequency of attitude markers (e.g. useful) may suggest an increased systemic probability of attitudinal appraisal based on situational use of appraisal markers. However, as mentioned earlier, these probabilities always need to be linked to the corresponding appraisal functions through thematic analysis to elucidate how the markers shape writers’ communicative goals in each register.

Methodology

Data Collection

The r/college corpus consists of social media posts and comments from the subreddit of the same name, and contains 5,308 posts and 4,257 replies by 2,666 predominantly English-speaking undergraduate and postgraduate students (Table 1). The search queries included ‘feedback,’ ‘assessment’ and ‘higher education.’ Multilingual entries were excluded from the study. The online register is characterised by dialogic peer interactions, in which users share feelings, opinions and experiences informally to build solidarity. The research corpus includes the discussion and conclusion sections of 100 published articles on feedback in higher education since these are sections where authors tend to express their standpoint, evaluate findings and make recommendations, which is closely linked to appraisal. For example, appreciation markers may be used to assess the notion of feedback and graduation markers may reinforce findings (e.g. “An increasing awareness of the significance of dialogue within learning interactions is highly valuable”). Previous studies suggest that appraisal is highly concentrated in discussion and conclusion sections (Hyland, 2018). Formal academic registers predominantly target an audience of other researchers and teaching professionals. The term ‘feedback’ occurred in 490 posts (733 times).

Preprocessing involved converting text to lowercase, removing hyperlinks, correcting spelling errors and applying normalisation and standardisation to ensure compatibility with the clustering algorithm. Any identifying information, especially in the Reddit data, was removed to maintain anonymity.

Research Methods

Quantitative Analysis

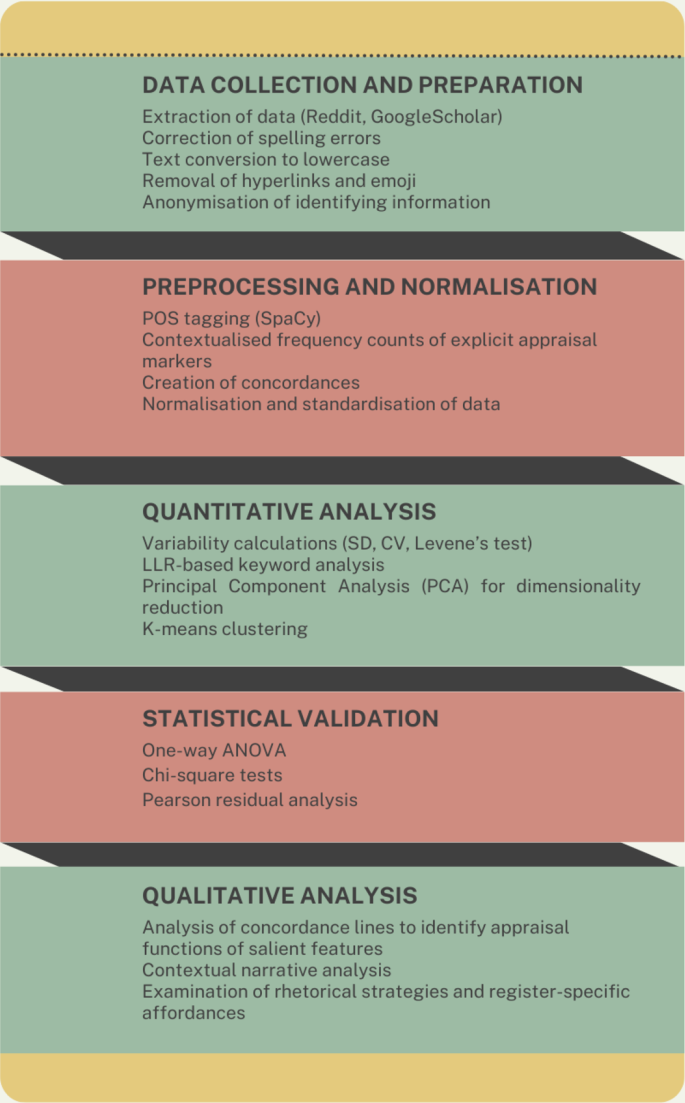

The primary aim of the quantitative analysis was to identify and validate the distribution of appraisal markers across the two datasets. Using the Appraisal framework as a guideline, explicit appraisal markers were retrieved in each corpus based on part-of-speech (POS) tagging with spaCy and organised into CSV files (Fig. 2). Contextualised frequency counts enabled accurate identification, for example distinguishing between the verbal use of like (e.g. “I like the style of writing”) and its prepositional counterpart (e.g. “It’s like pulling teeth”).

Martin and White (2007) take both inscribed (explicit) and evoked (implicit) appraisal into account. However, only explicit appraisal markers, such as modal verbs or evaluative adjectives, which are easier to classify consistently, were included in the frequency count to ground the analysis in observable patterns that can be reliably measured (Fuoli & Hommerberg, 2015). Divjak (2019) agrees that this approach yields better results. The exclusion of implicit markers was mitigated by exploring contextual meanings during the qualitative stage. Separate columns were included in the coding for the normalised frequencies of each category so that the clustering would reveal appraisal patterns as clear-cut dimensions across the three categories, revealing how the categories interact numerically.

An LLR-based keyword analysis identified the top 30 appraisal markers with the highest log-likelihood values, distinguishing those significantly more frequent in one dataset compared to the other. Variability metrics, including standard deviation (SD) and coefficient of variation (CV), were applied to the normalised frequencies to explore lexical dispersion, considered more reliable for analysing variability than Juilland’s D (Biber et al., 2016). More specifically, CV was used to verify whether markers were characteristic of the dataset as a whole. According to Gries (2021), considering dispersion is important to understand how evenly a feature is distributed in a corpus.
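The two variability metrics can be illustrated with a short sketch. It assumes that each marker contributes one normalised frequency and that the sample standard deviation is used; the paper does not state which SD variant was applied, so both choices, the function name `dispersion` and the example values are assumptions made for illustration.

```python
import statistics

def dispersion(normalised_freqs):
    """Return (SD, CV) for a list of normalised marker frequencies.

    SD captures absolute variability; CV (SD divided by the mean) captures
    variability relative to the average frequency, which makes datasets
    with different mean frequencies comparable.
    """
    mean = statistics.mean(normalised_freqs)
    sd = statistics.stdev(normalised_freqs)  # sample SD (an assumption)
    cv = sd / mean if mean else float("nan")
    return sd, cv

# Hypothetical normalised frequencies, one value per appraisal marker.
example = [0.0120, 0.0031, 0.0008, 0.0004, 0.0002, 0.0001]
sd, cv = dispersion(example)
print(f"SD = {sd:.4f}, CV = {cv:.2f}")
```

Because CV divides by the mean, a dataset can show a lower SD and still a higher CV when its markers are rarer on average, which is the pattern the study reports for the research corpus.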

Principal Component Analysis (PCA) was applied to reduce dimensionality and capture variance across data to show relationships between appraisal markers. The components’ top features provided insight into how the appraisal resources cluster within the three main theoretical categories of Attitude, Engagement and Graduation. Since appraisal markers tend to co-occur in everyday language use, this method can help identify co-occurrences and inform further interpretation. Consider the following example taken from the r/college corpus: “Your solutions simply aren’t [engagement] gonna work, and your attitude about it is really, really [graduation] shitty [-attitude].” A component dominated by engagement markers that contract the dialogic space (e.g. but) may indicate a tendency towards disalignment, as shown in this example from the same dataset: “My English teacher is nice [+ attitude], but [engagement] he’s a tough [-attitude] grader.”
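To make the dimensionality-reduction step more concrete, the sketch below runs a small PCA on three columns standing in for the normalised Attitude, Engagement and Graduation frequencies. It is a dependency-free illustration built on power iteration with deflation, not the routine used in the study; in practice a library implementation such as scikit-learn's `PCA` would be the usual choice. All function names and data values are hypothetical, and the columns are assumed to be non-constant.

```python
import math
import random

def standardise(rows):
    """Z-score each column using the sample standard deviation."""
    n, m = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(m)]
    sds = [math.sqrt(sum((r[j] - means[j]) ** 2 for r in rows) / (n - 1))
           for j in range(m)]
    return [[(r[j] - means[j]) / sds[j] for j in range(m)] for r in rows]

def covariance(rows):
    """Sample covariance matrix of columns that are already centred."""
    n, m = len(rows), len(rows[0])
    return [[sum(r[i] * r[j] for r in rows) / (n - 1) for j in range(m)]
            for i in range(m)]

def top_eigenpair(matrix, iterations=500, seed=0):
    """Dominant eigenvalue and eigenvector via power iteration."""
    m = len(matrix)
    rng = random.Random(seed)
    vec = [rng.gauss(0, 1) for _ in range(m)]
    norm = math.sqrt(sum(x * x for x in vec))
    vec = [x / norm for x in vec]
    for _ in range(iterations):
        nxt = [sum(matrix[i][j] * vec[j] for j in range(m)) for i in range(m)]
        norm = math.sqrt(sum(x * x for x in nxt))
        if norm == 0:
            break
        vec = [x / norm for x in nxt]
    # Rayleigh quotient of the (unit-length) vector gives the eigenvalue.
    value = sum(vec[i] * sum(matrix[i][j] * vec[j] for j in range(m))
                for i in range(m))
    return value, vec

def pca(rows, n_components=2):
    """Return (explained-variance ratios, component weights)."""
    cov = covariance(standardise(rows))
    m = len(cov)
    total = sum(cov[i][i] for i in range(m))
    ratios, weights = [], []
    for _ in range(n_components):
        value, vec = top_eigenpair(cov)
        ratios.append(value / total)
        weights.append(vec)
        # Deflate so the next pass finds the next-largest component.
        cov = [[cov[i][j] - value * vec[i] * vec[j] for j in range(m)]
               for i in range(m)]
    return ratios, weights

# Hypothetical rows: one marker each; columns: Attitude, Engagement, Graduation.
markers = [
    [0.012, 0.000, 0.000],
    [0.009, 0.000, 0.001],
    [0.000, 0.008, 0.004],
    [0.000, 0.006, 0.005],
    [0.001, 0.000, 0.007],
    [0.000, 0.003, 0.000],
]
ratios, weights = pca(markers)
print([round(r, 3) for r in ratios])
print([[round(w, 2) for w in component] for component in weights])
```

The sign of each component's weights is arbitrary, so only their relative magnitudes indicate which appraisal category drives a component.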

K-means clustering complemented PCA and grouped appraisal markers based on their distribution patterns. The Elbow method and silhouette scores determined the optimal cluster number (Biber et al., 2021; Kassambara, 2017). All results were visualised through scatter plots. As Desagulier (2017) explains, clustering is useful to explore the data and generate a hypothesis. For example, clusters that are dominated by affect and upscaling may point towards exaggerated emotions (e.g. “I feel really bad”), whereas clusters that focus on entertain markers (e.g. think, may) may indicate a dominant engagement strategy that seeks to promote reflection or speculation, as illustrated in this student comment: “Given the lack of syllabus or any way to regularly see your grades, I think you may [engagement] have some grounds to protest.”
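The clustering step and the Elbow heuristic can be sketched as follows. This is a plain implementation of Lloyd's algorithm under simple assumptions (random initial centroids drawn from the data, Euclidean distance, a fixed seed); the function names and the two-dimensional points, which stand in for markers' scores on PC1 and PC2, are hypothetical rather than taken from the study.

```python
import random

def squared_distance(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans(points, k, iterations=100, seed=0):
    """Lloyd's algorithm: returns (labels, centroids, inertia)."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        labels = [min(range(k), key=lambda c: squared_distance(p, centroids[c]))
                  for p in points]
        # Update step: move each centroid to the mean of its members.
        updated = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                updated.append([sum(dim) / len(members) for dim in zip(*members)])
            else:
                updated.append(centroids[c])  # leave an empty cluster in place
        if updated == centroids:
            break
        centroids = updated
    labels = [min(range(k), key=lambda c: squared_distance(p, centroids[c]))
              for p in points]
    # Inertia: total within-cluster sum of squared distances.
    inertia = sum(squared_distance(p, centroids[lab])
                  for p, lab in zip(points, labels))
    return labels, centroids, inertia

# Hypothetical marker positions in PCA space.
points = [[0, 0], [0, 1], [1, 0], [10, 10], [10, 11], [11, 10]]

# Elbow method: inspect how inertia falls as k grows and look for the bend.
for k in range(1, 5):
    _, _, inertia = kmeans(points, k)
    print(k, round(inertia, 3))
```

The "elbow" is the value of k after which further clusters reduce inertia only marginally; because k-means depends on its starting centroids, several seeds are usually compared before settling on a solution.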

PCA offers an overview of the underlying relationships between markers, while contextual analysis of outliers identifies unexpected marker behaviour (Jolliffe & Cadima, 2016).

ANOVA was used to test differences in normalised frequencies between the datasets and validate statistical significance in marker distributions. A Chi-square test compared marker counts across the oral-like and written registers and verified divergences in terms of clusters and categories. Pearson residual analysis followed, pinpointing over- and under-represented markers that contributed most substantially to the Chi-square differences. Residual values exceeding ±2 were considered significant (Sharpe, 2015).
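The relationship between the Chi-square statistic and the Pearson residuals can be shown in a few lines. The sketch assumes a contingency table with one row per marker and one column per corpus; the function name `pearson_residuals`, the marker list and all counts are hypothetical and are not taken from the study's data.

```python
import math

def pearson_residuals(table):
    """Return (chi-square statistic, residual table) for a contingency table.

    Each residual is (observed - expected) / sqrt(expected); the Chi-square
    statistic is the sum of the squared residuals.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    residuals = []
    chi_square = 0.0
    for i, row in enumerate(table):
        residual_row = []
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / grand_total
            residual = (observed - expected) / math.sqrt(expected)
            residual_row.append(residual)
            chi_square += residual ** 2
        residuals.append(residual_row)
    return chi_square, residuals

# Hypothetical counts: columns are (r/college, research).
markers = ["think", "good", "may", "therefore"]
counts = [[900, 120], [700, 200], [300, 450], [40, 310]]

chi_square, residuals = pearson_residuals(counts)
print("chi-square:", round(chi_square, 2))
for marker, row in zip(markers, residuals):
    # Flag cells beyond the ±2 threshold used in the study.
    flags = ["over" if r > 2 else "under" if r < -2 else "-" for r in row]
    print(marker, [round(r, 2) for r in row], flags)
```

Reading the residuals cell by cell shows which markers drive an overall significant result, which the omnibus test alone cannot indicate.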

Research method

Qualitative Analysis

The quantitative results informed the subsequent qualitative interpretation. To explain how prominent appraisal markers function across the two registers, this step focused on the over- and under-represented appraisal markers identified earlier. Over-represented markers were considered characteristic of the registers. Under-represented features, on the other hand, reveal which markers are being avoided by writers. For example, engagement markers are bound to overpopulate the research corpus since the academic writers’ main communicative goal is argumentation, rather than the expression of emotion. Focusing on statistically significant appraisal markers aids in comparing and contrasting the two registers, as it clearly shows similarities and differences in terms of appraisal use. The markers were linked to specific subcategories or functions (e.g. proclaim: pronounce) in the text and analysed within the situational and broader context, based on Appraisal theory (Martin & White, 2007). Neighbouring lines were examined to understand the narrative flow, rhetorical strategies and salient patterns. This process triangulates quantitative results with qualitative interpretation, addressing context-specific use while linking appraisal patterns to register-specific affordances and constraints. As such, it may clarify how contextualised appraisal use differs between the two registers and make meaningful connections between appraisal markers and their discourse-pragmatic functions, as recommended in Bednarek et al.’s (2024) guidelines.

Quantitative Findings

The quantitative analysis aimed to identify key appraisal markers in the datasets, which involved LLR-based keyword analysis for the identification of prominent markers, PCA and k-means clustering for the exploration of co-occurrence patterns and Pearson residual analysis for the examination of over- and under-represented markers.

Variability in Spoken Versus Written Registers

The oral-like r/college sub-register demonstrates greater absolute variability in appraisal marker use compared to the written research register (SD: 0.0064 vs. 0.0042), which suggests that students tend to adapt appraisal resources based on emotion, audience and context in the social media environment. Conversely, the research dataset displays lower absolute variability but higher relative variability (CV: 3.19 vs. 2.95 for r/college), likely due to adherence to discipline-specific conventions. Levene’s test indicates that the differences are statistically significant, F(1, 298) = 5.22, p = .023, confirming that appraisal markers in the speech-like sub-register show greater dispersion, which supports the idea that this type of discourse adapts to communicative contexts. Although prior studies have examined variability in grammatical stance constructions (Biber, 1991; Larsson et al., 2024), appraisal variability is interpreted here as a rhetorical, evaluative phenomenon, as appraisal markers function beyond syntactic constraints, shaping interpersonal alignment, affinity and epistemic positioning in discourse. The results confirm Biber’s (2006) observation that stance expressions are more common in spoken registers than in academic writing. However, as mentioned earlier, the present study concentrates on appraisal strategies within Martin and White’s (2007) framework. The results suggest that greater absolute variability in appraisal also reflects the oral-like nature of social media discourse, with students specifically tailoring appraisal resources to other users and the platform’s affordances. In contrast, researchers seem to adopt more constrained yet strategically varied engagement styles that mainly aim to reinforce epistemic authority.

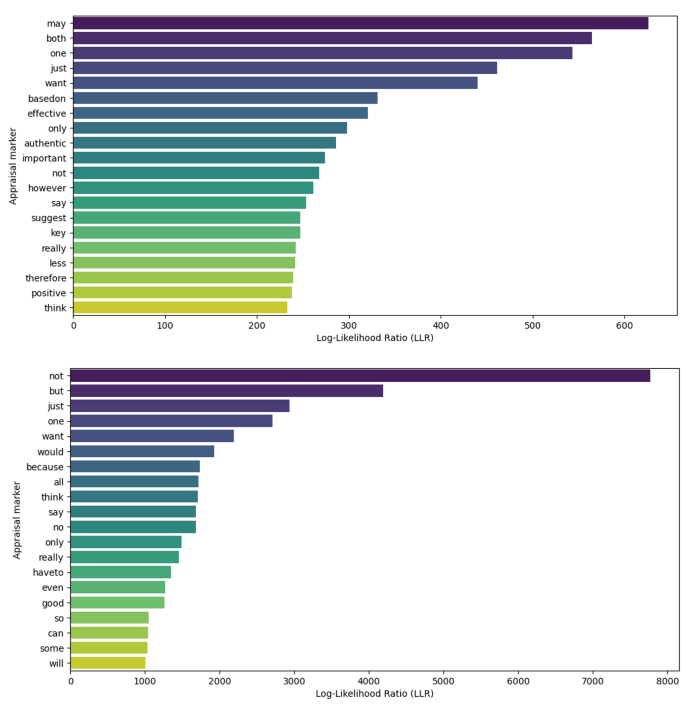

LLR-Based Keyness

After establishing how the variability of appraisal markers differs between the two registers, the next step explored salient markers in each dataset through LLR-based keyword analysis (see Fig. 3a and b) (Gabrielatos, 2018). Figure 3a displays the top 20 appraisal markers in the r/college dataset, revealing a blend of informal markers typical of online opinion-based registers, such as just and really, which function as graduation resources and indicate intensity (e.g. “Would it be worth asking or would it just annoy her?”), or think, which is an engagement resource that is commonly employed to express subjective evaluation (e.g. “I think the concept of self-plagiarism is absurd”).

In contrast, Fig. 3b shows that the research dataset features more formal appraisal markers, such as one, based on or therefore, corresponding to different appraisal strategies. For example, the academic discourse regularly includes statements such as “Based on these results, we suggest that teachers implement more technology enhanced feedback-assisted collaborative writing” or “Therefore, we suggest that educators should consider how assessment can orient students towards a future self in a digital world.”

While both corpora share several engagement resources, such as may, not, say or think, the academic dataset shows a wider range of this type of marker, confirming Larsson et al.’s (2024) findings. A typical example is the following: “However, in some unfortunate situations, learners may not always receive adequate feedback,” which demonstrates hedging and formal argumentation.

The prevalence of markers such as want and think in the r/college dataset contributes to a dialogical style that is highly characteristic of social media interactions, as in “I just want to know why it marked me down, and what I did wrong,” whereas effective and authentic are associated with subjective evaluations: “Since the comments are on specific parts of it, they are much more authentic and deep.” Markers such as say suggest informal referencing practices or hearsay evidence: “They say this professor is the worst.” The researcher corpus, on the other hand, reveals an evidence-based communication style that tends to privilege objectivity, logical reasoning and critical discussion since key markers include not, but and because for structured argumentation (e.g. “Self-assessment without explicit feedback is problematic not only because it is difficult to notice its influence, but also because it is subject to biases”), would for hypothesising (e.g. “Using generative AI, instead of teachers, to frequently provide feedback would be especially problematic”), have to for recommendations (e.g. “The formulation of questions with model answers has to be carefully considered”) and can for exploring possible solutions (e.g. “When the proposed suggestions are specific, students’ attention can be focused and feedback can be more directed”).

(a) Top 20 appraisal markers by LLR r/college dataset. (b) Top 20 appraisal markers by LLR research dataset

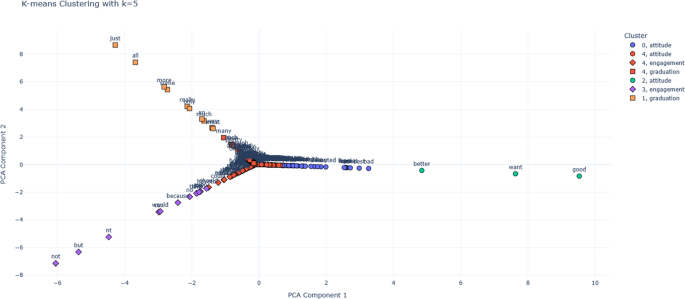

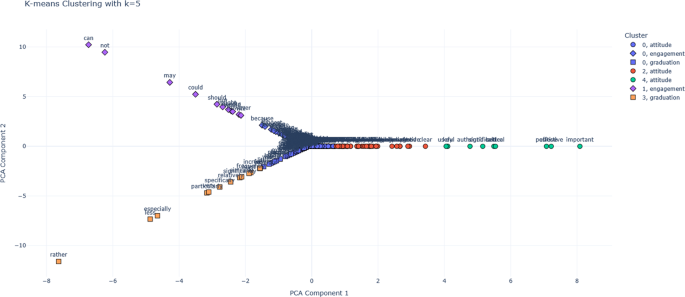

PCA and Clustering Results

Prominent Co-Occurrence Patterns

The PCA reveals two primary components that account for significant proportions of the variance. In the r/college dataset, PC1 is driven by Attitude (loading: 0.96), reflecting strong emotional and evaluative meanings, while PC2 captures Engagement and Graduation (loadings: 0.71 and 0.70), indicating practical, action-oriented aspects. Together, PC1 and PC2 account for 85.09% of the variance (PC1: 50.36%; PC2: 34.73%). In the research dataset, Attitude also loads heavily on PC1 (0.97), while PC2 reflects Engagement and Graduation (loadings: 0.68 and 0.70). Combined, PC1 and PC2 explain 74.08% of the variance (PC1: 40.95%; PC2: 33.13%). These results illustrate the functional differences between the two datasets, with PCA capturing 70–90% of the variance, which is generally regarded as an acceptable outcome (Desagulier, 2017).

K-Means Cluster Analysis

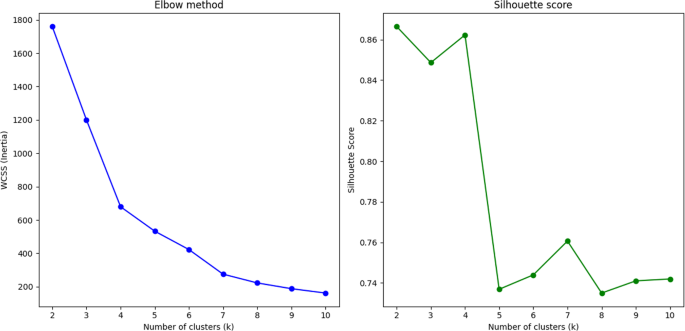

Clustering, informed by PCA dimensions, shows register-specific preferences for certain appraisal markers, which are explained below. The Elbow method determined k = 5 as the optimal number of clusters, supported by the silhouette analysis (Figs. 4 and 5). K-means clustering segmented the data into five clusters based on appraisal co-occurrence patterns and PCA contributions. These clusters group appraisal markers by shared features, which are to be interpreted based on Appraisal theory (Fig. 1).

Elbow method and silhouette score r/college dataset

Elbow method and silhouette score research dataset
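The logic of choosing k with the Elbow method and the silhouette score can be sketched as follows. This is a minimal illustration on made-up two-dimensional points, not the study's data or code. The farthest-first seeding is swapped in here only to keep the example deterministic, and all function names are hypothetical.

```python
import math


def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def farthest_first(points, k):
    """Deterministic seeding: start at the first point, then repeatedly add the
    point that lies farthest from the centroids chosen so far."""
    centroids = [list(points[0])]
    while len(centroids) < k:
        candidate = max(points, key=lambda p: min(squared_distance(p, c) for c in centroids))
        centroids.append(list(candidate))
    return centroids


def assign(points, centroids):
    """Index of the nearest centroid for every point."""
    return [
        min(range(len(centroids)), key=lambda c: squared_distance(p, centroids[c]))
        for p in points
    ]


def kmeans(points, k, max_iterations=100):
    """Lloyd's algorithm; returns labels and inertia (within-cluster sum of squares)."""
    centroids = farthest_first(points, k)
    for _ in range(max_iterations):
        labels = assign(points, centroids)
        updated = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            # An empty cluster keeps its previous centroid.
            updated.append([sum(col) / len(members) for col in zip(*members)] if members else centroids[c])
        if updated == centroids:
            break
        centroids = updated
    labels = assign(points, centroids)
    inertia = sum(squared_distance(p, centroids[lab]) for p, lab in zip(points, labels))
    return labels, inertia


def mean_silhouette(points, labels):
    """Average silhouette width; singleton clusters score 0 by convention."""
    clusters = {}
    for p, lab in zip(points, labels):
        clusters.setdefault(lab, []).append(p)
    scores = []
    for p, lab in zip(points, labels):
        own = clusters[lab]
        if len(own) == 1 or len(clusters) < 2:
            scores.append(0.0)
            continue
        a = sum(math.dist(p, q) for q in own) / (len(own) - 1)
        b = min(
            sum(math.dist(p, q) for q in other) / len(other)
            for other_lab, other in clusters.items() if other_lab != lab
        )
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(scores) / len(scores)


if __name__ == "__main__":
    # Hypothetical marker coordinates in PCA space (three visible groups).
    toy_points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10), (20, 0), (20, 1), (21, 0)]
    for k in range(2, 6):
        labels, inertia = kmeans(toy_points, k)
        print(f"k={k}: inertia={inertia:.2f}, silhouette={mean_silhouette(toy_points, labels):.3f}")
```

The elbow is the value of k after which inertia stops dropping sharply, while the silhouette score rewards clusters that are compact and well separated. Agreement between the two criteria is what the text above reports for k = 5.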

Figures 6 and 7 show the cluster boundaries. In the r/college dataset, Cluster 0 is attitude-dominant and encompasses both positive and negative evaluations (bad, best, hate, love, like), revealing strong mixed emotions about feedback (Fig. 6). Cluster 1 focuses on graduation resources of force (really, so) and quantification (all, most), indicating exaggerated expressions regarding feedback’s impact. Cluster 2 is a small, positive-attitude cluster (good, want, better) that suggests a constructive, future-oriented perspective. Cluster 3 focuses on engagement markers, including entertain (think, can, would), attributive (say), proclaim (have to, will) and disclaim (not, but) resources, which help develop a critical, argumentative standpoint. Cluster 4 blends affective markers (wish, glad, confused, annoying) with positive appreciation (positive, important), accentuating emotional reactions to feedback. In sum, clusters 0, 2, and 4 emphasise Attitude, while clusters 1 and 3 prioritise Graduation and Engagement. The clustering shows that the social media discourse tends to express and exaggerate emotion. However, it must be reemphasised that these results constitute a preliminary hypothesis regarding the possible functions of the appraisal markers.

Clustering of r/college appraisal markers. An interactive version of this graph is available from: https://margolvp.github.io/appraisal-clusters-feedback/college_clusters_plot.html

For the research dataset (Fig. 7), Cluster 0 mixes moderately evaluative attitudinal resources (dynamic, desirable, passive, poor) and shows critical evaluation of feedback practices. Cluster 1 is dominated by engagement resources and distinguishes possibility modals (might, could) from necessity (semi-)modals (should, need to), indicating hedging and evidence-based recommendations. Cluster 2 highlights appreciation markers (good, clear, complex) and thus appears to focus on various qualities. These markers play an important role in the evaluation of findings and in convincing the reader to adopt a similar standpoint. Cluster 3 features graduation resources of force (increasingly, significantly, relatively) and focus (particularly), used to grade evaluations and modulate precision. Cluster 4 emphasises strong positive appreciation, with valuation resources (important, effective, significant) evaluating key research findings. The clusters accentuate critical evaluation and precision, which are typical of evidence-based reasoning and compliance with scholarly conventions, such as objectivity. This pattern seems to reflect common tendencies in academic writing.

Clustering of research appraisal markers. An interactive version of this graph is available from: https://margolvp.github.io/appraisal-clusters-feedback/research_clusters_plot.html

ANOVA and Chi-Square Tests

A one-way ANOVA confirmed statistically significant differences across clusters for attitude, engagement and graduation markers in both datasets. For the r/college dataset, differences were observed for Attitude, F(4, 447) = 646.25, p < .001, Engagement, F(4, 447) = 265.36, p < .001, and Graduation, F(4, 447) = 189.87, p < .001. The research dataset showed similar distinctions: Attitude, F(4, 755) = 1941.51, p < .001, Engagement, F(4, 755) = 282.52, p < .001, and Graduation, F(4, 755) = 129.43, p < .001. The findings validate the clusters as meaningful groupings of appraisal markers.
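For readers who want to trace how an F statistic and its degrees of freedom are obtained, a minimal sketch follows. The group values are invented, and the function names are hypothetical. The only figures taken from the text are the reported degrees of freedom, from which the number of analysed units can be recovered (df within + df between + 1), assuming each unit is one marker.

```python
def one_way_anova(groups):
    """Return (F, df_between, df_within) for a list of numeric groups."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    group_means = [sum(g) / len(g) for g in groups]
    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (mean - grand_mean) ** 2 for g, mean in zip(groups, group_means))
    # Variation of the observations around their own group mean.
    ss_within = sum((x - mean) ** 2 for g, mean in zip(groups, group_means) for x in g)
    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


def implied_sample_size(df_between, df_within):
    """Number of observations implied by the reported degrees of freedom."""
    return df_within + df_between + 1


if __name__ == "__main__":
    # Hypothetical attitude scores for markers in three clusters.
    toy_groups = [[1.0, 2.0, 3.0], [2.0, 3.0, 4.0], [5.0, 6.0, 7.0]]
    f_stat, df_b, df_w = one_way_anova(toy_groups)
    print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
    print("r/college units implied by F(4, 447):", implied_sample_size(4, 447))
    print("research units implied by F(4, 755):", implied_sample_size(4, 755))
```

With five clusters, df between is 4 in both datasets, so the reported df within values correspond to 452 and 760 analysed units respectively. A p value would additionally require the F distribution, for which a routine such as `scipy.stats.f_oneway` is typically used.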

Chi-square tests further confirmed statistically significant relationships between the clusters and appraisal categories for the r/college dataset (χ²(8) = 124.62, p < .001) and the research dataset (χ²(8) = 201.40, p < .001), indicating that the distribution of attitude, engagement and graduation markers across clusters is non-random and supporting the hypothesis of divergent appraisal resource use in each dataset. Even though the ANOVA and Chi-square tests have already confirmed the statistically significant patterns, a residual analysis can reveal which markers drive them.
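The test of independence reported above can be reproduced in outline as follows. The 5 × 3 table of counts is invented, with clusters as rows and the three appraisal systems as columns; this layout is an assumption that happens to match the reported 8 degrees of freedom, since (5 − 1)(3 − 1) = 8. The critical value of roughly 26.12 for p = .001 at 8 degrees of freedom is taken from standard chi-square tables.

```python
CRITICAL_DF8_P001 = 26.12  # approximate table value for df = 8, alpha = .001


def chi_square(table):
    """Pearson chi-square statistic and degrees of freedom for a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of rows and columns.
            expected = row_totals[i] * col_totals[j] / total
            statistic += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, dof


if __name__ == "__main__":
    # Hypothetical marker counts: rows = clusters 0-4,
    # columns = attitude, engagement, graduation.
    toy_table = [
        [60, 10, 8],
        [5, 12, 40],
        [30, 6, 4],
        [8, 55, 9],
        [45, 7, 6],
    ]
    statistic, dof = chi_square(toy_table)
    print(f"chi-square({dof}) = {statistic:.2f}")
    print("exceeds the .001 critical value:", statistic > CRITICAL_DF8_P001)
    # The statistics reported in the text can be checked against the same threshold.
    print("reported values exceed it:", 124.62 > CRITICAL_DF8_P001, 201.40 > CRITICAL_DF8_P001)
```

An exact p value needs the chi-square distribution function; `scipy.stats.chi2_contingency` is one commonly used routine that returns the statistic, the p value, the degrees of freedom and the expected counts together.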

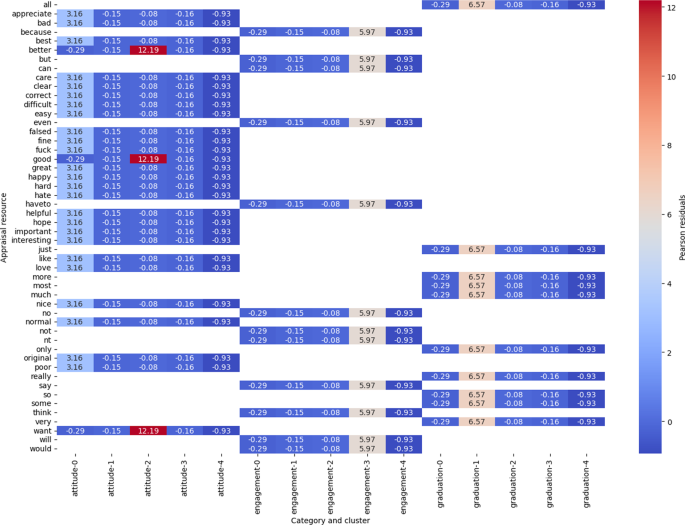

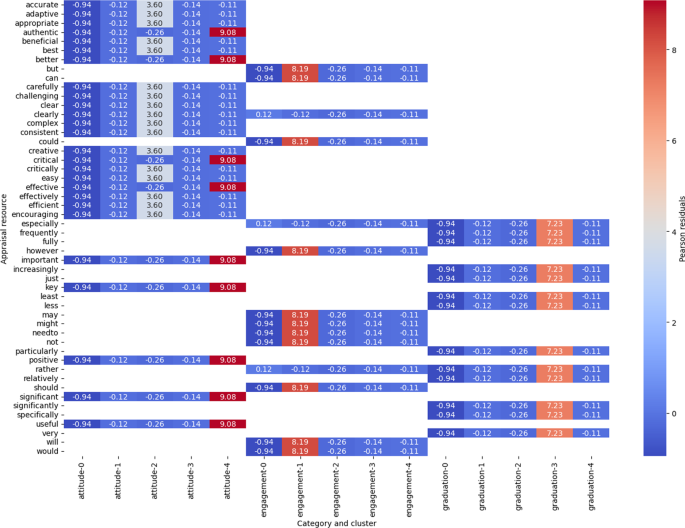

Pearson Residual Analysis

Residual analysis was conducted to identify deviations from expected values, with large residuals (> 2) identifying clusters and categories contributing significantly to the Chi-square statistic. Figures 8 and 9 visualise the distribution of residuals across clusters in a heatmap, pinpointing markers with notable deviations from expected frequencies. Pearson residuals indicate where observed counts deviate from expected frequencies in specific clusters and categories, following Sharpe’s (2015) recommendations for the identification of salient outliers. The results show that 97% of residuals for the r/college dataset and 98% of residuals for the research dataset are below the threshold of 2, suggesting that the clustering and expected frequencies are reliable, with minimal random variation (Desagulier, 2017).
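A Pearson residual is the difference between an observed and an expected count, divided by the square root of the expected count. The sketch below computes residuals for a small invented table and the share of cells that stay within the threshold of 2. The study reports residuals per marker, but the exact table layout is not given, so the rows-by-columns structure and the function names here are assumptions.

```python
import math


def pearson_residuals(table):
    """Matrix of (observed - expected) / sqrt(expected) for a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    residuals = []
    for i, row in enumerate(table):
        residual_row = []
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / total
            residual_row.append((observed - expected) / math.sqrt(expected))
        residuals.append(residual_row)
    return residuals


def share_within(residuals, threshold=2.0):
    """Proportion of cells whose absolute residual does not exceed the threshold."""
    flat = [value for row in residuals for value in row]
    return sum(abs(value) <= threshold for value in flat) / len(flat)


if __name__ == "__main__":
    # Hypothetical counts: rows = clusters, columns = appraisal categories.
    toy_table = [
        [22, 18, 20],
        [19, 21, 20],
        [40, 10, 10],
    ]
    residuals = pearson_residuals(toy_table)
    for cluster, row in enumerate(residuals):
        print(f"cluster {cluster}:", [round(value, 2) for value in row])
    print(f"share of cells with |residual| <= 2: {share_within(residuals):.0%}")
```

The squared residuals add up to the chi-square statistic, so cells with large absolute residuals are the ones that contribute most to a significant result.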

In the r/college dataset, residuals above 2 are observed in markers such as positive, significant and useful in cluster 4, or but and not in cluster 1 (Fig. 8). Outliers with high residuals include better (9.08), not (8.19) and just (7.23), demonstrating considerable contextual variability. Attitude markers display the highest positive residuals, which points to notable evaluative language use among students. Markers such as better or not denote a heightened emotional investment and strong views regarding feedback.

Top 50 appraisal markers based on the residual analysis (r/college dataset)

In the research dataset, prominent outliers include good (12.19), can (5.97) and very (6.57), revealing complex discourse patterns that require further contextual analysis (Fig. 9). Residuals close to zero, such as happy (0.65) and glad (0.53) in the r/college dataset, or according to (0.12) and unprecedented (0.12) in the research dataset, represent markers that are well-aligned with expected frequencies. The prominence of markers such as good or better suggests a predominant focus on critical evaluation among the researchers.

Top 50 appraisal markers based on the residual analysis (research dataset)

The results confirm previous findings from the LLR-based keyness analysis, PCA and k-means clustering, which identified overlapping appraisal markers. Nevertheless, it needs to be noted that each of these methods serves a different purpose and reveals specific aspects of the data. The LLR keyword analysis has detected key appraisal markers that are strongly associated with the discourse domain of feedback, which may be linked to frequently discussed sub-topics (Gabrielatos, 2018). However, this approach might overlook less frequent yet contextually prominent markers. For example, critical in the research dataset could imply a certain type of feedback but might also reflect its common use in academic writing, thus demonstrating the need for further contextualisation within the wider discursive practices of the register.

PCA and k-means clustering, on the other hand, have revealed underlying patterns in terms of appraisal marker use, such as the clustering of (semi-)modals in the research dataset, which marks a preference for necessity and obligation modals (should, need to). Identical results, such as the prominence of just and want in the r/college dataset or not and but in the research dataset, further confirm the patterns revealed by the previously applied quantitative techniques. However, it is worth stressing that the statistical methods lead to complementary insights, with residual analysis revealing deviations in terms of marker distribution.

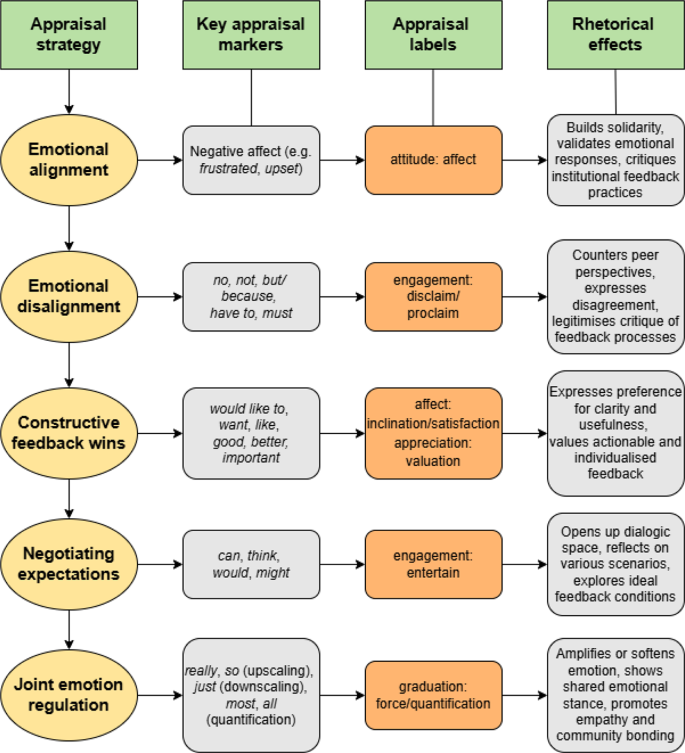

Student Standpoint on Feedback

Emotional Alignment

Most students who share posts and make comments on r/college appear to build solidarity with peers. Their main communicative goals include challenging established institutional feedback practices, demanding change, sharing past feedback experiences and expressing mixed feelings, while simultaneously exploring possibilities for improvement (Fig. 10). This shared appraisal strategy of emotional alignment is evident through a strong reliance on attitudinal resources, especially when discussing the emotional impact of feedback, which, according to Little et al. (2023), needs to be seen as “inseparable from the process itself,” underlining that “the goal should not be to overcome these feelings but to understand that they are resources from which students can learn” (p. 48). Concrete examples linking appraisal markers to students’ main rhetorical strategies are provided in the following subsections.

Emotional Disalignment

Students frequently select disclaim: deny and proclaim: pronounce resources (no, because, have to) to counter other users’ insights and criticise overly complex feedback processes, as also noted by Carless and Boud (2018). No appears in outright rejections or denials, such as “There has been no opportunity to contest or review any grades.” Because is used to justify perceived injustices, framing unmet expectations as legitimate complaints: “I don’t even want to do the assignments in this course because I know they’re going to be graded unfairly.” Have to is used to outline individual expectations: “I don’t think I have to set up an appointment with her for feedback.”

Constructive Feedback Wins

A strong desire for clarity and detail emerges through students’ use of affect: inclination (would like to, want) and affect: satisfaction (like) markers underlining the importance of what follows in terms of preferences: “I love feedback and I need it because I want to improve my papers.” This shows a clear penchant for academic self-development, which is a crucial skill for students (Nicol & McCallum, 2022). Like expresses satisfaction with some of the existing feedback practices: “I like when the professor takes the time to mention a specific about *why* it was a 100.” Appreciation: valuation markers (good) also frequently appear and reveal a need for feedback that is actionable. Positive evaluations of feedback are consistently situated within wider educational goals. One student states: “The feedback is generally good and shows the professor has an understanding of what you need to improve,” which reiterates the value the learners seem to attach to detailed guidance. Even though positive evaluations abound, many students regularly critique existing feedback practices, especially regarding the lack of explicitness: “It’s better to ask for more specific feedback so you can improve on future assignments.” This demonstrates a pronounced preference for individually tailored feedback over vague, generic comments.

Negotiating Possibilities and Expectations

Apart from expressing emotion and using evaluative language, the students also engage dialogically with others and seem to favour entertain resources (can, think, would, will). Notably, most of the learners actively seek feedback and acknowledge its importance for academic success. Their mixed standpoint, which appears to alternate between critique and idealisation, becomes especially evident in more expansive engagement contexts, where they reflect upon possible outcomes and hypothetical scenarios: “I hear audio feedback can help students get a sense of what the professor is saying.” Many students seem to adopt a proactive approach to enhancing feedback quality through the use of entertain markers such as think or would to engage with external viewpoints and speculate about educators’ emotional behaviour and responses: “I think most instructors would be happy to receive a short note of appreciation.” Will frequently articulates a sense of unmet promise: “In our syllabus, it states that the professor will return all assignments with feedback within 3 weeks.”

Joint Emotion Regulation

Students continuously adjust their attitudinal and engagement stances through the selection of upscaling (really, so) and downscaling (just) resources. Graduation markers of the sub-type of force are commonly used to amplify the intensity of evaluations: “It was so good she thought a student wouldn’t be able to pull it off.” Students’ emotions tend to escalate over plagiarism accusations. Their negative evaluations mostly concentrate on perceived shortcomings in existing feedback practices. Upscaling devices (really) aid in airing frustration and reveal the emotional burden of negative or undesirable feedback: “The fact that I can’t even ask one question without being continually admonished by my professor is really discouraging for me.” Frequent use of upscaling resources also reinforces the students’ demand for change. Hedges (just), on the other hand, help avoid direct confrontation and stimulate further discussion and bonding (Zappavigna & Martin, 2018): “There’s just this satisfying feeling we get whenever we read their feedback…” Just reduces the emotional weight of this personal statement and the use of we builds inclusivity. Force: quantification resources (most, all) serve a similar purpose and validate a shared emotional standpoint: “I would love to thank him, but my classmates all say I should not respond to feedback like that.”

Main appraisal strategies adopted by students

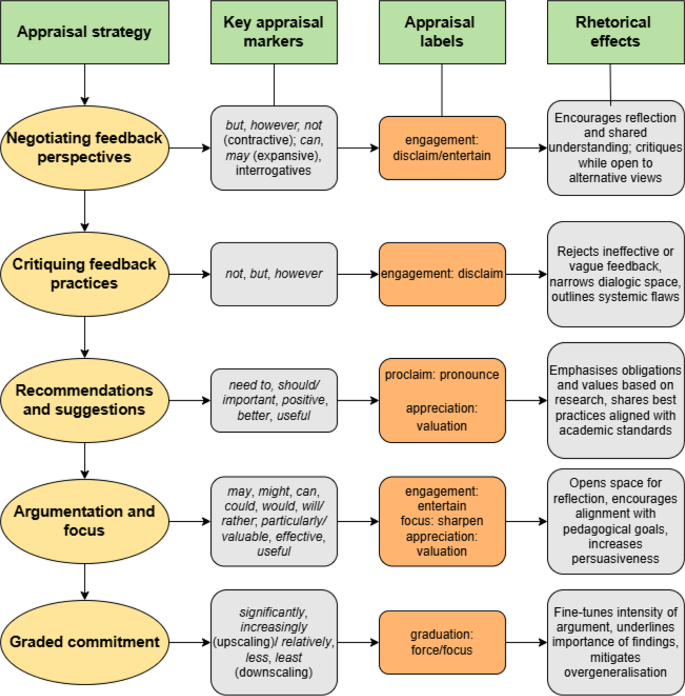

Academic Standpoint on Feedback

Negotiating Feedback Perspectives

Researchers position themselves regarding feedback literacy through discussing effective and ineffective feedback approaches with other scholars (and readers) while encouraging further reflection and consensus within the academic community (Fig. 11). They achieve this by combining both dialogically contractive (e.g. but, however) and expansive (e.g. can, may) engagement resources, carefully balancing critical evaluation of external perspectives with the assertion of their own views: “This can lead to better clarity of results, as discussing the whole concept of feedback literacy but only reporting on certain outcomes leaves room for confusion.” Scholars also frequently express uncertainty regarding current conceptualisations of feedback and present solutions for improvement based on shared pedagogical values: “Is feedback literacy a literacy? Perhaps the answer to this question might be found by exploring the boundaries between feedback literacy and other similar concepts.” The authors persuasively argue that feedback is relevant and conducive to students’ academic development, which happens to correlate with the students’ strong disposition for self-improvement: “A closer investigation of comparison processes afforded by ipsative feedback can refine our understanding of internal feedback.” In the following sections, more examples are provided for each of the researchers’ appraisal strategies.

Critiquing Feedback Practices

Researchers tend to select contractive engagement markers to reject vague, ineffective or misaligned feedback, narrowing the dialogic space, in an attempt to convince readers to adopt the same standpoint. Disclaim: deny resources (not) are used to explicitly deny the effectiveness of certain practices: “Thus, the feedback was not directive in a manner that informed the students of what points required correction.” Disclaim: counter devices (but, however) contrast prior research with proposed directions and accentuate a need for innovative methodological approaches. By combining these engagement resources, researchers seek to identify gaps or failures in existing feedback or research practices: “However, such a scenario is not only about ‘negative attitudes’ but about systemic discrimination.”

Recommendations and Suggestions

Proclaim: pronounce resources (need to, should) frequently co-occur with be and are followed by desired qualities and guidelines for refinement and alignment with contextual factors: “Feedback processes need to be understood and barriers to their use mitigated” or “Most importantly, it should be responsive to students’ cultural background.” However, the obligation and necessity (semi-)modals outline what is required or needed based on research findings, rather than reinforcing rules and regulations.

Positive appreciation: valuation markers are used to encourage improved feedback practices, often by relating them to abstract concepts: “It may be more profitable for students to experience signature feedback practices authentic to the discipline.” By encouraging alignment with disciplinary norms, researchers evoke shared values and contribute to the perceived relevance of feedback. Appreciation markers (better) evaluate feedback quality and its effects: “It is possible that this perceived increase in fairness was caused by the increased transparency in marking…, with students having a better understanding of why they received the overall summative mark.” Important is commonly selected to outline what is deemed essential for effective feedback, such as dialogical interaction and critical (self-)reflection: “Self-assessment interventions with explicit feedback on students’ performance showed a significantly larger effect than those without explicit feedback, indicating that the availability of external feedback provides important scaffolding for successful self-assessment.” Other appreciations (positive) attach value to specific attributes of feedback, such as its psychological and emotional benefits for learners: “Positive emotions documented in the studies involve curiosity, satisfaction, awe, gratitude and positivity.” More practical aspects of feedback are also regularly foregrounded and tend to assume universal applicability: “A number of interviewees remarked that peer feedback activities provided useful information for writing improvement.”

Argumentation and Focus

Researchers make extensive use of expansive engagement resources such as may, might, can, could, would and will, while considering hypothetical scenarios, as in: “Some students may prefer the feedback or recommendations without specifically mentioning their weak areas…” Here, may showcases the variability in student feedback preferences. Similarly, can, could and would tend to portray feedback as contingent on educators’ and students’ actions, raising opportunities for positive change: “Teachers can tap into behavioral, affective, and cognitive dimensions of student engagement to facilitate active redrafting and revision.” Several researchers propose peer review: “We suggest that peer feedback could be used as an effective alternative to teacher feedback…”.

Combinations of entertain and appreciation: valuation and sharpening resources seem to be highly common as well and contribute to a wider discursive objective of opening up the dialogic space to allow for reflection, while encouraging collective alignment with academic standards (Hood, 2022): “Research and development projects focused on developing teacher and student feedback literacy in tandem would be particularly valuable.” Various entities (e.g. research) and practices, including feedback, are evaluated as valuable in claims supported by positive appreciation, acknowledging the complexity of such processes, whilst also inviting ongoing discussion. This helps link researchers’ individual arguments to the academic community’s collaborative ethos. Imbued with positive attitude, it also enhances persuasiveness and appeals to a shared commitment to improvement. Focus: sharpen resources (rather) specify researchers’ preferences, used as evidence for views expressed regarding feedback: “in collaborative learning contexts, adaptive feedback rather than static seems to effectively compensate for collaboration costs.” Will is mostly selected to predict positive outcomes when following academics’ recommendations: “A well-structured feedback design making good use of different feedback practices for different task purposes will help make a very effective case.” Feedback is frequently presented as actionable, with clear expectations for its role in stimulating academic growth, which is reinforced here by the coupling of a focal entity (feedback design), positive valuation (effective) and upscaling (very).

The scholars tend to adjust the intensity of their authorial stances by amplifying or mitigating positive and negative appreciations. Upscaling graduation resources (increasingly, significantly) are mostly used to underline the importance of observations or research findings: “Therefore, peer and self-feedback were significantly more effective than teacher feedback in enhancing behavioral engagement.” Downscaling devices (relatively, less, least), on the other hand, reduce the strength of their evaluations: “With regard to the students’ presentations not improving as a function of received peer feedback it should be noted that all students gave relatively good trial presentations” and help avoid generalisations: “Self-level feedback was the least effective form of feedback of the four levels, with the information too vague and non-specific to be useful for the learner.”

Main appraisal strategies adopted by researchers

Further Discussion and Conclusion

Register as a Predictor of Linguistic Variation

The findings demonstrate the impact of register variables such as situational context or targeted audience on the selection of appraisal markers in discourse surrounding feedback, establishing register as a powerful predictor of appraisal variation, even within a shared discourse domain. The Reddit sub-register (r/college corpus) is characterised by a prevalence of attitudinal markers and prioritises the expression of emotion, while the academic writing register (research corpus) prefers combinations of engagement and graduation resources for the purpose of critical argumentation and focus. This confirms Biber et al.’s (2024) claim that oral online registers tend to concentrate on personal concerns and opinions, whereas written registers mostly present propositional information, with limited acknowledgement of individual feelings or audience interaction. Nevertheless, education-focused researchers do express a particular implicit attitude towards (un)desirable types of feedback in their journal articles. In the r/college corpus, regular couplings of feedback with attitudinal markers frame comments to more explicitly elicit affectual alignment. However, evoked attitude also plays an important role in this type of discourse, with inferred meanings, emojis, videos, images and the like further contributing to affiliation (Logi & Zappavigna, 2023). These examples of multimodal layering illustrate the impact of mode, as the Reddit platform affords the use of visuals and paralinguistic resources to strengthen interpersonal stance in asynchronous online discussions.

The students’ expansive engagement style stimulates further sharing of feedback experiences among peers. In contrast, academic discourse relies more on evidence-based assessments to create alignment through shared scholarly and pedagogical values, favouring hedging (e.g., may, could) and precision markers (e.g., particularly) to encourage readers’ acceptance of findings.

The participatory nature of the Reddit sub-register further leads to greater adaptation to the immediate context of the social media platform and other students’ posts and comments. Conversely, the research register remains bound by disciplinary conventions, resulting in relatively stable though context-sensitive variability. By blending oral and written appraisal markers, the r/college corpus challenges traditional register dichotomies, with attitude emerging as the main driver of variation, while engagement remains dominant in the research corpus. Both registers employ contractive engagement resources to assert various claims and counter others’ propositions, though with differing degrees of certainty.

These register-specific appraisal marker selections clearly foreground the situated character of evaluative language. The key patterns that have been identified in the r/college corpus describe students’ subjective experiences of feedback, their efforts to align or disalign with peers and their wider communicative goals within the higher education context. Analysing students’ reflections as a digital sub-register has allowed a detailed comparison between institutionalised academic writing, a register that has already attracted a considerable amount of research attention, and a relatively new, oral-like social media text type. In line with Fuoli and Bednarek (2022), who found that evaluative language in interactive online registers, such as Twitter, is influenced by emotional and situational demands, the present study’s findings reinforce the importance of considering contextual parameters. Apart from tenor, register variables such as field and mode also condition writers’ semantic choices and the probabilistic distribution of appraisal markers (Matthiessen, 2015).

Feedback Perspectives

In the two corpora, feedback is conceptualised, critiqued, appreciated and adopted as both a heterogeneous dialogic and sociomaterial practice that tends to be influenced by interpersonal and situational factors such as student expectations, academic norms, institutional context and mutual goals. Students on r/college appear to recognise its critical role in their academic success and express a keen desire for critique that is clear, fair and actionable. These learner preferences seem to correlate with a focus on self-improvement that sees detailed feedback as instrumental in targeting areas of individual growth. However, the students’ reflective standpoint also juxtaposes high expectations with dissatisfaction over perceived shortcomings, such as vague or absent comments (Carless & Winstone, 2020; Rowe, 2017). Emotions appear to play a strategic role, with discussions encapsulating a spectrum of feelings ranging from sadness and frustration to desires for the future and hopefulness. In the r/college corpus, this emotion-loaded language frequently manifests through the combination of various affective expressions and upscaling, such as really low/bad/good, so frustrated/stressed/excited or hella grateful. While concentrating on the emotional impact of feedback, comments frequently shift from individual concerns to more systemic issues. The students’ emotional engagement with the topic affirms the interpersonal nature of feedback as a vital communicative process that moulds their burgeoning academic identity. Rather than being merely reactive, however, their affective language use is strategic in nature, as they purposefully call for fairer, more transparent feedback practices, which implies that their emotionality constitutes a form of rhetorical agency.

The researchers, in contrast, seem to adopt a critical, evaluative standpoint and analyse feedback practices to identify strengths and gaps while proposing evidence-based improvements. They often criticise feedback for being insufficiently directive and culturally unresponsive and hypothesise about the positive impact of better-designed feedback systems, expressing certainty regarding their effectiveness in achieving sustainable learning outcomes. However, while acknowledging the complexity of feedback and its unintentional effects, the researchers’ appraisal choices reveal a high degree of tentativeness, whereas the students’ confident assertions clearly denote what effective feedback should look like. The academic discussions are strongly hedged and tend to focus on the unpredictable effects of feedback on student behaviour and emotions, which invokes the complexity of designing universally effective feedback practices.

Feedback attributes such as clarity, timeliness and scaffolding potential appear central to the academic evaluations, which validates their role in student achievement. Enhanced emotional response and changed learning behaviour, including self-development, emerge as shared goals between students and scholars and can therefore be considered critical feedback objectives. Both groups acknowledge the constructive effects of feedback. Learners emphasise its emotional impact, whereas researchers mainly propose theorised approaches. Rowe (2017) describes the emotional labour that is inherent in feedback, gauging its potential to reduce student anxiety and enhance achievement. Yet, the students are the ones who narrate their lived feedback experiences, without even being aware of engaging in this form of labour, which shows how researchers’ conceptualisations of feedback may not always represent the breadth of its affective complexity.

Conclusion

This study set out to investigate how the use of appraisal resources varies between two feedback-related corpora. The analysis has unveiled how students’ and researchers’ appraisal selections link to implicit underlying values, rhetorical goals and discursive norms in each specific context. By linking Appraisal theory to register and employing LLR-based keyness analysis, the study contributes to a deeper understanding of how interpersonal meanings are patterned between different genres. Context, audience and mode have been found to significantly shape appraisal strategies in feedback-related discourse, as the r/college corpus prioritises affect and shared experience, while the research corpus prefers epistemic caution and disciplinary consensus. Despite these divergences, both datasets show a persistent preoccupation with clarity and actionable feedback. Various shared engagement resources (e.g. may, think) reveal important commonalities in terms of achieving dialogical alignment, albeit realised through the selection of different interpersonal devices.

It is evident that, in the two corpora, feedback is construed as a relational and evaluative practice that encapsulates both emotional and epistemic dimensions, which underlines its twofold role as a discursive site of interpersonal negotiation and critique. Even though students are typically seen as novice participants in the wider debate surrounding ‘best’ feedback practice, they seem to hold clear ideas about how it should be delivered. In the r/college corpus, their emotional language use, typically viewed as merely reactive, functions as a persuasive tool to critique feedback systems and practices, inviting researchers to acknowledge them as full-fledged partners in the feedback design process.

It should be noted that the study has several shortcomings. Since the investigation was limited to English language data, the findings may not be readily generalisable to non-English linguistic environments or institutional contexts. Furthermore, the expression of student stance towards feedback on r/college, while rich in terms of lived learner experiences, is also influenced by other situational factors that are inherent to social media platforms, such as anonymity or user response (e.g. upvoting). Future research might explore how appraisal strategies adopted by students and researchers evolve over time and could incorporate interview or survey data to triangulate learner and academic attitudes regarding feedback.

The study’s findings support Carless and Boud’s (2018) conceptualisation of feedback as a dynamic process, in which students actively interpret and respond to evaluative input. In both corpora, effective feedback is understood as an ongoing balancing act between emotional sensitivity and clarity of purpose. Most importantly, however, the findings suggest the value of co-designing improved feedback processes, which would involve a careful unpacking of the layered meanings of common descriptors such as effective, good, clear, bad or useful. Such co-development could help reconcile individual learner expectations with institutional standards, diverse educator goals and the broader aim of encouraging self-efficacy. Based upon the current investigation, feedback that both evokes emotional resonance and provides clear, actionable insights for self-realisation seems to be best positioned to enhance student resilience and academic growth.

Notes

-

While the subreddit is mainly used by students, a few rare contributions appear to originate from tutors. However, the discourse still overwhelmingly represents student concerns and expectations regarding feedback, which form the main focus of the investigation.

References

-

Ajjawi, R., & Boud, D. (2017). Researching feedback dialogue: An interactional analysis approach. Assessment & Evaluation in Higher Education, 42(2), 252–265. https://doi.org/10.1080/02602938.2015.1102863

-

Alam, M., & Singh, P. (2021). Performance feedback interviews as affective events: An exploration of the impact of emotion regulation of negative performance feedback on supervisor–employee dyads. Human Resource Management Review, 31(2), 100740. https://doi.org/10.1016/j.hrmr.2019.100740

-

Argamon, S. E. (2019). Register in computational language research. Register Studies, 1(1), 100–135. https://doi.org/10.1075/rs.18015.arg

-

Baker, P. (2018). Language, sexuality and corpus linguistics: Concerns and future directions. Journal of Language and Sexuality, 7(2), 263–279. https://doi.org/10.1075/jls.17018.bak

-

Baker, P., Brookes, G., & Evans, C. (2019). The language of patient feedback: A corpus linguistic study of online health communication. Routledge.

-

Bednarek, M. (2024). Topic modelling in corpus-based discourse analysis: Uses and critiques. Discourse Studies, 0(0). https://doi.org/10.1177/14614456241293075

-

Bednarek, M., Schweinberger, M., & Lee, K. (2024). Corpus-based discourse analysis: From meta-reflection to accountability. Corpus Linguistics and Linguistic Theory, 20(3), 539–566. https://doi.org/10.1515/cllt-2023-0104

-

Biber, D. (1988). Variation across speech and writing. Cambridge University Press. https://doi.org/10.1017/CBO9780511621024

-

Biber, D. (2006). University language: A corpus-based study of spoken and written registers. John Benjamins.

-

Biber, D., Conrad, S., & Reppen, R. (2021). Corpus linguistics: Investigating language structure and use. Cambridge University Press.

-

Biber, D., & Horowitz, R. (2023). Writing and speaking. In R. Horowitz (Ed.), The Routledge international handbook of research on writing (2nd ed., pp. 124–138). Routledge. https://doi.org/10.4324/9780429437991-10

-

Biber, D., Larsson, T., & Hancock, G. R. (2024). Dimensions of text complexity in the spoken and written modes: A comparison of theory-based models. Journal of English Linguistics, 52(1), 65–94. https://doi.org/10.1177/00754242231222296

-

Biber, D., Reppen, R., Schnur, E., & Ghanem, R. (2016). On the (non) utility of Juilland’s D to measure lexical dispersion in large corpora. International Journal of Corpus Linguistics, 21(4), 439–464. https://doi.org/10.1558/jrds.33066

-

Boud, D., & Dawson, P. (2021). What feedback literate teachers do: An empirically-derived competency framework. Assessment & Evaluation in Higher Education, 48(2), 158–171. https://doi.org/10.1080/02602938.2021.1910928

-

Bro, R., & Smilde, A. K. (2014). Principal component analysis. Analytical Methods, 6(9), 2812–2831. https://doi.org/10.1039/c3ay41907j

-

Carless, D. (2019). Longitudinal perspectives on students’ experiences of feedback: A need for teacher–student partnerships. Higher Education Research & Development, 39(3), 425–438. https://doi.org/10.1080/07294360.2019.1684455

-

Carless, D., & Boud, D. (2018). The development of student feedback literacy: Enabling uptake of feedback. Assessment & Evaluation in Higher Education, 43(8), 1315–1325. https://doi.org/10.1080/02602938.2018.1463354

-

Carless, D., & Winstone, N. (2020). Teacher feedback literacy and its interplay with student feedback literacy. Teaching in Higher Education, 28(1), 150–163. https://doi.org/10.1080/13562517.2020.1782372

-

Cunningham, K. J. (2019). How language choices in feedback change with technology: Engagement in text and screencast feedback on ESL writing. Computers & Education, 135, 91–99. https://doi.org/10.1016/j.compedu.2019.03.002

-

Cunningham, K. J., & Link, S. (2021). Video and text feedback on ESL writing: Understanding attitude and negotiating relationships. Journal of Second Language Writing, 52, 100797. https://doi.org/10.1016/j.jslw.2021.100797

-

Davies, J. A. (2023). In search of learning-focused feedback practices: A linguistic analysis of higher education feedback policy. Assessment & Evaluation in Higher Education, 48(8), 1208–1222. https://doi.org/10.1080/02602938.2023.2180617

-

Desagulier, G. (2017). Corpus linguistics and statistics with R: Introduction to quantitative methods in linguistics. Springer Nature. https://doi.org/10.1007/978-3-319-64572-8

-

Divjak, D. (2019). Frequency in language: Memory, attention and learning. Cambridge University Press. https://doi.org/10.1017/9781316084410

-

Egbert, J., Biber, D., Keller, D., & Gracheva, M. (2024). Register and the dual nature of functional correspondence: Accounting for text-linguistic variation between registers, within registers, and without registers. Corpus Linguistics and Linguistic Theory, 20(3), 505–538. https://doi.org/10.1515/cllt-2024-0011

-

Field, A. P. (2024). Discovering statistics using IBM SPSS statistics (6th ed.). SAGE.

-

Fuoli, M., & Bednarek, M. (2022). Emotional labor in webcare and beyond: A linguistic framework and case study. Journal of Pragmatics, 191, 256–270. https://doi.org/10.1016/j.pragma.2022.01.016

-

Fuoli, M., & Hommerberg, C. (2015). Optimising transparency, reliability and replicability: Annotation principles and inter-coder agreement in the quantification of evaluative expressions. Corpora (Online), 10(3), 315–349. https://doi.org/10.3366/cor.2015.0080

-

Gabrielatos, C. (2018). Keyness analysis: Nature, metrics and techniques. In C. Taylor & A. Marchi (Eds.), Corpus approaches to discourse (1st ed., pp. 225–258). Routledge. https://doi.org/10.4324/9781315179346-11

-

Gravett, K. (2020). Feedback literacies as sociomaterial practice. Critical Studies in Education, 63(2), 261–274. https://doi.org/10.1080/17508487.2020.1747099

-

Gries, S. T. (2021). Analyzing dispersion. In M. Paquot & S. T. Gries (Eds.), A practical handbook of corpus linguistics (pp. 99–118). Springer International Publishing.

-

Halliday, M. A. K. (1993). Quantitative studies and probabilities in grammar. In M. Hoey (Ed.), Data, description, discourse: Papers on the English language in honour of John McH. Sinclair. Harper Collins.

-

Hattie, J., & Timperley, H. (2007). The power of feedback. Review of Educational Research, 77(1), 81–112. https://doi.org/10.3102/003465430298487

-

Hood, S. (2022). Graduation in research writing: Managing the dual demands of objectivity and critique. In D. Caldwell, J. S. Knox, & J. R. Martin (Eds.), Applicable linguistics and social semiotics: Developing theory from practice (1st ed.). Bloomsbury Publishing Plc. https://doi.org/10.5040/9781350109322.ch-1

-

Hunston, S. (2013). Systemic functional linguistics, corpus linguistics, and the ideology of science. Text & Talk, 33(4–5), 617–640. https://doi.org/10.1515/text-2013-0028

-

Hyland, K. (2018). Metadiscourse: Exploring interaction in writing. Bloomsbury Publishing.

-

Hyland, K., & Hyland, F. (2019). Feedback in second language writing: Contexts and issues. Cambridge University Press.

-

Jolliffe, I. T., & Cadima, J. (2016). Principal component analysis: A review and recent developments. Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences, 374(2065), 20150202. https://doi.org/10.1098/rsta.2015.0202

-

Kassambara, A. (2017). Practical guide to cluster analysis in R: Unsupervised machine learning (Vol. 1). STHDA.

-

Lander, J. (2015). Building community in online discussion: A case study of moderator strategies. Linguistics and Education, 29, 107–120. https://doi.org/10.1016/j.linged.2014.08.007

-

Larsson, T., Biber, D., & Hancock, G. R. (2024). On the role of cumulative knowledge building and specific hypotheses: The case of grammatical complexity. Corpora (Online), 19(3), 263–284. https://doi.org/10.3366/cor.2024.0314

-

Levshina, N. (2015). How to do linguistics with R: Data exploration and statistical analysis. John Benjamins Publishing Company.

-

Little, T., Dawson, P., Boud, D., & Tai, J. (2023). Can students’ feedback literacy be improved? A scoping review of interventions. Assessment & Evaluation in Higher Education, 49(1), 39–52. https://doi.org/10.1080/02602938.2023.2177613

-

Llinares, A., & Evnitskaya, N. (2021). Classroom interaction in CLIL programs: Offering opportunities or fostering inequalities? TESOL Quarterly, 55(2), 366–397. https://doi.org/10.1002/tesq.607

-

Lloyd, S. (1982). Least squares quantization in PCM. IEEE Transactions on Information Theory, 28(2), 129–137. https://doi.org/10.1109/TIT.1982.1056489

-

Logi, L., & Zappavigna, M. (2023). A social semiotic perspective on emoji: How emoji and language interact to make meaning in digital messages. New Media & Society, 25(12), 3222–3246. https://doi.org/10.1177/14614448211032965

-

Macnaught, L., Bassett, M., van der Ham, V., Milne, J., & Jenkin, C. (2022). Sustainable embedded academic literacy development: The gradual handover of literacy teaching. Teaching in Higher Education, 29(4), 1004–1022. https://doi.org/10.1080/13562517.2022.2048369

-

Martin, J., & White, P. R. R. (2007). The language of evaluation: Appraisal in English (1st ed.). Palgrave Macmillan UK. https://doi.org/10.1057/9780230511910

-

Matthews, K. E., Sherwood, C., Enright, E., & Cook-Sather, A. (2023). What do students and teachers talk about when they talk together about feedback and assessment? Expanding notions of feedback literacy through pedagogical partnership. Assessment & Evaluation in Higher Education, 49(1), 26–38. https://doi.org/10.1080/02602938.2023.2170977

-

Matthiessen, C. M. I. M. (2015). Halliday’s conception of language as a probabilistic system. In J. J. Webster (Ed.), The Bloomsbury companion to M. A. K. Halliday (pp. 203–241). Bloomsbury.

-

Matthiessen, C. M. I. M. (2019). Register in systemic functional linguistics. Register Studies, 1(1), 10–41. https://doi.org/10.1075/rs.18010.mat

-

Meishar-Tal, H., & Levenberg, A. (2021). In times of trouble: Higher education lecturers’ emotional reaction to online instruction during COVID-19 outbreak. Education and Information Technologies, 26(6), 7145–7161. https://doi.org/10.1007/s10639-021-10569-1

-

Molloy, E., Boud, D., & Henderson, M. (2019). Developing a learning-centred framework for feedback literacy. Assessment & Evaluation in Higher Education, 45(4), 527–540. https://doi.org/10.1080/02602938.2019.1667955

-

Nicol, D. (2020). The power of internal feedback: Exploiting natural comparison processes. Assessment & Evaluation in Higher Education, 46(5), 756–778. https://doi.org/10.1080/02602938.2020.1823314

-

O’Halloran, K. L., Tan, S., Pham, D. S., Bateman, J., & Vande Moere, A. (2018). A digital mixed methods research design: Integrating multimodal analysis with data mining and information visualization for big data analytics. Journal of Mixed Methods Research, 12(1), 11–30. https://doi.org/10.1177/1558689816651015

-

Raaper, R. (2018). Students’ unions and consumerist policy discourses in English higher education. Critical Studies in Education, 1–17. https://doi.org/10.1080/17508487.2017.1417877

-

Read, J., & Carroll, J. (2012). Annotating expressions of appraisal in English. Language Resources and Evaluation, 46, 421–447. https://doi.org/10.1007/s10579-010-9135-7

-

Rousseeuw, P. J. (1987). Silhouettes: A graphical aid to the interpretation and validation of cluster analysis. Journal of Computational and Applied Mathematics, 20, 53–65. https://doi.org/10.1016/0377-0427(87)90125-7

-

Rowe, A. D. (2017). Feelings about feedback: The role of emotions in assessment for learning. In D. Carless, S. M. Bridges, C. K. Y. Chan, & R. Glofcheski (Eds.), Scaling up assessment for learning in higher education (pp. 159–172). Springer Singapore. https://doi.org/10.1007/978-981-10-3045-1_11

-

Schweinberger, M. (2024). When natural language processing meets corpus linguistics: A computational approach to analyzing the Corpus of Oz Early English. In C. P. Amador-Moreno, D. Haumann, & A. Peters (Eds.), Digitally-assisted historical English linguistics (pp. 73–88). Routledge.

-

Sharpe, D. (2015). Your chi-square test is statistically significant: Now what? Practical Assessment, Research & Evaluation, 20(8). https://files.eric.ed.gov/fulltext/EJ1059772.pdf

-

Thorndike, R. L. (1953). Who belongs in the family? Psychometrika, 18(4), 267–276. https://doi.org/10.1007/BF02289263

-

Van Poucke, M. (2025). Negotiating the maze of menopause misinformation: A comparative analysis of stance in health influencer versus medical professional discourse. Atlantic Journal of Communication, 0(0), 1–23. https://doi.org/10.1080/15456870.2025.2453738

-

Winstone, N. E., Nash, R. A., Parker, M., & Rowntree, J. (2016). Supporting learners’ agentic engagement with feedback: A systematic review and a taxonomy of recipience processes. Educational Psychologist, 52(1), 17–37. https://doi.org/10.1080/00461520.2016.1207538

-

Zappavigna, M., & Martin, J. R. (2018). #Communing affiliation: Social tagging as a resource for aligning around values in social media. Discourse, Context & Media, 22, 4–12. https://doi.org/10.1016/j.dcm.2017.08.001

-

Zhang, Z. V., & Hyland, K. (2018). Student engagement with teacher and automated feedback on L2 writing. Assessing Writing, 36, 90–102. https://doi.org/10.1016/j.asw.2018.02.004

-

Zhang, Z. V., & Hyland, K. (2022). Fostering student engagement with feedback: An integrated approach. Assessing Writing, 51, 100586. https://doi.org/10.1016/j.asw.2021.100586

Funding

Open Access funding enabled and organized by CAUL and its Member Institutions

The author did not receive support from any organisation for the submitted work.

Ethics declarations