Abstract

Testimonial justice is the virtue guiding our assignment of credibility to a speaker. In the debate on testimonial injustice, it is often understood primarily as a tool to prevent discrimination and, more generally, the unfounded discounting of the credibility of certain speakers and groups. But the function of the virtue extends far beyond countering prejudice: It also serves as a safeguard against dishonest agents of various kinds. Such agents threaten the integrity of socio-epistemic processes, including the institutions of science and their communication with policymakers and the general public. We offer a classification of dishonest agents and translate them into a simple agent-based model inhabited by naive Bayesian agents. In this formal framework, we investigate the differential impact of varieties of dishonesty and the utility of testimonial justice in preventing or mitigating distortions as well as reliably identifying dishonest agents, given mildly benign conditions.

1 Introduction

Dishonesty is a pervasive feature of human epistemic activity. It grows from the adverse incentives in many socio-epistemic environments on which human epistemic agents rely, incentives that entice epistemic vices ranging from intellectual sloth to deceitfulness. Unfortunately, inquiry in the history and sociology of science has confirmed what these general observations suggest: The sciences themselves, often considered the pinnacle of human knowledge production, share our plight of being subject to all kinds of dishonest behavior, distortion and intentional confusion.

Dishonest agents interfere with the epistemic enterprise of science in two major ways: First, they influence the process of knowledge generation itself. This is done, for instance, through publication management, as documented by Sismondo (2009). This process is often subtle and relies more on selective funding of studies and tendentious interpretations than on outright fraud or other scientific misconduct. But at its heart is the desire to distort the stock of scientific knowledge, motivated by illegitimate non-epistemic incentives such as the commercial success of a new drug.

The second way concerns the communication of scientific knowledge to the general public and to policymakers who rely on it for decision-making (cf. Irzik and Kurtulmus 2024). Such dishonest interference has been investigated, for example, by Oreskes and Conway (2010) in their analysis of the Tobacco Strategy. This strategy also involved manipulations of the first kind, such as selective funding of favorable or distracting research, but notably also the deployment of questionable scientific expert witnesses in court cases and on advisory boards for policy-making. Once again, this need not be done by outright assertion of falsehood; it can be done by more subtle means that sow doubt and confusion on a topic on which a sound scientific consensus otherwise existed.Footnote1

These observations suggest that an epistemic agent needs, even for purported knowledge provided by the hallowed institutions of science, a critical capacity to assess the credibility of their sources. A fortiori, the same is true for generally less reliable sources of information. That much seems trivial, but as the discussion by Goldman (2011) shows, strategies to assess expert sources are not easy to come by, especially for laypeople. While we do not focus strictly on the lay-expert problem Goldman discusses, the point generalizes: Assessing a source's reliability is under most conditions a non-trivial problem, in particular when, as is often the case in the contemporary social environment, we are regularly confronted with sources about which we know next to nothing.

In this paper, we suggest that a form of the virtue of testimonial justice may serve as a safeguard against the influence of dishonest agents under fairly minimal requirements imposed on the epistemic agent.

Testimonial justice, as we will explain in the next section, was originally proposed as a remedy for the harms of testimonial injustice brought about by deflated credibility judgments due to identity prejudice (cf. Fricker 2007, Ch. 1.3). We formalize this virtue within a commonly used naive Bayesian model of repeated testimony to investigate the utility of testimonial justice as a defense against dishonest meddling with one's acquisition of knowledge.

As a side effect, we will also explore some questions about the family of models itself; it has been discussed under the label of expectation-based updating (Merdes et al. 2021), and its utility as a general strategy for handling testimonial reports has been called into question. Thus, we need to carefully specify the conditions under which this updating strategy confers epistemic benefit, measured both in terms of accurate beliefs on a subject matter and the successful recognition of dishonest agents.

The paper proceeds as follows: First, we give an account of testimonial exchanges and the notion of testimonial justice (Sect. 2). Section 3 develops our account of dishonesty and offers a classification of dishonest epistemic agents. With these theoretical considerations as a background, we present the formal model (Sect. 4) and the results of a set of simulation experiments (Sect. 5). Section 6 returns to the issue of scientific dishonesty and discusses some limitations of our formal approach.

2 Testimonial Exchange and the Virtue of Testimonial Justice

Theories of testimonial interactions are a cornerstone of social epistemology (see Lackey 2011).Footnote2 In the most basic sense, testimony is given when an agent utters a statement (called a testimonial report) on some proposition H, to an audience of one or more hearers, who hold the expectation that the utterance is meant to inform their state of belief on H, with the speaker also being aware of that expectation. However, the speaker does not actually have to intend to fulfill the expectation, as will be illustrated by the discussion of dishonest testimony below; but neither a situation in which the speaker is unaware of hearer expectations nor one where the hearers do not hold proper expectations should be considered a testimonial exchange, regardless of surface appearances.

For the purpose of this paper, we consider testimonial interactions in small groups on a small set of propositions. An individual act of testimony is assumed to be dyadic, with one speaker and one listener. Every agent takes the roles of both speaker and listener, and updates their state of belief conditional on the testimonial reports of the others. Note that the testimonial exchange does not include any further production of external evidence, but merely represents the purely social process of discussion within the group.Footnote3

We can interpret this either as an idealization to focus on the social aspect of knowledge acquisition, or consider cases in which external evidence gathering is not commonly performed to vet testimony. Such situations may occur in conference discussions, but also if we consider testimonial exchanges that include both experts and laypeople, the latter of which are limited in their capacity to gather additional evidence. Yet another application could be jury deliberation, where after hearing the case, there is specifically no more input to the process of collective judgment formation.

A crucial part of any testimonial exchange is the assessment of a speaker's credibility. In the epistemic context, credibility can be understood as reliability (cf. Bovens and Hartmann 2003, Ch. 3), and hence as a measure of how likely someone is taken to be to utter true statements. One can further differentiate reliability in this sense into competence and honesty: A competent agent might still tell falsehoods due to a lack of honesty, whereas an honest agent may do so out of incompetence.

From the point of view of a listener, evidence can be specific enough to differentiate between dishonesty and incompetence. For instance, a known conflict of interest in a speaker is evidence of possible disingenuousness, but not of incompetence. However, in the limited context we describe, where the listener has only testimonial reports on which to base their judgment of reliability, the differentiation is difficult to support.Footnote4 From the speaker's point of view, it does make sense in our context to distinguish between the two dimensions of unreliability: A speaker may be more or less competent, depending on the evidence they were able to collect (the accuracy of their state of belief constitutes their competence at a given point in time), and, independently of their own state of belief, they may behave in a variety of dishonest ways in their speech.

Given this description of testimonial exchanges, it seems easy to formulate the concept of testimonial injustice (cf. Fricker 2007, 17ff.): The hearer of a testimonial report acts unjustly if they do not assign the speaker their actual reliability as their credibility. If we wished to follow Fricker, the focus would be on deflated assignments in particular (though this is a controversial issue; cf. Davis 2016). Furthermore, for her the paradigmatic case of testimonial injustice is grounded in identity prejudice, whereas we focus on what she calls ad hoc testimonial injustice: a more spontaneous phenomenon that does not require a foundation of social norms and institutions to emerge.

But things are not quite so simple. There are many cases in which an agent is, through no fault of their own, in no position to properly assess a speaker's reliability (see Fricker 2007, 100). This may be due to bad conditions in the surrounding society but, more to the point, it can be the result of lacking or misleading evidence about the reliability of a given speaker. For our purposes, it will be sufficient to modify the explication as follows: An agent commits a testimonial injustice against a speaker if their assignment of credibility is not in accordance with the available evidence.

This explication still does not capture all aspects of the full conception of testimonial injustice. It does not state when and to what degree an agent is responsible for their lack of evidence on a speaker's reliability. It is also silent on whether there is an obligation to start one's learning process at a particular level or, as we will put it in the model, whether there are constraints on the priors. Nevertheless, for the purposes of our inquiry this characterization suffices.

Thus, testimonial justice is the virtue that enables an agent to make just assignments of credibility and avoid unjust ones, conditional on the available evidence and expressed in terms of source reliability. Minimally, it requires the agent to converge in the long run, given enough representative evidence, to an assignment of credibility that aligns with the speaker's reliability. Given this characterization, two remarks are in order. First, an agent's reliability may of course change over time. For instance, eyewitness testimony may be more reliable shortly after the events than years later. Or an agent may simply become better informed on a given topic or lose an incentive to lie. The assumption that an agent possesses an underlying property of reliability therefore needs to be qualified temporally. Second, a similar point holds for variation across topics; an agent might be a highly reliable source of information on quantum mechanics, but entirely useless as a source on current political events. Thus, the ascription of reliability ought to be topic-sensitive as well, so that an agent need not commit to a single global assignment of credibility.

The virtue of testimonial justice is commonly offered as a safeguard against discriminatory treatment and prejudicial assignments of credibility (Fricker 2007, Ch. 4.1). But, given the above formulation, it may also play a quite different role, namely to protect against dishonest speakers. To put it negatively, an agent who lacks the virtue may not only unduly deflate the credibility of certain groups on the basis of, for instance, identity prejudice, but also assign excess credibility to agents who intentionally give testimonial reports that disagree with their genuine beliefs. This is quite different from other worries about excess credibility, such as those raised by Medina (2011), who examines how positive prejudices that confer excess credibility relate to negative prejudices that deflate it. The concern here is instead with appropriately assessing reliability in a testimonial exchange, given the possibility of dishonest speakers. It speaks to the epistemic interest of the listener, rather than the source.Footnote5 Thus, we next proceed to a characterization of dishonesty.

3 Dishonesty

Dishonesty can be understood in contrast to truth-telling. For our purposes, an agent is considered truth-telling when they provide testimonial reports in accordance with their beliefs. Note that this characterization implies that a truth-teller may utter false statements, as long as they genuinely hold a false belief about the content of their utterance. Accordingly, a dishonest agent is one who does not speak in accordance with their beliefs; in the following, we will distinguish three different types of dishonesty.

But before we do so, it is worth noting that there is another way of determining dishonesty: A dishonest agent could also be considered someone who intends to bring about false belief in their audience, implying that an honest agent would intend to induce true belief. Being a truth-teller is but one strategy to induce true belief, and one that is not always optimal. Vice versa, being dishonest in the first sense is not always an optimal strategy to induce false belief.

It is important to distinguish between these two notions of dishonesty; we will, in the following, construct the categories of dishonest agents in relation to their genuine expression of belief, but refer to their motivations when discussing possible explanations of dishonest testimony.Footnote6

In the following, we consider three types of dishonest agents, which we label the propagandist, the bullshitter and the pathological liar.

The propagandist is an agent who utters support for a proposition H regardless of their own state of belief. Thus, their testimony is entirely unmoored from their actual state of belief. The label is chosen to suggest a motivation for such behavior, namely the intention to induce belief in H in an audience. As with truth-telling, steadfastly uttering H may not always be the best strategy to achieve this end, so it is important to distinguish between the defining behavior and one possible explanation for it.

In the context of science, some individuals, but in particular corporate entities, have been identified as exhibiting such behavior. As Oreskes and Conway (2011) note, internal documents show that the tobacco industry was aware of the detrimental health effects of smoking while publicly denying those very facts.Footnote7

The bullshitter is similar to the propagandist in that they testify on H without regard for their beliefs. But unlike the propagandist, they have no strong preference for asserting H or ¬H. Instead, their behavior appears random from the point of view of the audience. Propagandizing and bullshitting can thus be understood as the two ideal endpoints of a continuum: At one end is an agent who steadfastly supports H, at the other one who supports and rejects H with equal likelihood, leaving an intermediate space of agents who provide random utterances with more or less bias towards or against H. What all agents on this continuum have in common is the irrelevance of their own state of belief for their utterances.Footnote8

Motivationally, though, the bullshitter is usually quite distinct, which justifies a separate category. The bullshitter (a label alluding to Frankfurt (2005), even though we will not represent all subtleties of his notion of bullshit) can be motivated by a desire simply to say something, choosing to say just anything as the most effortless option. But they may also strive for the approval of an audience, or intend to sow doubt in or confuse their listeners. In our formal analysis, we focus on instances of bullshit that do not require the agent to know anything about their interlocutors' states of belief, in order to maintain better commensurability of results. But motivations contingent on the hearer's state of belief can still be partially captured by a randomizer, assuming the dishonest agent is highly uncertain about their interlocutor's preferences.

The pathological liar is a quite different character, insofar as they very much speak conditionally on their belief. As a kind of mirror image of the truth-teller, they assert whatever is opposed to their genuine belief; so if they strongly believe that H is true, they will report ¬H, and vice versa.

The label pathological is applied to this type of dishonest agent, because one obvious, if seemingly absurd, motivation to act like this is to lie for the sake of lying, as a sort of parody of valuing truth-telling. But there are several other possible motivations for such behavior, some of which might be more readily intelligible as strategies to achieve rationally interpretable, if maybe nefarious, ends. For one, the behavior may be deployed as an alternative way of sowing confusion or doubt. As disagreement is commonly seen as at least some evidence for moderating one’s credence, an intentionally false statement can be used to sow doubt among rational agents who fail to perceive the dishonesty of the speaker. There is evidence of this behavior presented in Oreskes and Conway (2011), and it is clearly most effective in an environment where there is a presupposition of reliability. And while skepticism is an intellectual virtue that scientists pride themselves upon, it is also true that the status of scientists tends to afford a lot of trust. This trust is precisely what the pathological liar can most effectively exploit to bring about doubt or confusion.

As a side note: While we only consider the negative effects of dishonest agents within an epistemic community, some strategies that manipulate the sciences from outside do not depend on dishonest scientists at all (cf. Holman and Bruner 2017). Instead, the course and discourse of a scientific field can also be manipulated by selectively funding and promoting scientists who happen to honestly take a position desired by the outside entity.

Given the testimonial scenarios, the virtue of testimonial justice and our classification of dishonesty, we turn next to a formal representation.

4 Model Description

4.1 Bayesian Testimony

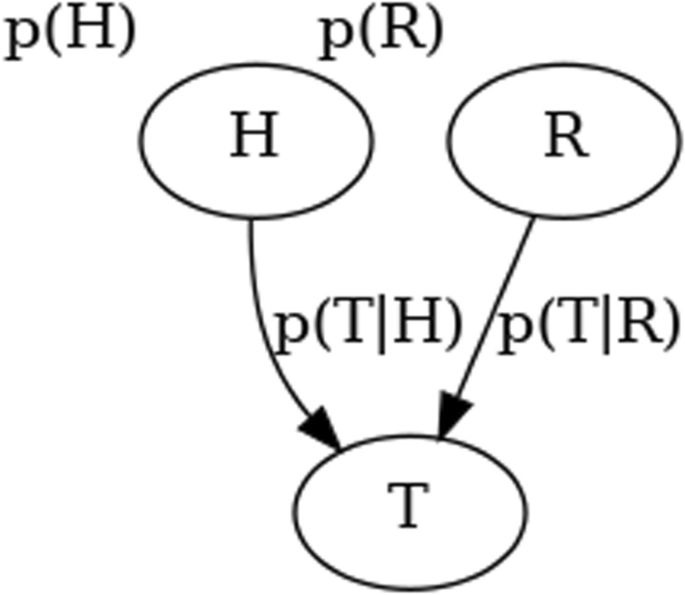

Our starting point is a fairly simple model of testimony introduced by Bovens and Hartmann (2003, Ch. 3) and further investigated and modified by Merdes et al. (2021). It describes the reception of testimony by a listener with three binary variables: the proposition of interest, H, on which the speaker is testifying; the reliability of the source, R, which takes the values perfectly reliable or randomizing, where a randomizer claims that H with probability a; and finally the actual testimonial report T, which states either that H is true or that it is false. The report is the only variable whose value becomes certain to the listener, from which they infer the posteriors for p(H) and p(R).Footnote9 The original model assumes the conditional independence relations depicted in the Bayes net in Fig. 1. The conditional probabilities, as in the original, are defined as

$$p(T \mid H, R) = 1, \qquad p(T \mid \neg H, R) = 0, \qquad p(T \mid H, \neg R) = p(T \mid \neg H, \neg R) = a.$$

Bayes net depicting the model variables and their conditional independence relations
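To make the single-report update concrete, the following is a minimal Python sketch of the Bovens-Hartmann posteriors under the conditional probabilities above. It assumes that H and R are independent a priori (as in the Bayes net), encodes a report asserting H as `True`, and uses a default a = 0.5, which is our assumption rather than a value stated here; the helper name `update_on_report` is hypothetical and this is not the authors' implementation.

```python
def update_on_report(p_h: float, p_r: float, says_h: bool, a: float = 0.5) -> tuple[float, float]:
    """Posterior (p(H | report), p(R | report)) for one testimonial report.

    Assumes p(T|H,R) = 1, p(T|not H,R) = 0, p(T|H,not R) = p(T|not H,not R) = a,
    with H and R independent a priori. says_h=True encodes T, False encodes not T.
    """
    if says_h:
        p_report = p_h * p_r + a * (1 - p_r)              # p(T)
        p_h_post = p_h * (p_r + a * (1 - p_r)) / p_report
        p_r_post = p_h * p_r / p_report                   # a reliable source asserts H only if H
    else:
        p_report = (1 - p_h) * p_r + (1 - a) * (1 - p_r)  # p(not T)
        p_h_post = p_h * (1 - a) * (1 - p_r) / p_report
        p_r_post = (1 - p_h) * p_r / p_report
    return p_h_post, p_r_post


# A report in favor of H, with p(H) = 0.51 and p(R) = 0.4 (the priors used in Sect. 5.1)
print(update_on_report(0.51, 0.4, True))  # approx. (0.708, 0.405)
```

Both posteriors move up: a report that agrees with a slightly favored H raises the credence in H and, to a lesser degree, the estimated reliability of its source.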

When we extend the model to include multiple reports, we follow the de-idealization and computational simplification of Merdes et al. (2021); that is, we assume the agent processes the reports sequentially, rather than fully rebuilding the overall network with every new report. This preserves much of the original motivation of the model, but implies two significant differences: (1) The sequential processing introduces order effects, so the agents are no longer perfectly rational in the Bayesian sense.Footnote10 (2) More important quantitatively, the sequential procedure tempers the penalty the original model imposes for inconsistency: Due to the binary nature of R, if the same source provides two contradicting reports on the same proposition, it must, with certainty, be a randomizer in the strict model. While the sequentialized model still rewards consistency (meaning that a more consistent source will, ceteris paribus, be considered more reliable), it does not assign certainty to ¬R. This is a desirable property for our purposes, as a source can be expected to change its mind legitimately as a result of exchanges with other agents. The sequentialization allows for this without requiring a much more complex model, at the cost of giving up on full Bayesian rationality.
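The sequential procedure can be sketched by feeding each posterior back in as the next prior. The sketch below reuses the single-report posteriors (with an assumed a = 0.5 and hypothetical helper names) and processes two contradicting reports from one source in both orders. In neither order does p(R) drop to 0, as it would in the strict model, and the final values differ slightly with the order, which is the order effect mentioned in (1).

```python
def update_on_report(p_h, p_r, says_h, a=0.5):
    """One Bovens-Hartmann update; returns (p(H | report), p(R | report))."""
    if says_h:
        z = p_h * p_r + a * (1 - p_r)
        return p_h * (p_r + a * (1 - p_r)) / z, p_h * p_r / z
    z = (1 - p_h) * p_r + (1 - a) * (1 - p_r)
    return p_h * (1 - a) * (1 - p_r) / z, (1 - p_h) * p_r / z


def process_sequentially(p_h, p_r, reports, a=0.5):
    """Process one source's reports one at a time; each posterior becomes the next prior."""
    for says_h in reports:
        p_h, p_r = update_on_report(p_h, p_r, says_h, a)
    return p_h, p_r


h_yes_no, r_yes_no = process_sequentially(0.51, 0.4, [True, False])
h_no_yes, r_no_yes = process_sequentially(0.51, 0.4, [False, True])
print(f"H then not-H: p(H)={h_yes_no:.4f}, p(R)={r_yes_no:.4f}")
print(f"not-H then H: p(H)={h_no_yes:.4f}, p(R)={r_no_yes:.4f}")
```

With these inputs the final p(R) is roughly 0.284 in one order and 0.287 in the other: the inconsistency is penalized, but not with certainty.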

Our overall argument does not depart from a premise of fully rational agents, and we also agree with Merdes et al. (2021) that the assumptions of the sequential model on the cognitive capabilities of the agents are less strict. For instance, the amount of memory the agents need to dedicate is constant in the sequential model, whereas it grows linearly with the number of reports in the strict model. Thus, a wider range of psychological agents is capable of implementing the adapted model’s updating algorithm.

One further qualitative property of the model is worth noting: The estimation of reliability depends on the compatibility of the report with the prior belief in H. The converse dependence is obviously plausible, since the change in my beliefs given a report will certainly depend on my beliefs about the reliability of its source. The dependence of reliability estimates on prior belief in H, however, may seem counter-intuitive or even irrational at first glance. But on closer inspection, it is quite reasonable: A listener simply uses their current state of knowledge regarding H to assess the source's reliability. If a listener is quite certain that H is true, they can use this information to infer that someone who disagrees with it is less likely to be reliable.

For an example, consider my strong belief that there is anthropogenic climate change. When I come across someone who objects to this belief, that gives me at least some reason to reduce my estimation of their reliability. Importantly, within the model framework, it will never have me dismiss the source’s report entirely or reduce my belief in their reliability to 0 unless I have somehow come to believe H with certainty. But it is rationally warranted on the basis of my evidence to discount my estimation of a source’s reliability when they voice statements very unlikely to be true according to my current state of belief. Doing otherwise would be to ignore some of the available evidence.
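A worked instance of this discounting, with illustrative numbers that do not come from the paper's experiments: a listener with p(H) = 0.9 and prior reliability p(R) = 0.4 for the source (the default used in Sect. 5.1), and an assumed randomizer parameter a = 0.5, hears the report ¬H. Applying the model's conditional probabilities,

$$p(R \mid \neg T) = \frac{p(\neg H)\,p(R)}{p(\neg H)\,p(R) + (1-a)\,p(\neg R)} = \frac{0.1 \cdot 0.4}{0.1 \cdot 0.4 + 0.5 \cdot 0.6} = \frac{0.04}{0.34} \approx 0.12,$$

$$p(H \mid \neg T) = \frac{p(H)\,(1-a)\,p(\neg R)}{p(\neg H)\,p(R) + (1-a)\,p(\neg R)} = \frac{0.9 \cdot 0.5 \cdot 0.6}{0.34} \approx 0.79.$$

The estimated reliability drops from 0.4 to about 0.12, but not to 0: the numerator p(¬H)p(R) vanishes only if p(H) = 1, in line with the claim that the source is never dismissed entirely unless H is believed with certainty.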

Very briefly, we want to mention that there is another model of expectation-based updating on testimonial reports. This model, developed by Olsson and Vallinder (2013), represents reliability as a continuous rather than a binary variable, ranging from perfectly reliable to perfectly anti-reliable. Merdes et al. (2021) have observed that the models produce surprisingly similar results in terms of accuracy with respect to p(H), despite this seemingly important difference. However, when it comes to learning reliability, there is an important qualitative difference: In our terminology, the Bovens-Hartmann model construes an unreliable agent essentially as a bullshitter, whereas the Olsson-et-al model also allows it to be judged as a pathological liar.

There are two reasons for us to stick with the sequentialized Bovens-Hartmann model despite this potential advantage: (1) The Olsson-et-al model comes with a number of technical difficulties relating to numerical integration, which not only increase computation times but, more importantly, threaten the reliability and comparability of results.Footnote11 (2) More substantially, we believe the assumption that sources could be anti-reliable is quite unreasonable under many empirically likely and practically relevant circumstances.

To clarify this, imagine someone who considers a source as anti-reliable. This might be someone who believes that whatever the IPCC publishes on climate change, the negation of it becomes more likely to be true. If the IPCC is approximately accurate in their statements and hence consistent, it will over and over confirm the agent’s beliefs, putting them further and further down in a cognitive hole from which their very algorithm for belief revision prevents them from escaping. An agent following the sequential Bovens-Hartmann process instead ends up simply ignoring the reports from this source, leaving more of an opening to be moved away from their belief by other speakers.Footnote12

For these reasons, the sequential Bovens-Hartmann model is more adequate to answer our questions regarding dishonesty and testimonial justice. But we should keep in mind what paradigm of an unreliable source underlies the model when analyzing the results.

4.2 Modeling Dishonesty

Next, we need to represent the three types of dishonest agents. This is done by defining a response function $t_i$, $t_i : [0, 1] \to \{H, \neg H\}$. Informally, it associates agent i's state of belief on H, $p_i(H)$, with a binary response, as presupposed by the testimony model. This allows us to easily define the propagandist and the pathological liar:

$$t_i(p_i(H)) = H \quad \text{for all } p_i(H) \in [0, 1],$$

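describing a propagandist in favor of H. The function is constant precisely because the propagandist's reports are unmoored from their own genuine beliefs. This looks different for the pathological liar:

$$t_i(p_i(H)) = \begin{cases} \neg H & \text{if } p_i(H) > 0.5, \\ H & \text{if } p_i(H) < 0.5. \end{cases}$$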

For the bullshitter, we need to define $t_i$ as a random variable such that

$$p(t_i = H) = p(t_i = \neg H) = 0.5,$$

that is, with equal probability i will report to confirm or reject H, once again, regardless of $p_i(H)$. In principle, this model can easily be extended to assign different probabilities to the report options by setting

$$p(t_i = H) = a, \qquad p(t_i = \neg H) = 1 - a, \qquad a \in [0, 1].$$

For our purposes, setting a to 0.5 is useful, as we use the bullshitter as the ideal type of an agent who does not care at all about the content of what they are saying. In other contexts it might be sensible to adapt the value to represent an agent who sits somewhere between a pure propagandist and a pure randomizer: an agent without a strict strategy, but with a bias to report one way rather than another. So, even if we do not investigate this case here, the model can easily be parameterized to represent it.

Similarly, while we are interested in an ideal-typical pathological liar, it is easy to modify the response function so as to represent a mixture of random and strict behavior:

$$p(t_i = H) = f(p_i(H)), \qquad f : [0, 1] \to [0, 1].$$

In a simple instantiation, $f(p_i(H)) = 1 - p_i(H)$ describes an agent whose probability of reporting H decreases in proportion to their degree of belief in H. By switching to a different f, any desired bias towards or against H can be introduced, as long as f ranges over [0, 1].
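The response functions can be sketched in Python as follows. This is an illustration under stated assumptions, not the authors' code: a report of `True` stands for H, the sincere threshold of 0.5 and the coin flip at exactly p_i(H) = 0.5 are our assumptions (the text does not say how ties are resolved), and all function names are hypothetical.

```python
import random
from typing import Callable


def truth_teller(p_h: float, rng: random.Random) -> bool:
    """Report in accordance with one's belief; a tie at 0.5 is a coin flip (assumption)."""
    if p_h == 0.5:
        return rng.random() < 0.5
    return p_h > 0.5


def propagandist(p_h: float, rng: random.Random) -> bool:
    """Always assert H, whatever one believes."""
    return True


def bullshitter(p_h: float, rng: random.Random, a: float = 0.5) -> bool:
    """Assert H with probability a, independently of p_h."""
    return rng.random() < a


def pathological_liar(p_h: float, rng: random.Random) -> bool:
    """Assert the opposite of what a truth-teller with the same belief would assert."""
    return not truth_teller(p_h, rng)


def mixed_reporter(p_h: float, rng: random.Random,
                   f: Callable[[float], float] = lambda p: 1 - p) -> bool:
    """Assert H with probability f(p_h); the default f(p) = 1 - p is the simple instantiation."""
    return rng.random() < f(p_h)


rng = random.Random(0)
print([pathological_liar(p, rng) for p in (0.9, 0.2)])  # [False, True]
```

Passing the random generator explicitly keeps runs reproducible under a fixed seed, which matters when many simulation runs are averaged.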

We will stick for the current investigation with the three simple response functions for propagandist, bullshitter and pathological liar, but it should be remarked that the modeling framework possesses the expressive power to capture broader phenomena. Having thus defined the behavioral models for both hearer and speaker, we turn to the minimal social embedding that is used for the simulation experiments.

4.3 Social Dynamics

The model of the social environment is intended to be minimal, to represent a type of testimonial exchange that does not depend on explicit institutions. This is meant to identify a set of minimal conditions to bring about certain phenomena, but is much more austere than most actual social conditions. We do not deny the significance of institutional and collective action, nor their necessity to deal with certain social epistemic problems (see Anderson (2012) for a discussion). But this does not obviate the need for individual virtue.

The environment consists of a group of agents with uniform communication capabilities. They are equally likely to communicate and choose uniformly at random which agent to address. No further network structure is imposed on the group to direct communication, and no limitations on communication are imposed (such as a threshold of assertion, cf. Olsson and Vallinder 2013).

The agents are not collecting any non-testimonial evidence during the simulated testimonial exchange. All their non-socially available evidence is represented in their prior degrees of belief. This choice reflects the intent to focus on social processes and the problems that arise specifically from them.

Furthermore, the agents hold beliefs on a set of several propositions. This choice is informed by previous research on a similar testimonial model by Merdes et al. (2021), who noticed difficulties with agents effectively learning reliability in a single-proposition environment, even though their model includes continuous non-social evidence gathering. To enable social learning of reliability, we extend the model to include additional propositions that agents report on, some of which they hold strong and, by assumption, accurate beliefs about, and which they can hence use as a benchmark to vet interlocutors.Footnote13 This benchmarking is implicit in the model, as agents cannot elicit reports actively, but as we will show in our results, at least in certain areas of the parameter space, these assumptions enable the learning of both reliability and beliefs in the propositions under consideration.

Thus, the model runs as follows: At each time step, an agent and a proposition are chosen uniformly at random from the respective sets. A report is generated from the agent conditional on their type (truth-teller, propagandist, bullshitter or pathological liar) and current state of belief with respect to the chosen proposition. This report is then communicated to another randomly chosen agent, which updates its state of belief for both the proposition and the reliability of the source.

5 Simulations and Results

5.1 Parameter Choices

Before we get into the specifics of our two simulation experiments, some general choices need to be made. Even given the model specification, the parameter space is too broad to explore in a single study. We vary the following independent variables: the type and number of dishonest agents, the number of propositions under discussion, and the initial credences.

Furthermore, we need a variant of the hearer model to represent agents lacking testimonial justice, against which our supposedly more virtuous agents have to measure up. It is constructed simply by taking the agent we defined and removing the update of p(R). The reliability assignments of such an agent are fixed, and hence it forms the paradigm of an agent that is not testimonially just in the sense we specified. If such an agent happened to land on the right value by chance, it might be considered to have naive testimonial justice (Fricker 2007, 92f.). This case is highly unlikely, and the agent would still lack the full virtue, hence we will not consider this possibility further.Footnote14
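The contrast between the two hearer models can be illustrated with a toy case that is not one of the paper's experiments: a hearer who correctly holds a reference proposition with credence 0.9 hears five consecutive denials of it from one hypothetical source. The only difference between the two hearers is whether p(R) is updated; a = 0.5 and the helper names are assumptions.

```python
def update_on_report(p_h, p_r, says_h, a=0.5):
    """Bovens-Hartmann update; returns (p(H | report), p(R | report))."""
    if says_h:
        z = p_h * p_r + a * (1 - p_r)
        return p_h * (p_r + a * (1 - p_r)) / z, p_h * p_r / z
    z = (1 - p_h) * p_r + (1 - a) * (1 - p_r)
    return p_h * (1 - a) * (1 - p_r) / z, (1 - p_h) * p_r / z


def hear_all(p_h, p_r, reports, learns_reliability):
    """Process reports sequentially; a hearer lacking the virtue keeps p(R) fixed."""
    for says_h in reports:
        new_h, new_r = update_on_report(p_h, p_r, says_h)
        p_h = new_h
        if learns_reliability:
            p_r = new_r
    return p_h, p_r


denials = [False] * 5
just = hear_all(0.9, 0.4, denials, learns_reliability=True)
fixed = hear_all(0.9, 0.4, denials, learns_reliability=False)
print(f"just hearer:  p(H)={just[0]:.2f}, p(R)={just[1]:.3f}")   # roughly 0.72 and 0.008
print(f"fixed hearer: p(H)={fixed[0]:.2f}, p(R)={fixed[1]:.3f}")  # roughly 0.12 and 0.400
```

The same mechanism would also discount an honest source who happens to disagree; the benefit therefore hinges on the hearer's reference beliefs being accurate, which the model secures only by assumption for the informative reference propositions.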

To instantiate the model, one needs to set priors for p(R), p(H), the size of the group and the number of testimonial reports exchanged. As the default prior for p(R), we set 0.4. This value can be interpreted as being mildly skeptical of any unknown source. It is a sensible starting point, because it requires some learning to treat a source as either reliable or unreliable. According to exploratory experiments, the results are not sensitive to small changes in this value, but the effects we observe will become less and less pronounced when the value approaches 0 or 1.

For p(H), we distinguish between the proposition of interest, whose prior we set to 0.51, and the additional reference propositions. The value 0.51 represents the assumption that the necessary information is present in the group to converge to the value correct by assumption (), while leaving room for the dishonest agents to successfully dissuade the group from the correct answer. The reference propositions are either informative, in which case they are set to 0.9, or non-informative, in which case they are set to 0.5. The logic is that informative propositions are ones the agents are highly confident of, and rightly so; they represent the reliable stock of knowledge in the group. Non-informative propositions, on the other hand, do not help; they are used merely to distinguish between effects in the model that occur purely due to the presence of additional propositions to discuss and effects due to the informational content of those propositions and their value as an epistemic resource for the agents.
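To see why informative reference propositions matter, it helps to write out the reliability update for a single report. The derivation assumes the same Bovens-Hartmann-style source model as the simulation, in which an unreliable source asserts H with probability a. The notation $\mathrm{Rep}_H$ and $\mathrm{Rep}_{\neg H}$ for a report asserting H or ¬H is ours. Under these assumptions, the posterior reliability is:

$$
\begin{aligned}
p(R \mid \mathrm{Rep}_H) &= \frac{p(H)\,p(R)}{p(H)\,p(R) + a\,\bigl(1 - p(R)\bigr)},\\[4pt]
p(R \mid \mathrm{Rep}_{\neg H}) &= \frac{\bigl(1 - p(H)\bigr)\,p(R)}{\bigl(1 - p(H)\bigr)\,p(R) + (1 - a)\,\bigl(1 - p(R)\bigr)}.
\end{aligned}
$$

With $a = \tfrac12$ and $0 < p(R) < 1$, a report confirming H raises trust exactly when the hearer already leans towards H:

$$
\frac{p(H)\,p(R)}{p(H)\,p(R) + \tfrac12\bigl(1-p(R)\bigr)} > p(R)
\iff p(H) > p(H)\,p(R) + \tfrac12\bigl(1-p(R)\bigr)
\iff p(H)\bigl(1-p(R)\bigr) > \tfrac12\bigl(1-p(R)\bigr)
\iff p(H) > \tfrac12 .
$$

For the default prior p(R) = 0.4 and an informative proposition at p(H) = 0.9, a confirming report yields $0.36/0.66 \approx 0.545$ and a contradicting one $0.04/0.34 \approx 0.118$. At p(H) = 0.5, both yield exactly 0.4. Under these assumptions, then, a non-informative proposition gives the hearer no leverage on a source's reliability, whereas a well-anchored one separates confirming from contradicting sources after a single report.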

Group size (ten agents) is chosen on the basis of previous research on similar models, computational tractability, and the idea that the model can most directly represent a small group discussion, for which this is a plausible size. It leaves enough room to vary the number of dishonest agents, and nothing depends on the precise number. For larger groups, however, one would need to be more cognizant of possible network effects, which are not modeled in our small-group version. The number of reports exchanged is derived approximately from the group size: There is close to one report for each pair of agents. The actual set of reports is generated randomly from this possible set of dyadic exchanges, but the number ensures, according to exploratory experiments, a saturation of the group’s exchange process.

Finally, each parameter configuration is run 1000 times, and the average is reported. With the technical points out of the way, we can turn to the variation of interest. Our dependent variables are, first, the accuracy of p(H), measured by the squared error, and second, the differentiation in reliability between honest and dishonest agents, assessed by a qualitative comparison of the values of p(R). Let us begin with the former.
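The two dependent variables could be computed from the final state of each run roughly as follows. This is a sketch with hypothetical names, and it rests on three assumptions. First, the proposition of interest is true. Second, the squared error is averaged over all agents in the group. Third, `trust[i][j]` stores hearer i's p(R) for speaker j. In line with the text's qualitative comparison, the reliability function returns the two averages to be compared rather than a single score.

```python
from statistics import mean
from typing import Callable, List, Sequence, Set, Tuple


def squared_error(credence: float, truth: bool = True) -> float:
    """Squared error of a credence p(H) against the truth value of H (1 or 0)."""
    return (float(truth) - credence) ** 2


def group_error(credences: Sequence[float], truth: bool = True) -> float:
    """Mean squared error over agents, for the proposition of interest only
    (assumption: reference propositions are left out, all agents are counted)."""
    return mean(squared_error(c, truth) for c in credences)


def mean_trust_by_honesty(trust: List[List[float]],
                          dishonest: Set[int]) -> Tuple[float, float]:
    """Average p(R) assigned to honest and to dishonest speakers.

    trust[i][j] is hearer i's p(R) for speaker j; self-assessments are skipped.
    """
    honest_vals, dishonest_vals = [], []
    for i, row in enumerate(trust):
        for j, r in enumerate(row):
            if i == j:
                continue
            (dishonest_vals if j in dishonest else honest_vals).append(r)
    return mean(honest_vals), mean(dishonest_vals)


def average_over_runs(run_once: Callable[[int], float], n_runs: int = 1000) -> float:
    """Average a per-run outcome over independently seeded runs."""
    return mean(run_once(seed) for seed in range(n_runs))
```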

5.2 Improving Accuracy

We start by investigating the difference in accuracy between expectation-based updating and agents with fixed trust. If testimonial justice functions as a safeguard against dishonest agents, we ought to see higher accuracy (lower error) for the updating agents than for the fixed ones under at least some relevant conditions. This assumes that an agent operating on expectation-based updating is more testimonially just than a fixed agent. Under our explication of testimonial justice, this is the case, as being able to adapt one’s credibility assignment is a necessary condition for the virtue. Therefore, higher accuracy for updaters shows that being more testimonially just makes one more accurate (again, under some relevant conditions).

Before we go into the simulations, a note on the use of reference propositions is in order. It has been shown before that updating reliability is not always epistemically beneficial (Merdes et al. 2021). These negative results seem to depend on two conditions: (1) The difference between reliable and unreliable agents is subtle. Their model contains no dishonest agents; it is merely the case that some agents, through bad luck, come to hold false beliefs and then communicate them. This can happen to honest agents as well, and it is inherently difficult to recognize this type of agent as unreliable within the framework. (2) The simulation environment is limited to a single proposition, such that the agents cannot anchor their judgment in reference or benchmark propositions about which they are already comparatively well informed. For the purposes of their argument, these assumptions are sensible, as the authors intend to refute quite fundamental claims regarding expectation-based updating. But we do not claim that expectation-based updating, as a realization of testimonial justice, always confers accuracy benefits. We therefore move to a region of the modeling space that leaves room for the strategy to work, by introducing dishonest agents and allowing for additional propositions, as detailed in the previous section.

Given these remarks, we turn to our simulation experiments. We compare the accuracy of sequential Bovens-Hartmann agents with that of agents with a fixed p(R). Accuracy is measured by the squared error of the belief in the proposition of interest, excluding the states of belief on the reference propositions. The comparison is, again, made at the end of an extended testimonial exchange.Footnote15

First, we show that, while different in certain respects, our model can reproduce the negative finding of Merdes et al. (2021), namely that with a single proposition and no reference to anchor judgments, expectation-based updating fails to improve accuracy in the face of dishonesty.

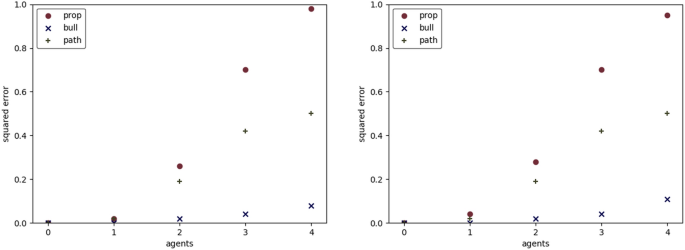

We vary the number of dishonest agents to show their negative effect on accuracy, which is measured as squared error (so higher values signify lower accuracy). Figure 2 depicts the case with no additional reference propositions. As would be expected, updating yields no significant accuracy benefit, replicating the previous result. We also see differences in impact between our various types of dishonest agents, which we discuss in more detail below.

Fig. 2 Results for varying numbers of dishonest agents (0–4) in a population of 10 agents; initially p(R) = 0.4 and p(H) = 0.51; 300 reports exchanged. Every data point shows an average over 1000 runs; the measure is squared error. Fixed agents (left) versus updaters (right)

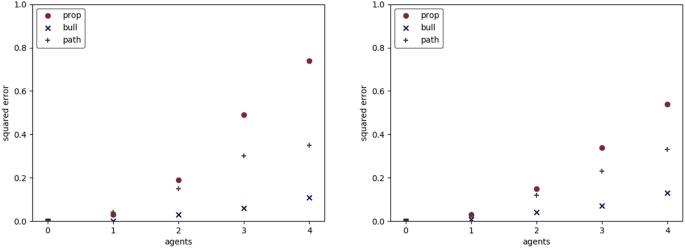

However, if we add highly informative reference propositions, the benefits of being willing to learn become readily apparent (Fig. 3). Note that this condition also seems to improve the accuracy of the fixed agents. But this is merely a side effect of the same number of reports being allocated to a larger number of propositions. Because the reports are spread out, there is less convergence, and to the degree that convergence is driven by the distortions of the dishonest agents, this implies higher accuracy. The updating agents, which we interpret as possessing testimonial justice, also benefit from this effect, but as the graph shows, they end up far more accurate than the fixed agents. What remains to be shown to complete our argument is that the expectation-based updaters do indeed learn to differentiate between honest and dishonest agents, which is not directly implied by the improvement in accuracy.Footnote16

Fig. 3 Parameters as in Fig. 2, but with reference propositions assumed to be true and set to an initial degree of belief of 0.9. Fixed agents (left) versus updaters (right)
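The spreading-out effect invoked above is simple arithmetic under the model's uniform sampling of speaker, proposition and hearer. Write N for the number of reports, n for the number of agents and m for the number of reference propositions (m is our notation; the number used for Fig. 3 is not restated here). Each agent is the hearer of a given report with probability $\tfrac{n-1}{n}\cdot\tfrac{1}{n-1} = \tfrac1n$, so the expected counts are:

$$
\mathbb{E}\bigl[\#\text{reports on the proposition of interest}\bigr] = \frac{N}{m+1},
\qquad
\mathbb{E}\bigl[\#\text{such reports received by a given agent}\bigr] = \frac{N}{n\,(m+1)} .
$$

With N = 300 and n = 10, as in Fig. 2, m = 0 gives 300 reports on the proposition of interest, about 30 per agent. A purely illustrative m = 4 reduces this to 60, about 6 per agent, which leaves less room for convergence, whether it is driven by honest or by dishonest testimony.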

Before we turn to a closer inspection of reliability, we should comment on the differences between the types of dishonest agents, even though their distorting effects on accuracy follow similar trends. The propagandist is the most effective because the model favors consistency, and the propagandist is nothing if not consistent. We note that this is plausible only as long as no reliable reference from outside the conversation enters: If that happens, we should expect a rational, honest agent to change their mind at least some of the time. Thus, perfect consistency in the sense of the propagandist would no longer embody the kind of consistency we expect from rational agents. But within the confines of the modeled scenario, this style of dishonesty is the most effective at distorting convergence to accurate belief.

The bullshitter turns out to be quite ineffective. An observant reader may have already suspected as much, as this is the type of dishonest agent the hearer model assumes in its construction. Not only can the agents pick up on the high level of inconsistency that the bullshitter exhibits; even when bullshitters are trusted, they do not effectively pull hearers away from accurate beliefs: They are just as likely to offer a report guiding towards accurate belief as one guiding away from it. Again, in a modified environment this strategy can be more harmful to accuracy. In particular, bullshitters can drive the discourse towards the neutral point of 0.5 when their utterances form a sufficiently large part of the overall reports exchanged, without being recognized as clear partisans. Thus, they would be more successful in an environment where dishonest agents are expected to be highly consistent compared to honest ones.

The pathological liar turns out to sit in the middle with respect to the efficacy of its distortions. This is explained by the fact that its behavior in actual runs of the model also falls between that of the other two types: It tends to behave more inconsistently at the beginning, but becomes increasingly consistent as its belief converges. Theoretically, this pattern could also turn into a cycle: When the pathological liar succeeds in turning its environment against what it actually believes, it will then receive numerous reports urging it to change its mind (remember, the dishonest agents learn just as honest ones do; they differ only in their reporting behavior). Thus, the mediate effect of influencing others with its lies would be to turn around its own belief, and hence eventually its reports, starting the cycle over again. Within our model parameters, such cycles are highly unlikely, so we mention them merely as a theoretical possibility.

In the following analysis of reliability learning, we focus on the propagandist as the dishonest agent of choice because of its pronounced effect. This directs attention to the strongest effects of dishonesty and, within the world of the model, asks for a safeguard against the most effective type of dishonesty.

5.3 Detecting Dishonesty

First, we need to further explicate what we expect from an agent who possesses, within the logic of the model, testimonial justice. Our understanding is informed by the interest in dishonest agents: The primary end of testimonial justice is thus to distinguish dishonest agents from honest ones. In the terms of the model, an agent is considered virtuous if it assigns substantially greater trust to honest than to dishonest speakers.

To test our agents for this capacity, we ran a number of simulation experiments, whose results are shown in Table 1. Each cell contains the average level of reliability assigned to a randomly chosen honest or dishonest agent, respectively. The parameterization is still as laid out at the beginning of this section. The left half of the table contains results for highly informative reference propositions, the right half those for fully non-informative ones. Across the rows, the numbers of propositions and dishonest agents are varied. For the reasons noted above, the dishonest agents in these simulations are of our propagandist type.

For starters, a few general observations. Adding non-informative propositions has no significant impact on the detection of dishonest agents. As expected, for a given number of reference propositions, a larger number of dishonest agents diminishes the reliability gap and hence worsens differentiation. Differentiation is, however, at least partly recovered with enough informative reference propositions; i.e., given enough additional epistemic resources to anchor judgments to, expectation-based updating maintains a relatively high level of differentiation.
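The role of reference propositions in detection can be illustrated with a deliberately stripped-down case. It involves one hearer, one source, one reference proposition that is true by assumption, and five consecutive reports. This is a hypothetical toy, not the simulation behind Table 1. It reuses the assumed Bovens-Hartmann-style update (randomization parameter a = 0.5), and `trust_after` is an illustrative name.

```python
from typing import List


def bh_update(h: float, r: float, says_h: bool, a: float = 0.5):
    """Sequential update of (p(H), p(R)); assumed source model: an unreliable
    source asserts H with probability a, whatever the truth."""
    p_hr = h * r if says_h else 0.0                    # H true, source reliable
    p_hn = h * (1 - r) * (a if says_h else 1 - a)      # H true, source unreliable
    p_nr = 0.0 if says_h else (1 - h) * r              # H false, source reliable
    p_nn = (1 - h) * (1 - r) * (a if says_h else 1 - a)  # H false, source unreliable
    total = p_hr + p_hn + p_nr + p_nn
    return (p_hr + p_hn) / total, (p_hr + p_nr) / total


def trust_after(h0: float, r0: float, reports: List[bool]) -> float:
    """p(R) that a single hearer assigns to one source after hearing `reports` on H."""
    h, r = h0, r0
    for says_h in reports:
        h, r = bh_update(h, r, says_h)
    return r


if __name__ == "__main__":
    # H is true by assumption: the truth-teller keeps asserting it, while the
    # propagandist (assumed agenda) keeps denying it; five reports each.
    for h0 in (0.9, 0.5):
        honest = trust_after(h0, 0.4, [True] * 5)
        propagandist = trust_after(h0, 0.4, [False] * 5)
        print(f"prior p(H) = {h0}: honest {honest:.3f}, propagandist {propagandist:.3f}")
```

Under these assumptions, the hearer starting at 0.9 raises its trust in the truth-teller above 0.4 and lowers its trust in the propagandist below it. The hearer starting at 0.5 ends up trusting both sources to the same degree, because the two trajectories mirror each other: A consistent source gains trust with whatever it persuades the hearer of.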

We interpret these results as showing that agents using expectation-based updating to assess reliability achieve testimonial justice in the properly qualified sense, provided that either the epistemic context is sufficiently benign (very few dishonest agents) or there are enough epistemic resources available (here in the form of reference propositions). There are clearly limits to what expectation-based updating can achieve as a route to testimonial justice, but these limitations align with cases of non-culpable injustice: When an agent’s social environment is too distorted by dishonesty and there is no anchor outside the particular social group, we cannot expect the agent to form adequate judgments of reliability, since there is simply no basis for good judgment.

This qualitative assessment of differentiation may seem too simplistic, but it is the most reasonable approach within our framework of inquiry. Any more quantitative measure would attach a meaning to the actual numbers that cannot be cashed out, because there is no direct mapping to real-world magnitudes. Nevertheless, it is illuminating to briefly consider some further options. One additional question we raised earlier, in the theoretical section, is the temporal dimension of testimonial justice and credibility assignment. We look at the endpoint of a simulation run because we do not associate a specific interpretation of time with the evolution of the model. But of course, how quickly the agents learn is in principle an interesting question. In particular, it may give rise to a trade-off often discussed in social epistemology, where fast learning may tend to decrease accuracy (in this case, of credibility assignment). In a purely epistemological discussion, accuracy of belief trumps speed of convergence as a value; but in the current context, this turns into a contest between more justice today and a higher level of justice at some point in the future.Footnote17

Furthermore, we focus on the recognition of dishonesty rather than overall reliability. Again, given our topic, this is a reasonable restriction, but in general, full testimonial justice encompasses a consideration of competence. In the model, a dishonest agent should generally be ignored, whereas the trustworthiness of an honest agent is contingent on its state of belief, which in turn depends on the current state of the group.Footnote18 The circumstances in which an honest agent is likely to be unreliable correlate with situations in which the cause of truth-seeking is already lost. Hence it makes sense to assign high levels of trust to honest agents even though they can also be mistaken and thus spread false beliefs. For these reasons, assigning substantially lower trust to dishonest than to honest agents is a sufficient approximation of testimonial justice, and the precise levels of p(R) need not be assessed.

Thus, we have now completed our argument: Agents using expectation-based updating are more accurate than our comparison class of agents under the relevant circumstances. At the same time, we have shown that these agents are more testimonially just. Hence, (approximate) testimonial justice functions as a safeguard against the distortions of dishonest agents.

6 Conclusion

In this paper, we have offered support for two claims: First, we have shown that it is possible for a type of naive Bayesian agent to approximately learn reliability under rather minimal assumptions. This does not contradict the general result of Merdes et al. (2021), because the claim is limited to a subset of the parameter space that is somewhat favorable to the agents. But it still qualifies the claim that sequential, expectation-based updating confers no epistemic benefits. In particular, it supports the idea that in epistemic domains that realize the assumption of strong benchmark propositions, expectation-based updating provides a useful tool for boundedly rational epistemic agents.

Second, the results support the claim that testimonial justice may not only operate as a safeguard against prejudicial judgments of credibility that unfairly discount certain speakers’ testimony, but may also protect against dishonesty. This fits well with Fricker’s reference to an epistemic state-of-nature argument (cf. Fricker 2007, Ch. 5.1), as it offers a self-regarding reason to be epistemically just, expanding the scope of this claim. As one might already intuitively believe, assigning appropriate credibility to a source is in the best epistemic interest of an agent: Deflating it exposes one to a loss of information; inflating it exposes one to overconfidence and makes one a mark for dishonest agents of all sorts.Footnote19

This might raise concerns about Fricker’s suggestion that the virtue of testimonial justice may demand a disposition to make inflated credibility judgments about members of certain groups when in doubt. But these concerns are not supported by the argument we present here, as it would be consistent with our results to recommend differential priors for reliability conditional on group membership. Only if the dishonest speakers were disproportionately members of such groups would a problem arise. If nefarious agents, such as corporate entities interested in distorting science, were aware of the tendency to extend the benefit of the doubt to certain groups, they might be incentivized to choose their speakers from those very groups. But unless such a process is assumed, our results do not refute Fricker’s prescription.

Returning with all this to the issue of dishonesty in science, one main lesson can be drawn: Having a solid stock of knowledge against which to test a potential source of knowledge is a critical resource that enables one to detect potentially dishonest agents. Thus, if someone expresses views on related topics that are inconsistent with well-known facts, we can use this as evidence against the honesty of our interlocutor. This is, in a nutshell, what it means to update based on expectation. What the model highlights is that this process only works reliably to the advantage of an epistemic agent if there are propositions in play other than the one currently under discussion.

As with any exercise in formal modeling, it is important to acknowledge some limitations. First, while other propositions were in play, we did not explicitly assume that their contents were correlated. Implicitly, we assume connections in content, because we model the dishonest agents as dishonest across the whole set of propositions. But owing to its technical complexity, we do not model such a correlation.

Second, as noted above, we include no input other than testimony from the group. Specifically, this means that no further empirical data can be collected. It follows that we model only processes that at least approximately realize such a condition, for instance, short discussions or communications with non-experts who do not have the opportunity to gather empirical data. Depending on how such data are understood (for instance, how reliable they are and how well their reliability can be gauged compared to social processes), such input could play a role similar to that of benchmark propositions in our model.

The agents also make no use of interpersonal cues of dishonesty, which might be given away by body language or facial expression. This is grounded in the assumption that many of the exchanges do not take place in person but, for instance, in writing.

More significantly for the domain of science, agents have no information on the institutional backgrounds of their sources. This makes credentials unavailable (they are the foundation of one of the sources of information Goldman suggests for comparing experts; see Goldman 2011), but it also hides many sources of potential bias, such as funding sources. Such information may be partly available outside situations of blind review. But as Sismondo (2009) points out, not all contributions to a paper are necessarily acknowledged equally, leaving the recipient of information in the dark, not knowing what they do not know. Thus, we consider it a minimal assumption not to use such background information in the model.

Despite these limitations in its representative capacity, the underlying logic of the model captures important aspects of the epistemic process of testimony. Its conclusions should thus offer not only some insight into the varieties of dishonesty and the interpretation of testimonial virtue, but also some tentative suggestions for strategic behavior in the face of potential dishonesty in one’s sources.

Notes

1. They describe, for instance, how differences between the concepts of causation in common use and in epidemiology made it possible to sow doubts in the public about the link between smoking and cancer in court.

2. We assume here that testimony and the assignment of reliability constitute an epistemic process whose rationality does not depend on interpersonal relations between the agents. A brief overview of such positions is also provided by Lackey (2011).

3. This setup is similar to the one simulated by Hegselmann and Krause (2002), but the updating mechanism in their model is quite different: Confidence, which roughly translates to credibility assignment in our model, is set as a parameter rather than learned, and the model does not use conditionalization but qualified averaging of beliefs.

4. This only holds true if the propositions in question are not related to ascriptions of credibility. If instead some of the propositions refer, for instance, to the psychological states of the speaker, it may become reasonable to introduce the differentiation.

5. Concerns of epistemic injustice arise in other circumstances as well, in particular regarding the appropriate distribution of attention (Smith and Archer 2020), but those problems are beyond the scope of this paper.

6. For simplicity, the focus is on literal speech, though, as Williams (cf. 2002, Ch. 5.4) notes, strategies to induce false belief may often rely on plays on literal and non-literal meaning, equivocation and the like.

7. For a formal account of the methods applied in the tobacco strategy, see also Weatherall et al. (2020).

8. We may further complicate this category by considering agents who testify randomly, but conditioned on their state of belief; thus, they could be more likely to voice H given that they have high confidence in the truth of H, or vice versa. For the purpose of this paper, we limit our analysis to the more ideal cases.

9. We use the common shorthand p(H) for .

10. Note that we are not speaking of effects such as the Bayesian anchoring described by Hartmann and Rafiee Rad (2020).

11. This hinges in part on the fact that, in the model, a reliability of 0.5 is mathematically a fixed point, but arbitrarily small deviations in either direction move the agent away from this fixed point; hence, very small numerical deviations in the calculations can potentially have substantial effects on model behavior.

12. We also suspect that there is an issue with intuitions in favor of the more expressively powerful model: While, as our concept of a pathological liar underlines, we can certainly imagine an actually anti-reliable source, real listeners who come to believe that a source is anti-reliable may not learn , but instead a much more specific claim that is not warranted by rationality within the model framework. For instance, I may come to believe that the CIA behaves as a pathological liar. But I need to be quite careful in what I infer from this in conjunction with the agency’s statements. For instance, if they claimed that the Russian Federation destroyed the Nord Stream 2 pipeline, this would not support the counterclaim that the USA did it, but merely the rather unspecific proposition that it must have been someone other than whoever the anti-reliable source names. And this already makes the problematic concession that this source plausibly operates as an anti-reliable source, and not, for instance, as a propagandist in our technical sense.

13. This is also a fundamental difference from another agent-based model of manipulative epistemic agents, suggested by Holman and Bruner (2015); see also Holman (2021). Their model is based on agents solving a bandit problem and communicating on a network (introduced to philosophy by Zollman 2007). While quite interesting in its own right, this model has been implemented only with a single proposition, and the recognition of dishonest agents depends on non-social data collection. Thus, the target of these studies is related to, but distinct from, the current investigation.

14. The idea of such fixed-trust agents for comparison is not new; it already appears in Hahn et al. (2018). In the original context, it is meant as a neutral point of comparison, whereas we use it to represent a prejudiced agent.

15. As a side note, the framing conditions are such that the exchange generally reduces accuracy, as we are interested in the utility of testimonial justice in safeguarding against the influence of dishonest agents.

16. This result also indicates that, without additional epistemic resources, merely introducing more severely unreliable sources in the form of our dishonest agents does not suffice to elicit benefits from expectation-based updating.

17. For a discussion of the trade-off in epistemology, cf. Zollman (2007).

18. In theory, the story is more complicated in the case of the pathological liar, but, as we argued when we introduced this character, dismissing their testimony rather than trying to use it is still reasonable and exposes the hearer to less epistemic risk.

19. This is not the only disadvantage of inflated credibility assignments; it seems that such inflation entails a deflation of other judgments, though we have not formally argued for this claim here.

References

- Anderson, E. 2012. Epistemic justice as a virtue of social institutions. Social Epistemology 26 (2): 163–173.

- Bovens, L., and S. Hartmann. 2003. Bayesian epistemology. Oxford: Oxford University Press.

- Davis, E. 2016. Typecasts, tokens, and spokespersons: A case for credibility excess as testimonial injustice. Hypatia 31 (3): 485–501.

- Frankfurt, H. G. 2005. On bullshit. Princeton: Princeton University Press.

- Fricker, M. 2007. Epistemic injustice: Power and the ethics of knowing. Oxford: Oxford University Press.

- Goldman, A. I. 2011. Experts: Which ones should you trust? In Social epistemology: Essential readings, 109–133. Oxford: Oxford University Press.

- Hahn, U., C. Merdes, and M. von Sydow. 2018. How good is your evidence and how would you know? Topics in Cognitive Science 10 (4): 660–678.

- Hartmann, S., and S. Rafiee Rad. 2020. Anchoring in deliberations. Erkenntnis 85: 1041–1069.

- Hegselmann, R., and U. Krause. 2002. Opinion dynamics and bounded confidence: Models, analysis, and simulation. Journal of Artificial Societies and Social Simulation (JASSS) 5 (3).

- Holman, B. 2021. An ethical obligation to ignore the unreliable. Synthese 198 (Suppl. 23): 5825–5848.

- Holman, B., and J. P. Bruner. 2015. The problem of intransigently biased agents. Philosophy of Science 82 (5): 956–968.

- Holman, B., and J. Bruner. 2017. Experimentation by industrial selection. Philosophy of Science 84 (5): 1008–1019.

- Irzik, G., and F. Kurtulmus. 2024. Distributive epistemic justice in science. The British Journal for the Philosophy of Science 75 (2): 325–345.

- Lackey, J. 2011. Acquiring knowledge from others. In Social epistemology: Essential readings, 71–91. Oxford: Oxford University Press.

- Medina, J. 2011. The relevance of credibility excess in a proportional view of epistemic injustice: Differential epistemic authority and the social imaginary. Social Epistemology 25 (1): 15–35.

- Merdes, C., M. von Sydow, and U. Hahn. 2021. Formal models of source reliability. Synthese 198: 5773–5801.

- Olsson, E. J., and A. Vallinder. 2013. Norms of assertion and communication in social networks. Synthese 190 (13): 2557–2571.

- Oreskes, N., and E. M. Conway. 2010. Merchants of doubt: How a handful of scientists obscured the truth on issues from tobacco smoke to global warming. London: Bloomsbury.

- Sismondo, S. 2009. Ghosts in the machine: Publication planning in the medical sciences. Social Studies of Science 39 (2): 171–198.

- Smith, L., and A. Archer. 2020. Epistemic injustice and the attention economy. Ethical Theory and Moral Practice 23 (5): 777–795.

- Weatherall, J. O., C. O’Connor, and J. P. Bruner. 2020. How to beat science and influence people: Policymakers and propaganda in epistemic networks. The British Journal for the Philosophy of Science 71 (4): 1157–1186. https://doi.org/10.1093/bjps/axy062.

- Williams, B. 2002. Truth and truthfulness: An essay in genealogy. Princeton: Princeton University Press.

- Zollman, K. J. 2007. The communication structure of epistemic communities. Philosophy of Science 74 (5): 574–587.

Acknowledgements

I thank the members of the DFG network Simulations of Scientific Inquiry, especially Patrick Grim, for their commentary on an early version of this work.

Funding

This research is part of a project No. 2022/45/P/HS1/03948 co-funded by the National Science Centre and the European Union Framework Programme for Research and Innovation Horizon 2020 under the Marie Skłodowska-Curie grant agreement No. 945339.

Ethics declarations

Conflict of interest

The author has no conflict of interest to declare.


Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.


About this article

Cite this article

Merdes, C.J. Dishonest Testimony and the Virtue of Testimonial Justice: A Naive Bayesian Analysis. J Gen Philos Sci (2025). https://doi.org/10.1007/s10838-024-09702-8


- DOI https://doi.org/10.1007/s10838-024-09702-8

Keywords

- Testimony

- Epistemic justice

- Bayes

- Epistemic virtue

- Truthfulness