Article Content

Abstract

Context

Potential employers can easily access job candidates’ photos online and attempt to infer a candidate’s fit or alignment based on their dress style. In this context, for candidates from marginalized groups like Indigenous people, traditional clothing holds cultural significance as it serves as a lively expression of belonging, participation in ceremonies, and resistance.

Objective

This exploratory study aims to empirically demonstrate whether dress manipulation in a picture affects the perceived competence of equally qualified candidates for a position like a software developer in which this cue should not be crucial.

Method

We conducted a quasi-experiment based on a survey. It involved job candidates (photo models) and participants (evaluators) from IT companies located in Ecuador. The analysis was performed by fitting a linear mixed-effects (LME) model based on dress style, gender and race/ethnicity of the candidates as well as evaluators’ gender and experience in hiring. Also, a thematic analysis was conducted.

Results

Findings show that dress manipulation hardly influences the evaluators’ evaluation of candidates’ competence, as no statistically significant differences were found in our sample. Most of the unexplained variance (64.461%) stems from variability in scores across evaluators. Likewise, the thematic analysis revealed notable evaluator discrepancies indicating varying judgments and outcomes that suggest idiosyncrasies, which are not noise or error.

Conclusions

This study demonstrates the value of contextual factors —such as gender, race/ethnicity and cultural background— in software engineering studies and calls for valuing individual software developers and their human aspects. Perceived competence extends beyond hiring situations as it can influence initial trust and cooperative behaviors among software development team members when interacting with unfamiliar collaborators.

Explore related subjects

Discover the latest articles and news from researchers in related subjects, suggested using machine learning.

- Cultural Psychology

- Intercultural Communication

- Race and Ethnicity

- Social Perception

- Stereotype

- Cross-Cultural Management

1 Introduction

Recruiting the right people becomes a critical activity in software development since it is not only a technical and knowledge-intensive activity but also a human-centric and collaborative one that could benefit from the social attributes of the people involved in it (Vasilescu et al. 2015; Dagan et al. 2023). Indeed, standardized inclusive recruitment practices are a critical component for high-performing teams to achieve excellence (Coleman et al. 2021). The benefits of diversity encompass improving organizational reputation and performance, driving innovation through diverse perspectives, and fostering productivity and creativity (Kanij et al. 2022). However, diversity is multidimensional, emerging from a variety of sources, including demographic attributes that differentiate people such as gender, age, and ethnicity, as well as other dimensions such as role, expertise, and personality traits (Menezes and Prikladnicki 2018; Silveira and Prikladnicki 2019). Studies have revealed the challenges faced by software developers from underrepresented groups (Baltes et al. 2020; Rodríguez-Pérez et al. 2021; van Breukelen et al. 2023; Oliveira et al. 2024), including explicit bias (Blincoe et al. 2019), implicit bias (Wang and Redmiles 2019; Matthiesen et al. 2023), and discrimination (Thomas et al. 2018; Campero 2021, 2023).

In this context, there are limited studies on the hiring process that have been conducted with a focus on diversity (Lunn and Ross 2021). Filkuková and Jørgensen (2020) explored how people perceive the same individuals differently based on their facial expressions in a photo and how this perception affects the perceived competence of candidates for the position of software developer. However, global evaluations have the potential to alter perceptions of even relatively unambiguous stimuli about which an individual has sufficient information to render a confident judgment (Nisbett and Wilson 1977). Overall, employers are increasingly using social media screening, also called cyber vetting, as part of their employment process (Jacobson and Gruzd 2020). Even in countries like the United States and Canada where attaching a photo to a job application is not recommended, pictures that candidates have on the website of their previous employer or online social networks like Instagram, Facebook, Twitter, and LinkedIn can still affect a hiring decision (Filkuková and Jørgensen 2020).

Previous research has also shown that a job application can have different success rates depending on the candidate’s picture regardless of whether the picture is in the CV or available on a social network (Baert 2018) since people can make judgments based on a photo after a minimal exposure time of 100 ms (Willis and Todorov 2016). Although technical training is relevant, it does not, in itself challenge race- and gender-biased preconceived notions of what technically skilled practitioners look like and what constitutes “merit” in the tech workplace (Abbate 2021). These biased standards have served to perpetuate disparities in both hiring and promotion practices.

Overall, hiring is not a value-free and neutral process in which the best candidate is selected (Jacobson and Gruzd 2020). Hiring discrimination has been well-documented based on social identities like gender, race, ethnicity, age, disability, and religion (Baert 2018). Particularly, in the case of male-dominated occupations like software developer, women often change their dress to adopt a more masculine or gender-neutral look to conform to standards of belonging and signal competence (Alfrey and Twine 2017; van Breukelen et al. 2023). Indeed, clothing has been recognized as an element of personal branding that can help software developers advance their careers (Nagy 2019). It suggests that dress manipulation could impact impression formation within this occupation. Despite studies have examined the perceived competence of women (Imtiaz et al. 2019; Veit et al. 2022), little is known specifically about the impact of stereotypes on the perceived competence of software professionals from other underrepresented groups.

To the best of our knowledge, previous studies have not explored the effect of dress manipulation on the perceived competence of software practitioners from underrepresented groups like Indigenous populations. This study is an execution of our registered report (Sánchez-Gordón et al. 2023).Footnote1 We conducted a quasi-experiment based on a survey involving software developers (evaluators) tasked with rating the perceived competence of 24 job candidates based on their photos. Candidates were software developers who self-identified as Andean Indigenous or Mestizo (mixed race). We controlled for candidates’ age by focusing on a specific age range, as well as, their nationality and cultural/historical context (Martin et al. 2017; Harris 2021) by focusing on IT companies located in Ecuador for both evaluators and candidates. To manipulate dressing style, we utilized traditional clothing, as it serves as an identity marker that uniquely identifies Andean indigenous people and provides non-indigenous people like Mestizos with a readily available means to classify indigenous individuals as out-group members. Therefore, dress style manipulation included traditional Andean and non-traditional clothing as treatment. The following research question guides this study:

RQ: Does a choice of dress style in a photograph influence software professionals’evaluations of an Ecuadorian software developer’s competence?

Evaluators’ unconscious biases can lead to decisions that harm candidates from underrepresented groups and benefit candidates who fit the majority-based stereotype. In countries with large urban indigenous populations, such as Peru, Ecuador, Bolivia, and Mexico, the percentage of indigenous persons occupying high-skill jobs, like software developers, is consistently smaller than the percentage of non-indigenous people (Freire et al. 2015). Mestizo is the majoritarian group in Latin America, in particular, Ecuador has 77% (INEC 2023) with high levels of Native American ancestry (up to 51%), followed by less European (up to 33%) and African (up to 13%) ancestry (Yang et al. 2005). Although Latin America has a long history of discrimination against Indigenous people since the beginning of the colonial period (United Nations 2007), discrimination based on phenotype -individual’s appearance like height, hair color, skin color, and eye color- is an underemphasized aspect of raceFootnote2 in the region, particularly in areas with a high Indigenous population (Ravindran 2021). It suggests that Mestizos can share similar facial traits with those of Indigenous ancestry which results in a limited ability to distinguish between both groups.

Previous studies also show that clothing is a powerful non-verbal communication tool that activates stereotypes that can be either positive or negative (Livingston and Gurung 2019; Wang et al. 2022). For example, grooming and dress style can influence evaluator appraisals of competence (Wang et al. 2022). These biases can be extremely powerful in changing perceptions especially those based on race/ethnicity as individuals tend to have strong reactions to “stereotypical clothing” which is clothing associated with specific population groups (Livingston and Gurung 2019). In the case of Andean Indigenous people, they wear traditional clothing for many reasons, e.g., to express belonging, enter ceremonies, and show resistance. Therefore, our motivation for this RQ is to understand whether manipulating dress style affects the software professionals’ perceptions of software developers’ competence. We would like to test the null hypothesis 1 ({H}_{0}^{1}): Evaluators perceive no difference in competence between job candidates wearing traditional and non-traditional clothing in professional photos. The alternative hypothesis 1 ({H}_{a}^{1}): Evaluators perceive differences in competence between job candidates wearing traditional and non-traditional clothing in professional photos.

Apart from gender discrimination, previous studies show that stereotypes against minorities in the tech industry based on race/ethnicity are persistent since there has been almost no change in racial equality and ethnic diversity in the industry (Chattopadhyay et al. 2021). At the core of systemic inequalities such as racism, the cognitive and affective responses of stereotypes and traits are present (Harris 2021). Often, the reasons for stereotypes and their inaccuracies are related to cumulative cultural evolution rooted in historical conditions (Martin et al. 2017; Harris 2021) Therefore, we would like to explore the candidates’ gender and race to test the null hypothesis 2 ({H}_{0}^{2}):The gender and race of candidates do not moderate the effect of evaluators’ perceptions of candidates’ competence. The alternative hypothesis 2 ({H}_{a}^{2}): The gender and race of candidates moderate the effect of evaluators’ perceptions of candidates’competence.

In addition to implicit associations, an evaluator may have related to race and gender which can unintentionally influence the way candidates are perceived and evaluated, Filkuková and Jørgensen (2020) found that women were perceived as less competent than men, particularly among participants lacking prior experience in hiring, driving this effect. Moreover, we would like to explore whether shared gender group membership shapes the extent to which evaluators perceive candidates’ competence. Therefore, similarly to {H}_{0}^{2}, we would like to explore evaluators’ gender and hiring experience to test the null hypothesis 3 ({H}_{0}^{3}): The gender and hiring experience of evaluators do not moderate the effect of evaluators ’ perceptions of candidates’ competence. The alternative hypothesis 3 ({H}_{a}^{3}): The gender and hiring experience of evaluators moderate the effect of evaluators’ perceptions of candidates ’ competence.

The contribution of this exploratory study is based on the participation of software professionals from local IT companies who evaluate the competence of a set of candidates for the job of a software developer, specifically 24 faces of software professionals.

The remainder of this paper is organized as follows. Section 2 presents background and related work. Section 3 presents the execution of the plan presented in our registered report, while Section 4 reports the data analysis and results. In Section 5, we discuss the deviations and limitations of this study and outline the key implications that our study has for the research community. Finally, Section 6 draws some conclusions. To facilitate replication and future work in the area, we have prepared a replication package (Sánchez-Gordón et al. 2024), which includes 197 valid responses of 432 responses received, the scripts for the quantitative analyses, the qualitative scheme code, and the survey materials.

2 Background and Related Works

The study of behavioral aspects in software engineering has been a subject of interest for researchers since the early days of the discipline (Curtis 1984). However, the term Behavioral Software Engineering (BSE) was coined years later along with a definition proposed by (Lenberg et al. 2015). More recently, Fagerholm et al. (2022) conducted an analysis exploring the fundamental role that cognition plays in most software engineering activities. These authors emphasized that social cognition is a cross-cutting concept related to “the cognitive activity that accompanies and mediates social behaviour, including acquisition of information about the social environment, organisation and transformation of this information in memory, and its effects on the individual’s behaviour”. Despite the current fragmentation in the state of the art due to a lack of use of cognitive concepts, Fagerholm et al. (2022) found that social cognition has gained attention in seven publications between 2008 and 2020 but these lack specific psychological theories. Apart from these publications, as mentioned above, Filkuková and Jørgensen (2020) conducted the closest study to our registered report. They took three photos of 20 software professionals using the following facial expressions: a smile, a neutral expression, and a thinking expression, with a hand touching one’s chin. A total of 238 employees from IT companies were assigned to one of the three sets of photos to evaluate the candidates’ competence for software developer jobs. This study used a single item based on a 7-point scale (1 = not competent at all; 7 = very competent). Their findings suggest that application photos have an impact on one’s perceived competence of software developers but competence is not conceptualized by drawing from cognitive science.

Research on social cognition has shown that people perceive social groups and individuals based on perceived warmth and competent they are, a model known as the Stereotype Content Model (SCM) (Fiske et al. 2002). These two dimensions of social judgment play a significant role in how perceivers form impressions of targets and shape their social interactions. Warmth reflects the survival need of knowing the intentions of targets (positive or negative, perceived competition) whereas competence is the consequent ability to enact those intentions (status) (Fiske et al. 2007). From an evolutionary point of view, warmth is judged before competence, and those dimensions not only impact impression formation but also underlie group stereotypes formed by combining high versus low levels of these two dimensions. However, some studies (Belmi and Pfeffer 2018; Min and Hu 2022) have shown that preferred dimensions are context-dependent. Since software developers are often reviewed and compensated based on their team’s performance, reward interdependence is expected to affect their cognition, leading them to value competence over warmth (Belmi and Pfeffer 2018). In support of that, a survey on occupational stereotypes found that systems and software developers were perceived by professionals, mostly recruiters, as having high competence but low warmth (Strinić et al. 2022). Overall, social groups are perceived as competent if they are high in status, e.g., educationally or economically successful (Fiske et al. 2002). In this sense, previous research has revealed that the race/ethnicity of people moderates such effects for Black Americans (Fiske et al. 2009). It is also expected that this stereotype, called subtyping by class, plays a role in other groups who are not high in status like Andean Indigenous.

Perceived competence has been measured in different context. Cuddy et al. (2004) examined how female subtypes are subjected to affectively mixed prejudices. They developed two four-item scales that assessed perceived competence (capable, efficient, organized, skillful) and perceived warmth (good-natured, sincere, warm, trustworthy) on a seven-point scale ranging from “not at all” to “extremely”. Later, Cuddy et al. (2009) proposed a warmth and competence survey to examine the SCM model across cultures. This survey uses a five-point Likert scale (1 “not at all”–5 “extremely”) for warmth (friendly, warm, good-natured, and sincere) and competence attributes (competent, confident, capable, and skillful). More recently, Strinić et al. (2022) examined occupational stereotypes related to preselected occupations within a professional sample that included systems and software developers. They used an adapted competence subscale from Cuddy et al. (2009) by replacing “talented” and “ambitious” with “confident” and “capable” as proposed in their earlier study Strinić et al. (2021). Moreover, Wang et al. (2022) explored the interaction effect of grooming and dress style on hirability. Building on Cuddy et al. (2004) and Correll et al. (2007), they developed a composite measure of perceived competence using seven-point scales that ranged from “not at all” to “extremely” capable, efficient, skilled, intelligent, independent, self-confident, aggressive, and organized.

Understanding real-world judgments calls for studying impressions of more realistic and complex stimuli. In this sense, most psychological literature suggests that people draw inferences based on facial appearance judging mainly based on gender, age and ethnicity (Van Vugt and Grabo 2015). Beyond the target face, another feature of the target identity that deserves consideration is the target’s dress. Research has shown that dress affects behavioral responses in crucial aspects such as employment opportunities and occupational success (Konrath and Handy 2021; Wang et al. 2022). These biases can be extremely powerful in changing perceptions specially those based on race/ethnicity as individuals tend to have strong reactions to “stereotypical clothing” which is clothing associated with specific population groups (Livingston and Gurung 2019). Target face and target dress are intimately linked but markedly different, since target’s dress is more state-like and malleable whereas the target face is more stable, trait-like, and resistant to change (Hester and Hehman 2023). Perceptions of individuals from different cultural backgrounds are associated with specific cues that may reinforce stereotypes and influence trust. For example, one perceiver might have the cultural knowledge needed to associate wearing traditional clothing as a signal of indigenous identity. Because they hold positive attitudes toward people who identify as “Indigenous” they form a positive first impression of the target. On the contrary, another perceiver might hold negative stereotypes like subtyping by class. In this scenario, this perceiver endorses negative stereotypes about indigenous identity, but due to the lack of recognizable traditional clothing and phenotypic similarities between the targets, these stereotypes do not come into play. As a result, the perceiver relies on alternative cues to judge the target.

3 Plan Execution

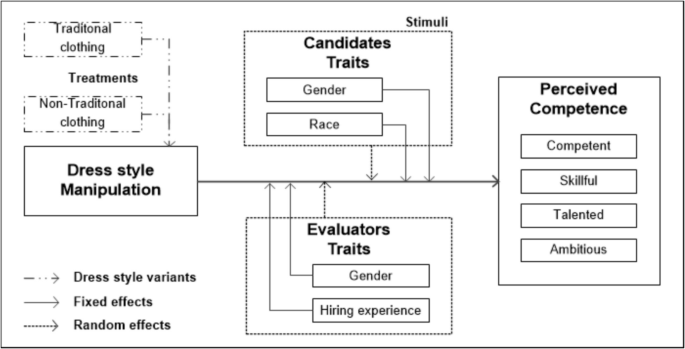

The plan execution followed our registered report (Sánchez-Gordón et al. 2023) with some deviations, which are described in Section 5.2. To guide and focus our study, we develop a theoretical model as shown in Fig. 1. Competence is a second-order construct since it encompasses four dimensions: competence, skillfulness, talent, and ambition as proposed by Strinić et al. (2022). Then, we conducted a survey study to collect data that was subsequently analyzed using mixed models that included both fixed effects and random effects.

Research model of this study adapted from (Sánchez-Gordón et al. 2023)

3.1 Participants

We considered a convenience sample strategy to collect responses from employees of IT companies located in Ecuador since cultural background influences the formation and development of stereotypes. We contacted them by phone and then an email invitation was sent. Almost all agreed to collaborate in this study by extending email invitations to their employees. They comprise around 200 employees. Anticipating a low response rate, we went beyond the original recruitment strategy outlined in our registered report. We sent additional email invitations to a targeted group of 735 former students from the Universidad Técnica del Norte located in the northern region of Ecuador. In addition, we sent invitations to 65 personal contacts located in Ecuador. As a result of these efforts, we sent 1,000 invitations and received a total of 432 responses (a response rate of approx. 31%).

To reduce the influence of social desirability bias, participation was voluntary and anonymous, with no cash incentives provided. We also aimed to minimize the likelihood of purposefully misreporting by informing participants beforehand that the data would be solely used for research purposes.

3.2 Survey

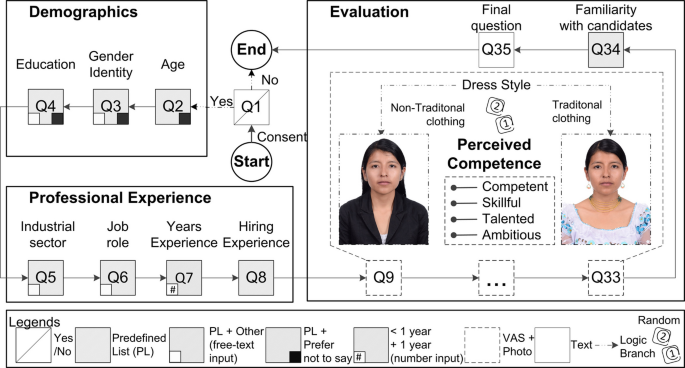

As per the registered report, we created an anonymous questionnaire self-reported web-based questionnaire using a survey tool called QuestionPro. Questions were grouped into three main parts i) demographic information, ii) professional experience, and iii) evaluation. Figure 2 provides an overview of the questionnaire, while the complete survey is available in the replication package (Sánchez-Gordón et al. 2024).

Overview of the questionnaire and an example of stimulus material (non-traditional/traditional clothing) along with the Visual Analogue Scale (VAS) used to measure candidates’ perceived competence

The first two parts included single-choice selection questions based on predefined lists (PL, see more details in Table 1). The first part is related to basic demographic information concerning participant age (Q2), gender identity (Q3), and education (Q4). These questions included the option “prefer not to say”, while the last two also offered a free-text input for the “other” option in education and the “I prefer to describe myself” option for gender identity. The second part is related to the professional experience including job role (Q6), years of experience (Q7) and the evaluator’s experience in hiring (Q8; 3-point scale: Occasionally, Frequently and Never). Different from the registered report,Footnote3 we formulated a question about the industrial sector of the organization that participants work in (Q5).

In the third part of the survey, a stimulus based on photographs of 24 candidates was included to evaluate candidates’ perceived competence. This approach was inspired by previous research on the impact of three distinct facial expressions on the perception of competence in software developers (Filkuková and Jørgensen 2020). Each candidate’s perceived competence (Q9-Q33) was evaluated based on their photograph and a Visual Analogue Scale (VAS), which provided continuous rating options as shown in Fig. 2. The VAS comprised a horizontal line with descriptive anchors at opposing ends ranging from “not at all” to “extremely” (100 points). Participants were randomly assigned across treatments and assessed all 24 candidates across four dimensions: competence, skillful, talent, and ambition.

To understand the effect of familiarity with candidates, we included a question (Q34; 3-point scale: no, I don’t remember, and “yes”) to ask participants if they knew any of the candidates. Moreover, we went beyond what was outlined in the registered report and included the following final open-ended question (Q35): “Would you like to add some additional information or share some other perspective on how to evaluate candidates for software developer positions?”.

Stimuli Creation

All 24 photo models are employees of IT companies located in Ecuador. They were former students from the Universidad Técnica del Norte who did not receive the invitation email to participate as evaluators in this study. To constitute a balanced sample, models were selected based on race (Indigenous and Mestizo) and gender (man and woman). This resulted in four groups of six models each (6 × 4). To mitigate the effect of age in hiring, all photo models were within the age range of 22 to 34 years, except for one of the indigenous candidates who was 44 years old. To manipulate the dress style of the candidates, all models appeared in both experimental conditions (traditional Andean/non-traditional clothing). Moreover, the use of traditional accessories was restricted solely to traditional clothing to reduce possible biases. Each picture is a facial photograph, head and shoulders of the models only, and portrait orientation as shown in Fig. 2.

The photos were taken in a room without windows, ensuring consistent lighting conditions throughout. Moreover, all models were instructed to look straight at the camera and then adopt a neutral expression since we are only interested in gender and race comparisons and any other differences in evaluating different models were not considered. All pictures except for two indigenous candidates were taken in the same place at a specific time of the day by the same camera digital with a maximum resolution of eight megapixels. To ensure consistency for the remaining two candidates, two additional locations with similar conditions were established although another camera was used. After the photo sessions, the models were given digital copies of their images, but they were not informed of this beforehand.

Pilot Test

We created a checklist focused on the clarity, completeness, relevance and response time of the survey. Then, we invited three software professionals, rather than the two specified in our registered report, to respond to the survey and provide feedback based on the checklist. We analyzed their responses and made some minor changes to address their feedback. Then, we also deviated from the registered report by inviting two more professionals to test the improved survey. As a result, no further modifications were necessary following their feedback. These responses were not included in the analysis of the results.

Data Collection

The data collection process was conducted between February and April 2024. Before participation in the online survey, participants were asked to review a consent document and “agree” to the contents of it. Then, they were randomly assigned across treatments, i.e., half of them to each one: experimental group and control group. The control group received the candidates’ photos wearing non-traditional clothing whereas the experimental group received the candidates wearing traditional clothing. Next, participants were informed that all the candidates they would see were equally qualified for a position as a software developer. After that, the images of 24 candidates were presented along with the scale to rate their perceived competence.

The time to do each evaluation was not limited so participants had as much time as needed to complete it. However, participants cannot fall back to the previous picture once they had moved on. To ensure that all variables, except for the candidates’ clothing, remained constant between the two groups, we kept a consistent sequence of candidates. This means the same candidates were presented in the same order. For instance, a picture of the indigenous male [C1] was always followed by a photo of the Mestizo female [C2], then the Mestizo male [C3], and finally the Indigenous female [C4]. Each candidate was thus assigned a unique identifier, ranging from [C1] to [C24].

4 Data Analysis and Results

Following the data collection, we proceeded to the analysis stage which was performed only for those valid responses according to the criteria established in the data cleaning. This resulted in 197 valid responses which provide an overview of both demographic information and competence scores within our sample. Then, we prepared the data for model development and tested our hypotheses by fitting the model.

Data Cleaning

We deleted all the incomplete responses from the 432 responses received. As a result, there were 240 complete responses (a completion rate of approx. 55%). Note that, due to some participants dropping, the percentage of participants could not be kept the same in the control (124, 52%) and experimental (116, 48%) groups which was our initial intention. Fifteen survey responses were removed from the dataset, eight because they were submitted by individuals located in other countries, and seven because respondents are not currently working in the software industry, including four students. Although six responses were received from scholars, they identified themselves as software professionals, thus they were retained. Different from the registered report, twenty-eight responses were removed based on their average value scores, which were either below or above 10 (see details in 5.2 section). The final comment in some responses served to further justify their exclusion. Additionally, participants who reported less than one year of experience or indicated familiarity with certain candidates were retained for further analysis. This resulted in a total of 197 responses to our analysis.

We also received 89 answers to the final comment, an open-ended question, but we removed 11 of them since they indicated that no additional information needed to be provided, except one answer that lacked context to understand it. Therefore, we leave 78 answers for analysis.

Demographic Information Overview

All 197 participants self-identified as current software professionals. Table 1 shows an overview of the frequency of responses. The majority of participants identified as men (n = 159, 81.7%), while 38 (19.3%) identified as women. Note that while the percentage of female representation is relatively low, it is close to the average percentage (20.8%) in the Faculty of Engineering and Applied Sciences (FICA) at the Universidad Técnica del Norte, according to the last academic year (2023–2024) official statistics (Universidad Técnica del Norte 2024). Overall, women remain underrepresented in the science, technology, engineering and mathematics (STEM) workforce, making up only 28.2% of the total according to the Global Gender Gap Report 2024 (World Economic Forum 2024). The age of most participants (139) ranged from 26 to 45 years old. Moreover, most of the participants reported an engineering degree (130) followed by a master’s degree (55), and the remaining (12) reported having other degrees.

Most participants (163) reported having more than one year of experience (mean = 7.59, SD = 6.39, range, 1–35) with one participant’s data missing, and 34 reporting less than a year. Looking into the different industrial sectors, 76 participants worked in ICT, followed by Financial (33), Education (23), Government (10), Telecommunications (8) and other sectors (47) such as FinTech, Pharmaceutical, and Manufacturing. The most reported job role was software developer (88), although analyst (37), project manager (29), tester (7), and architect (5) were also represented. In the category others (31), we identify middle managers (8), academics (6) and other ICT-related roles (17).

Among the respondents who have hiring experience, 74.11% (146) reported occasional (128) and frequent (18) involvement, while 25.89% (51) stated they had never been involved. Finally, the majority of the respondents (111) reported that candidates did not seem familiar to them, while the remaining (86) reported they were somehow familiar with the candidates.

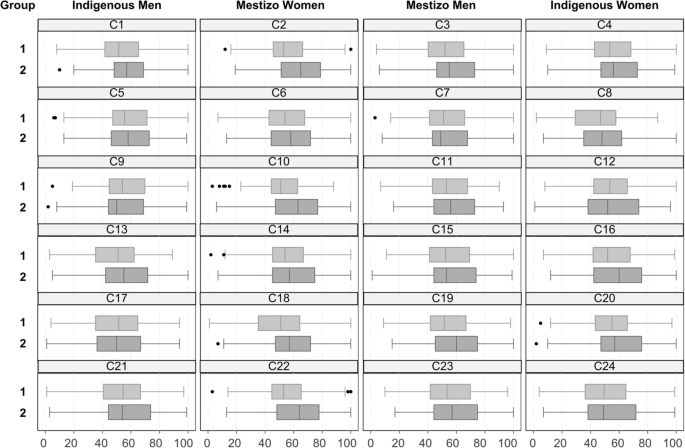

Competence Scores Overview

The competence data points consisted of 4,728 scores (n = 24 × 197) for each item as shown in Table 2. The control group (1) received non-traditional clothing, while the experimental group (2) received traditional clothing as treatment. The control group comprises 104 evaluators (n = 2,496) whereas the experimental group contains 93 evaluators (n = 2,232). On average, candidates were perceived as 54.29 competent (SD = 22.20, range, 0–100), 53.76 skillful (SD = 21.79, range, 0–100), 54.02 talented (SD = 22.15, range, 0–100) and 56.36 ambitious (SD = 22.90, range, 0–100).

Table 3 shows the perceived competence by gender of both the evaluators (higher-order) and candidates (lower-order). Overall, male evaluators provided more favorable competence scores. In addition, female candidates in the experimental group were perceived as more competent than male regardless of the evaluators’ gender. However, female evaluators perceived female candidates as slightly less competent than male candidates in the control group while the opposite is observed in the experimental group, differences in means are −1.2008 and 1.9843, respectively. The range of perceived competence values is displayed in the “Min.” and “Max.” columns.

Figure 3 depicts the boxplots of perceived competence for candidates, [C1] to [C24], grouped into four categories: Indigenous Male, Mestizo Female, Mestizo Male, and Indigenous Female. Evaluators assessed the candidates’ competence under one of two treatment conditions: the control group (1), linked to non-traditional clothing, and the experimental group (2), linked to traditional clothing. An unexpected finding was that 22 candidates received a more favorable competence score in the experimental group than in the control group, except for an indigenous man [C9] and an indigenous woman [C12]. However, note that the differences in means were less than one for [C12] and two other candidates [C7, C15], with values of −0.30, 0,23, and 0.76 respectively. For [C9], the difference in means was −2.95 indicating that his perceived competence might have been affected by another contextual factor like age (44 years), despite not receiving the lowest scores in any of the treatment conditions. Moreover, we observe that three mestizo women [C2, C10, C22] received the highest scores in the experimental group.

Box plots of perceived competence for the 24 candidates in the between-group analysis. The treatment for the control group (1) is non-traditional clothing and traditional clothing for the experimental (2) group

As the same target (i.e., the candidate depicted) was presented in our study as static (photo) stimuli in both experimental conditions, we expected that observed ratings (competent, skillful, talented, ambitious) given to the same target (candidate) across different evaluators (all evaluators are different variables with observations competent, skillful, talented, ambitious) should be highly correlated but correlations appeared rather low (from on average r = −0.004, p = 0.3454 to on average r = 0.09, p < 0.001), while the correlations of observed ratings given by the same evaluators across candidates (all candidates are different variables with observations competent, skillful, talented, ambitious) vary considerably (from on average r = −0.004, p = 0.2743 to on average r = 0.925, p < 0.001).

4.1 Statistical Modeling Analysis

Data Preparation

We deleted the consent form confirmation (Q1) field since respondents could not continue without checking these boxes (the response is always “YES”). The raw data was coded into a common quantitative coding scheme available online in the replication package (Sánchez-Gordón et al. 2024). Table 4 provides a short description of the coding scheme including the factor name, the variable name used in our model, the survey section and the related survey questions, ID encoding used in our model, potential values, and their respective types. Specifically, {\mathbb{N}} denotes a natural number, {\mathbb{O}} indicates ordinal data, and {\mathbb{Z}} indicates an integer for categorical data.

To facilitate the analysis, the following three categories were regrouped. Experience (Q7) was regrouped to less than 1 year (0) and more than 1 year (2). Hiring experience (Q8) had 3 categories and was regrouped to “no” (no (1) and I don’t remember (2), 1) and “yes” (yes (3), 2). Familiar (Q34) had 3 categories and was regrouped to “no” (never, 1) and “yes” (occasionally (2) and frequently (3), 2). After gaining some insight concerning the data and the factors, we focus on statistical model development.

Model Development

Standard linear regression models assume independence between the measurements, which is not suited for the analysis of the data with hierarchical structures. To resolve this issue mixed models were developed (Stroup 2012). These models are particularly well suited for the analysis of data with repeated measurements on clusters that involve two sources of variation, within and between clusters (Demidenko 2013). They can also control correlations between measurements (Demidenko 2013). A mixed-model approach seems appropriate as our data represents a repeated-measurement design with each evaluator assessing multiple candidates and each candidate being assessed by multiple evaluators. According to Stroup (2012), a mixed model shares the general form of the Eq. (1):

where \beta is a vector of fixed-effect parameters and \text{X} is the design matrix for fixed effects whereas \delta \sim \text{MV{N}}\left(0,\Sigma \right) is a vector of random-effects and {Z} is the design matrix for random effects, while \varepsilon \sim \text{MV{N}}\left(0,\text{I}\cdot {\sigma }_{\varepsilon }^{2}\right) are random errors. Further, it is assumed that all \delta and \varepsilon are independent.

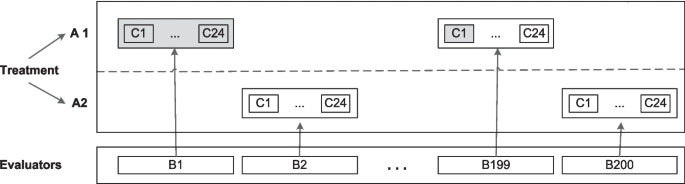

In this study, the factor related to the treatment is a fixed effect, i.e., dress style. Figure 4 shows the plot plan, with evaluators (B) nested within a factorial combination (repeated measures) of the other two factors, treatment (A) and candidate (C), i.e., AxB(A)xC. This combination of crossed and nested factors is a split-plot design (Stroup 2012). Perceived competence represents a two-treatment paired design (traditional and non-traditional clothing) where the observation unit is not distinct from units of replication, i.e., the candidates and the nested effects. The interaction between a fixed effect and a random effect is random due to the inclusion of a random component.

Plot plan for the addressed design of the experiment (200 evaluators are exemplified)

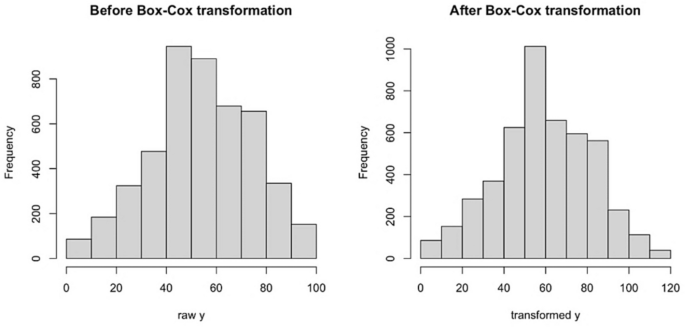

Due to the strong right skewness of the collected average scores (see Fig. 5), the response variable was the Box-Cox transformed average competence (Avg_competence), defined as {\gamma }_{i}=\text{BoxCox}(\frac{1}{4}{\sum }_{j=1}^{4}{y}_{ji},\lambda =1.03), where optimal \lambda was found using boxcox function from MASS (version 7.3–60.2) package in R (Venables and Ripley 2002; Ripley et al. 2024). The Box-Cox function is a power transformation that makes the data closer to the normal distribution, which is assumed in the linear mixed effect model.

Histograms of the raw and transformed outcome variable

Furthermore, the vector of fixed effects {X}_{i} for observation i\in \{1,\dots ,n\} consists of variables {{\tau }_{i},c}_{1i},{c}_{2i},{c}_{3i},{b}_{1i},{b}_{2i}, defined in Table 4, as well as the two and three-way interactions between components of {{\varvec{c}}}_{{\varvec{i}}} and {{\varvec{b}}}_{{\varvec{i}}}. The vector of random effects {\rm Z}_{i} is chosen for the addressed split-plot design and consists of a random intercept for all evaluators based on {b}_{0,i}, a random intercept for all candidates based on {c}_{0,i}, a random effect of all candidates based on {c}_{0,i} for each level of treatments {a}_{i}, and random slope for accounting for the order of candidates nested within evaluators {c}_{0,i}|{b}_{0,i}.

The resulting special case of the Eq. (1) is associated with the linear mixed effect model defined for simplicity per observation i\in \left\{1,\dots ,n\right\} as follows (2):

where \beta=({\beta }^{\tau },{\beta }^{c}, {\beta }^{b},{\beta }^{cc},{\beta }^{bb},{\beta }^{ccc},{\beta }^{ccb}) is the vector of fixed effects, \varepsilon \sim \text{MV{N}}\left(0,\text{I}\cdot {\sigma }_{\varepsilon }^{2}\right) are random errors, \delta \sim \text{MV{N}}\left(0,\Sigma \right) are random effects. Further, \varepsilon and \delta are all pairwise independent. The model was implemented using the open-source statistical software R 4.3.2, utilizing the lmer (R package lmerTest, version 3.1–3) function for Linear Mixed Effects (LME) models (Kuznetsova et al. 2020). This corresponds to the following lmer formula (3).

where Avg\_competence is the dependent variable; Treatment, familiar, cgender, crace, hiringExp and gender are fixed effects; (1 | EID) and (1 \left|CID\right) are the random effect for each evaluator and candidate, respectively, whereas (1 | CID:Treatment) is the random effect of all candidates for each level of treatment. Moreover, (0 + CID | EID) is read as “no intercept and candidate by evaluator”. It is a random slope for all candidates, grouped by each evaluator.

The results for fixed effects are summarized in Table 5. The main effects of the predictors were assessed. All variables were tested to see if they have an individual statistical significance using a t-test. The obtained statistical results for fixed effects possessed degrees of freedom between 96 and 4319, and the only significant on a 0.05 level of significance effect appeared to be the intercept with a t-statistics of 2.914 on 343 degrees of freedom corresponding to a p-value of 0.004. Thus, the results indicate a non-significant treatment effect at the 0.05 significance level and non-significance for all other addressed fixed effects.

The summary of the random effects is given in Table 6, indicating that most of the unexplained by fixed effects variance is due to high unexplained by the fixed effects variability of scores across the evaluators accounting for 64.461% of total variance in the data. The residual variance (33.282%) represents unexplained variability in the outcome that is not accounted for by the fixed and random effects in the model. Other sources of randomness have lesser contributions to the total variance.

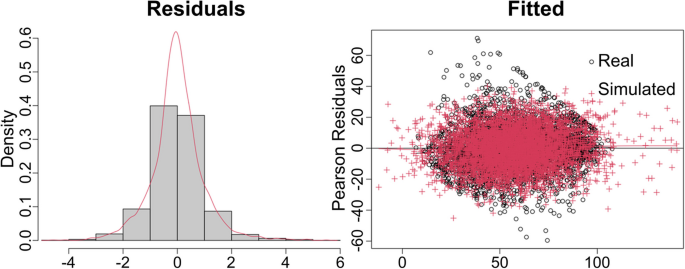

Diagnostics of the addressed model have also been performed. The residuals are symmetric and bell-shaped, however exhibit somewhat heavier than standard normal tails. They are also slightly heteroskedastic, yet we consider the model acceptable given the evaluated p-values not contributing to significant effects anyway. Moreover, when data was simulated from the fitted model, and then refitted to the original model similar behavior of the residuals was obtained, see Fig. 6. Likewise, ANOVA analysis against the null model with the same random effects but without any fixed effects gives a p-value of 0.6873 (ANOVA in R; χ2(26) = 22.025), corroborating the joint non-significance of fixed effects.

Model diagnostics. On the left, black is the histogram, red is the density plot of the standardized residuals. On the right, black are real data residuals, and red are residuals based on simulation from the fitted on real data model obtained through sim.residplot function from GLMMmisc (version 0.1.1) R package (Johnson 2016)

To account for the model misspecifications and robustness of the drawn conclusions regarding significance of the fixed effects and selection of the random effects, extended model selection of alternative structures of the random effects combined with optimal selection of the fixed effects has also been performed. To do so, we used backward stepwise selection of random-effect terms followed by backward selection of fixed-effect terms in linear mixed models using likelihood ratio tests with a significance level of 0.05 through step function in lmerTest (version 3.1–3) R package (Kuznetsova et al. 2020). Here, the extended full model corresponds to the following R formula (4).

and the final selected model was (5)

essentially confirming our conclusions regarding non-significance of the fixed effects and confirming the choice of random effects. Also, it is worth mentioning that as another robustness check, we ran the analysis without the Box-Cox transformation. This analysis confirmed all conclusions obtained with the transformed data and is available in the replication package.

Hypotheses Testing

When fit with the collected data, the extended model (4) did not propose significantly better than the full model in (3) in terms of likelihood ratio tests (ANOVA in R; χ2(7) = 2.2867, p = 0.9423). In turn, the full model (3) did not perform better than the null model (5) in terms of the likelihood ratio test (ANOVA in R; χ2(26) = 22.025, p = 0.6873).

For {H}_{0}^{1}: Evaluators perceive no difference in competence between job candidates wearing traditional and non-traditional clothing in professional photos, thus the {H}_{0}^{1} hypotheses cannot be rejected according to the t-test for the addressed linear mixed effect model. Specifically, the treatment effect is 4.692 (SD = 2.781, t = 1.687) corresponding to a p-value of 0.093. Power analysis run by powerSim function from simr (version 1.0.7) R package (Green and MacLeod 2016) estimates power for predictor Treatment to be 55.00%, 95% confidence interval 31.53%—76.94%.

For {H}_{0}^{2}: The gender and race of candidates do not moderate the effect of evaluators’perceptions of the candidates’competence, thus the {H}_{0}^{2} hypotheses cannot be rejected according to the t-test for the addressed linear mixed effect model. Specifically, the main effect of crace is 7.419 (SD = 6.347, t = 1.169) and of cgender is 11.022 (SD = 6.341, t = 1.738) corresponding to p-values of 0.245 and 0.085 respectively. Furthermore, none of the interactions involving crace or cgender are found significant, see Table 5 for detailed information about the effects, their standard deviations, t-statistics and p-values.

For {H}_{0}^{3}: The gender and hiring experience of evaluators do not moderate the effect of evaluators’perceptions of the candidates’competence, thus the {H}_{0}^{3} hypotheses cannot be rejected according to the t-test for the addressed linear mixed effect model. Specifically, the main effect of gender is −10.829 (SD = 11.886, t = −0.911) and the main effect of hiringExp is −10.289 (SD = 15.803, t = −0.651), corresponding to p-values of 0.363 and 0.516. Furthermore, none of the interactions involving race or hiringExp are found significant (see details in Table 5).

Thus, all main hypotheses could not be rejected. Although we ran the model with the hiring experience variable disaggregated, the results of the hypotheses testing were no different. Moreover, {{\varvec{H}}}_{0}^{2} and {{\varvec{H}}}_{0}^{3} are not rejected in any of the subgroups defined by interactions of order 2 and 3. The results demonstrate that we could not find associated hiring biases to be significant, corroborating in favor of fairness in the formation of impressions about competence during candidate evaluations within the addressed population. However, caution should be exercised as the conclusions are based on the assumption that the responses of the participants are unbiased, the sample of participants is representative of the hiring personnel in the population, i.e. both random participants and invited as well as non-response are not associated with the biases in terms of evaluation. Finally, we assume that non-completed surveys are also orthogonal to the biases in evaluation.

4.2 Thematic Analysis

Thematic analysis was chosen for data analysis in this study since we aim to identify and analyze emerging patterns within the collected data. The unique identifier (EID) was employed for the 78 valid responses containing text in the final question, as each text corresponds to a single participant (evaluator). After reading the data, we identified specific text segments and labelled them. By applying constant comparison, we compared emerging codes with earlier codes and then the related codes were categorized into themes to reduce overlap between codes. Throughout our analysis, we record thoughts and ideas about the codes using free-form notes. It was useful to group subthemes and identify higher-order themes. Moreover, as the participants were Spanish speakers, we used a backward translation strategy to ensure the accuracy of the translation. This involves translating the content back from the target language into the original language.

Initially, two authors began the analysis using Excel. However, one of them later transitioned to NVivo, a qualitative data analysis platform, to leverage its specialized features and acquire a deeper understanding of the data. These authors engaged in discussions until a consensus was reached on the creation of codes, subthemes, and themes. To facilitate conflict resolution, a third author was involved as needed. The emerging coding scheme includes five themes, twelve sub-themes and sixty-eight codes. This qualitative coding scheme is available online in the replication package (Sánchez-Gordón et al. 2024). As the data originally was in Spanish, the analysis we conducted in that language, and then, we translated the codes and quotes into English to enable reporting and wider dissemination.

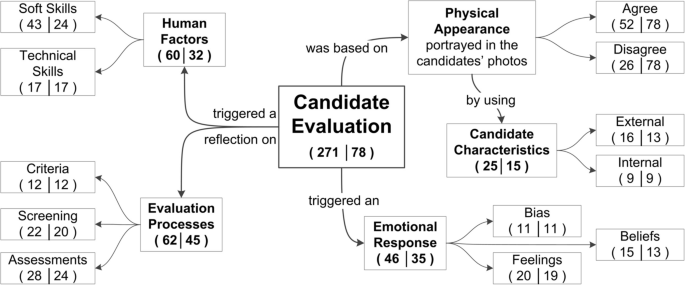

Table 7 illustrates an instance of the thematic analysis exemplified by a quote from the participant [EID 176949503] in the control group. Note that a single quote was categorized under multiple codes. Therefore, the frequencies are computed for related categories along with the unique identifier (EID), e.g., Codes (n = 3│1). As a result, we labelled 271 text segments from the 78 comments provided by the evaluators.

In what follows, we provide an overview of the demographic information of the participants and our findings grouped into the five themes that emerged from the data: Physical Appearance, Candidate Characteristics, Emotional Response, Evaluation Processes, and Human Factors as shown in Fig. 7.

Overview of the thematic analysis results. Frequencies are calculated based on the occurrence of each category and the number of participants supporting each one (#category│#unique EID)

Demographic Information Overview

This information is reported along with the relative difference (ratio) in the number of participants between the thematic analysis and the previous one. For instance, there is a 60.4% decrease in the number of participants calculated as the difference between 78 and 197, divided by 197.

In the thematic analysis, the majority of participants identified as men (n = 64, 59.7%), while 14 (63.1%) identified as women. Note that the gender distribution of this sub-sample is similar to the previous analysis, 21.8% and 23.8%, respectively. The age of most participants (59, 57.5%) ranged from 26 to 45 years. Moreover, most of the participants reported an engineering degree (45, 65.3%) followed by a master’s degree (28, 49%), and the remaining (5, 58.3%) reported having other degrees.

Most participants (68, 58.2%) reported having more than one year of experience (mean = 7.25, SD = 6.02, range, 1–26) with about a third (10, 70.5%) reporting less than a year. Looking into the different industrial sectors, about a third (23, 69.7%) of participants worked in ICT, followed by Education (15, 34.7%), Financial (14, 57.5%), Government (5 vs 50%), and other sectors (21, 61.8%) including Telecommunications. The most reported job role was software developer (31, 64.7%), although analyst (12, 67.5%), project manager (16, 44.8%), tester (3, 57.1%), and architect (1, 80%) were also represented. In the category of others (15, 51.6%), we identify middle managers (3, 62.5%), academics (2, 66.6%) and other ICT-related roles (10, 41.1%). Among the respondents who have hiring experience, 76.9% (60, 58.9%) reported occasional (53, 58.5%) and frequent (7, 61.1%) involvement, while 23% (18, 64.7%) stated they had never been involved. Finally, the majority of the respondents (42, 62.1%) reported that candidates did not seem familiar to them, while the remaining (36, 58.14%) reported they were somehow familiar with the candidates.

This overview suggests a representative sub-sample that can provide insights into the earlier results of our statistical analysis. Figure 7 shows themes and sub-themes, with frequencies calculated based on the occurrence of each category and the number of participants supporting each one (#category│#unique EID), for example, Human factors (60│32). Further details are available in the supplementary material.

Physical Appearance

We identified that nearly one-third of the 78 participants disagreed with using the physical appearance portrayed in the candidates’ photos to rate their competence. For instance, [EID 178964317] claimed “I don’t think it’s right to judge developers based on their appearance”. The remaining participants agreed to rate candidates’ perceived competence. Moreover, although candidate evaluations prompted most participants —regardless of their level of agreement— to reflect on evaluation processes and candidates’ human factors, this effect was most common among those who disagreed.

Candidate Characteristics

This category comprises 15 participants and includes both external and internal characteristics used as clues to rate the perceived competence. For instance, [EID 177898791] stated “Based on a photo, one cannot evaluate [if candidates are] skillful, competent, or talented without having a parameter such as [their professional] experience, but one can evaluate [if they are] ambitious since everyone applies for the position to either change their job or improve their economic situation”. As expected, all participants who disagreed with the evaluation did not specify the candidate characteristics they used as clues, except for two participants in the experimental group [EID 178232883, 177859633]. They also mentioned external characteristics such as facial expressions and gaze, as well as internal characteristics like personality and trustworthiness. For example, [EID 178232883] claimed “From the visual point of view, the only thing I can focus on is [their] serious [candidates’ facial] features, [I believe] their gaze can also denote personality traits” whereas [EID 177859633] stated, “I chose based on their facial expressions, I gave the faces that I was confident in or those were the most serious the highest score”.

Among the 52 participants who agreed, only 13 provided insights into the candidates’characteristics that they used. One participant admitted [EID 178616122] “I have relied somewhat on ages, and the personality portrayed in the photo” while another mentioned [EID 176953086] “The clothing and the gaze are factors that I paid more attention to”. Therefore, we categorized age and dress style as external characteristics. Additionally, other participants tried to find clues about internal characteristics like personality traits [EID 178232883], determination [EID 179011283], empathy [EID 178622065], and confidence [EID 177567155].

Emotional Response

Throughout our analysis, we noticed emerging evidence suggesting that some participants (35) felt emotionally affected. This category was less evident than the others and emerged last. Sometimes, participants expressed their feelings and biases, whereas others shared their beliefs. For example, [EID 176957155] expressed a moral judgment and belief in meritocracy by asserting “[Candidates] are not judged on their appearance, only on [their] talent”. We noticed that all moral judgement emerged in the experimental group, except for [EID 177624461] who humbly admitted “I believe that my evaluations are of little value since the photo is not enough to derive a correct or fair assessment”.

We also observed that only eight participants in the control group identified familiarity bias as a factor influencing the candidates’ perceived competence, even though 36 out of 197 participants reported some degree of familiarity with the candidates. For instance, [EID 177040294] stated “The evaluation is very subjective, and since I know many candidates, I already have preconceived criteria about them, and possibly my responses may be biased”. In the experimental group, only one participant [EID 177012250] acknowledged this bias but not as a factor impacting perceived competence. Instead, the code pretendian emerged, as she claimed, “I would like to know why they wore Indigenous clothing if the evaluated [candidate], at least the one I know, is not Indigenous”. This caught our attention, as another participant [EID 177510659] affirmed “The photos are repeated, and they are not of the ethnicities” while reporting that candidates did not seem familiar to him. Since the photos are not duplicated, this suggests a cross-race effect, which refers to the phenomenon by which own-race faces are better recognized than faces of another race (Wong et al. 2020). Additionally, one participant, [EID 177670817], raised concerns about racist hiring practices by stating, “I disagree with attaching photos to CVs, as it can lead to deselection due to racism”. He also expressed discomfort, adding, “I felt a little upset participating in this survey because people should not be judged”.

Finally, 19 participants reflected on their feelings about the score, expressing that they found it challenging, subjective , inaccurate, unfair, subjective, and superficial. Additionally, another participant, [EID 177841940], suggested variability in the provided competence scores by noting, “The evaluation can vary according to their [candidates] communication skills”.

Evaluation Processes

This category includes the largest number of participants (45). Twelve participants mentioned aspects related to the criteria used. For instance, [EID 176925951] signalled job characteristics by saying “One of today’s abilities being analyzed is the ability to work in a team and proficiency in the English language” whereas [EID 177022155] added,”[I would like to] know in what they perform better”. Furthermore, a need to include participants from all racial backgrounds was highlighted by [EID 177900856] in the experimental group, who stated, “I don’t think only indigenous people [should be] evaluated, everybody should be evaluated”. Supporting this, another participant, [EID 177042505] in the control group suggested, “Add African American, Indigenous, foreign (Latin American and European nationalities) participants”.

Participants also expressed an interest in conducting candidate screening based on additional information. Fourteen participants in the control group mentioned Job-relevant information, Professional experience, Professional growth, Professional profile and Resume. [EID 178255299] illustrated it by saying “I need more information about the candidates. For example, I’d like to know more about their professional profile, their future aspirations as developers or programmers, things like that”. In the experimental group, we also identified six participants who mentioned all the previous additional information except for the Professional profile. Finally, we noted that twenty-four participants suggested in-depth candidate assessments. While some participants explicitly mentioned well-known approaches, i.e., Interviews, Knowledge-based tests, and Psychometric tests, others were less specific and stated other methods. In addition, we identified a need for Live interactions, for example, [EID 178285906] argued “To be able to evaluate the characteristics, I need to have a conversation; it’s easier for me that way”.

Human Factors

This category emerged from the comments regardless of participants’agreement level with using candidates’ photos to rate the perceived competence. Although Human Factors is closely related to the Evaluation Processes theme, we created a separate theme due to its relevance. Thirty-two participants explicitly mentioned a set of technical skills and soft skills they would like to evaluate before rating the perceived competence of the candidates. While only 9 participants mentioned both soft skills and technical skills, 15 others focused only on soft skills and an additional 8 emphasized only technical skills. For instance, [EID 176976210] claimed “What is really fundamental is their [candidate] technical preparation, their experience in software development projects, and their way of interacting with people”. While [EID 178615166] highlighted “When evaluating candidates from my perspective, I pay more attention to how they respond when presented with a question and how they communicate”, and [EID 177859104] added, “The evaluation should be in-person, with rigorous examinations in multiple programming languages”. Although we observed that some participants referenced soft skills or professional capability in a general sense, we identified a specific set of 21 soft skills with communication, goal orientation, learning agility, and teamwork frequently mentioned. Finally, apart from technical knowledge, the significance of English proficiency and specialized Knowledge about a regulatory framework were specifically emphasized by [EID 176925951] and [EID 176945502], respectively.

4.3 Summary

{H}_{0}^{1}: Evaluators perceive no difference in competence between job candidates wearing traditional and non-traditional clothing in professional photos, {H}_{a}^{1}: Alternative. Our results show that there is no sufficient evidence in support of the alternative hypothesis, and therefore the {H}_{0}^{1} hypothesis cannot be rejected. Although we observed notable evaluator discrepancies, a positive trend in the competence scores was also observed for almost all professionals wearing traditional clothing. This is confirmed by a significant random slope within each evaluator (ANOVA in R; χ2(1) = 223.82, p < 0.0001). Our findings align with previous studies in psychology like (Hehman et al. 2017; Sutherland et al. 2020), which have highlighted that inferences of competence are driven by the characteristics of the perceiver.

{H}_{0}^{2}: The gender and race of candidates do not moderate the effect of evaluators’perceptions of the candidates’competence, {H}_{a}^{2}: Alternative. Our results show non-significant interactions/moderation of candidates’ gender and race, and therefore the {H}_{0}^{2} hypothesis cannot be rejected. However, the top five competence scores were given to one male and four female professionals wearing traditional clothing.

({H}_{0}^{3}): The gender and hiring experience of evaluators do not moderate the effect of evaluators’perceptions of the candidates’ competence, {H}_{a}^{3}: Alternative. Our results show non-significant interactions/moderation of evaluators’ gender and hiring experience, and therefore the {H}_{0}^{3} hypothesis cannot be rejected. However, we observed that male evaluators tended to assign higher competence scores when compared to their female counterparts. Additionally, female evaluators exhibited a trend of perceiving female candidates as more competent when they were dressed in traditional clothing compared to other clothing choices.

We also conducted a thematic analysis of the final open question in our survey, with responses from 78 out of 197 participants. Of these, 43.5% displayed signals of being emotionally affected and 66.7% agreed to rate candidates perceived competence. However, only some of them (13, 25%) reported using external and internal characteristics as clues to rate the perceived competence. During the candidate evaluation, participants reflected on the criteria used and expressed a need for further information about the candidate, particularly human factors like technical and soft skills. They also showed an interest in evaluation processes and expressed an interest in conducting candidate screening based on additional information and suggested in-depth candidate assessments. It suggests a tendency to fairness in impression formation related to competence which supports the statistical analysis results.

5 Discussion

To answer our research question, Does a choice of dress style in a photograph influence software professionals’ evaluations of an Ecuadorian software developer’s competence? We developed a theoretical model and analyzed collected data using mixed models. Our findings suggest that the choice of dress style in a photograph did not influence software professionals’ evaluation of the software developers’ competence. However, while the effects in our sample do not reach statistical significance, there are indications that stereotypes may play a role. Moreover, the observed patterns of means and emotional responses seem to be aligned with the expectancy violation theory.

Software developer is a male-dominated occupation and despite being culturally embedded, gender stereotypes are fairly universal across Western countries (Birkelund et al. 2022). However, previous studies have revealed that contextual factors play a role in understanding unfair hiring practices (Lippens et al. 2023). Therefore, looking at the Indigenous population in northern Ecuador, we observed that 7.7% of the Ecuadorian population self-identified as Indigenous according to the last census data (INEC 2023) whereas 13.33% of students in the Faculty of Engineering and Applied Sciences at the Universidad Técnica del Norte self-identified as indigenous during the last academic year (Universidad Técnica del Norte 2024). It indicates a healthy representation of the indigenous population within this regional software industry, thereby offering a novel context compared to previous research on this topic.

In our sample, 74,1% (146 out of 197) participants were involved in the evaluation process of job candidates with 34 reporting management positions, i.e., project manager (27) and middle manager (7). This indicates that 112 non-managers have had the opportunity to raise their voices, including 20 of the less experienced participants. Based on our sample, the evaluation of job candidates seems a fair enough democratic process in terms of participation at least in this context. Supporting this, Filkuková and Jørgensen (2020) found that 50% of the participants were previously involved in hiring employees. While their study focused on comparing different facial expressions across the same set of IT professionals from a Norwegian institution, female candidates were still perceived as less competent than males as our findings in the control group. However, that study did not examine the effect of evaluators’ gender and candidates’ race.

Although effects from the dress manipulation (treatment) were observed, they did not reach statistical significance, resulting in a hypothesis {H}_{0}^{1} that cannot be rejected. We expected that candidates in the control group could strongly benefit from the treatment, especially Indigenous women candidates. However, we found the opposite pattern. Almost all candidates received a more favorable competence score in the experimental group than in the control group regardless of evaluators’ gender and hiring experience. Against our expectation, however, there also was by trend, more positive competence scores for Indigenous except for the negative effects on the perceived competence of the oldest candidate (C9, 44 years). This candidate received one of the third-highest competence scores (M = 56.64, SD = 19.07) within the control group. However, when compared to the experimental group (M = 53.69, SD = 21.05), he had the most negative difference score. Further analyses also confirmed that there was no significant interaction between candidates’ gender and race, nor was there a significant interaction between evaluators’ gender and hiring experience. Therefore, the {H}_{0}^{2} and {H}_{0}^{3} hypotheses cannot be rejected.

The thematic analysis results suggest that 60 out of the 197 participants in both experimental conditions try to limit the use of social cognition. This observation could partially counter the potential presence of social desirability bias. Since participants were aware of being observed, two forms of bias may have occurred. First, a selection bias where participants with racist or intolerant views may have avoided the study. Second, a social desirability bias among those who felt comfortable participating led them to express opinions aligned with what could be perceived as the “desirable” stance, such as supporting inclusivity towards underrepresented groups like Indigenous professionals. However, if social desirability would lead to more favorable evaluations, competence scores should have been more favorable for females in the control group, and the opposite was observed since software developer is a male-dominated occupation. In the experimental group, social desirability would lead to more favorable evaluations, but female candidates receive more extremely positive evaluations compared to male candidates. This observation suggests that when candidates violate stereotypes linked to a salient group category, such as race (Indigenous), the perceiver’s experience of unexpectedness may lead to more extreme overall evaluations.

Revisiting our findings, we observe that evaluators in both control and experimental groups were explicitly informed that all the candidates they would see were equally qualified for a position as a software developer, but evaluators perceived candidates differently. In the control group, evaluators perceived gender-based stereotypes as category-based expectancies, leading to lower competence scores for female candidates compared to male candidates. However, male evaluators’ ratings were only slightly more negative. In the experimental group, candidates of all genders received more positive competence evaluations when they violated stereotyped expectations for their race group (Indigenous), resulting in higher competence scores. However, dress manipulation induced more extreme evaluations of the competence scores of the candidates who violated stereotyped expectations. As a result, female candidates received even more favorite competence scores compared to male candidates. Although same-race bias could exist, it is unlikely to explain our findings as cross-race (evaluator-candidate) pairs are more prevalent in our sample than same-race pairs. Indeed, these observations suggest that stereotype expectancy effects provide a more straightforward explanation for observed patterns than social desirability. Our findings are consistent with the predictions of expectancy-violation theory (Burgoon 2015) but further research is needed to examine the effect of expectancy violations and minimize the viability of social desirability. Expectancy-violation theory posits that cross-race candidates frequently face lower expectations, and when they surpass these expectations, they are evaluated more positively (Moore et al. 2016).

Based on the correlations, we also observe notable evaluator discrepancies indicating varying judgments and outcomes that suggest systematic idiosyncrasy in line with evidence of striking perceiver differences found for competence/dominance in (Sutherland et al. 2020). Indeed, according to Hehman et al. (2017), dimensions related to inferences of character, such as dominance/competence, are driven more by perceiver characteristics. These authors also concluded that these idiosyncrasies are not noise or error, instead, they represent an important phenomenon in their own right but the extent of which varies depending on the domain of judgment. In this sense, our findings provide empirical evidence of this phenomenon within the SE context. Cross-cultural agreement on competence also reflects this pattern (Zebrowitz et al. 2012; Sutherland et al. 2017). It suggests that perceived competence might be less visually obvious (Hehman et al. 2017). In support of that, our thematic analysis results indicate that participants may have different candidate characteristics in mind, or they may even be envisioning different latent constructs to which different candidate characteristics apply. To one software professional, clues of competence may be seen in facial expressions, grooming or dress style, to another, it may be perceived as displaying confidence, determination or empathy. It supports the significant role that variability in both the perceiver (evaluator) and the target (candidate) plays in this context. Therefore, competence seems variable because these judgements are highly contextual as suggested by Sutherland et al. (2020). Indeed, we observed not only varied emotional responses based on participants’ beliefs, biases, and feelings but also diverse perspectives on evaluation processes and human factors they consider important for job candidates. A closer examination of the pretendian category underscores the challenge of discerning between Mestizo and Indigenous candidates in our sample based solely on facial traits, as only one of the 93 participants in the experimental group raised this concern.

An alternative explanation for the positive trend observed in the experimental group may be attributed to physical attractiveness. Dress manipulation can vary physical attractiveness as suggested by Lennon (1990). This, in turn, might be used as a cue for competence, ultimately leading to higher perceptions of competence. In this situation, the magnitude of the attractiveness effect is lower in studies with high than low job-relevant information (Nault et al. 2020). Our study had a decontextualized design to ensure experimental control, with little job-relevant information provided. Moreover, after stimulus creation, the Ecuadorian authors noted an effect related to clothing attractiveness but our pilot study did not compare candidates’ photos based on physical attractiveness. Even though the most studied concepts in dress manipulations have been dress, status, and attractiveness, attractiveness is the main social signal associated with physical appearance (Johnson et al. 2008). The perception of target’s attractiveness can be influenced by the target’s dress, which is almost inevitably interpreted in conjunction with the target face (Hester and Hehman 2023). Moreover, research on attractiveness discrimination has demonstrated that attractiveness could lead to higher perceptions of competence (Nault et al. 2020). The effects of attractiveness are related to a variety of real-world outcomes that can favor the more attractive over the less attractive. However, attractiveness and age, unlike competence, are clearly visible on the face (Rhodes 2006). Therefore, we cannot dismiss that the positive trend observed in the experimental group could be due to physical attractiveness. Although most participants (138/197, 70%) evaluated the candidates’ perceived competence based on their photos without apparent disagreement, only 15 of them provided details about the candidates’ characteristics.

Findings in our study are inconclusive, taking into account the sample we used. In the context of Ecuador, a country in which mestizo is the majoritarian group, the identification of a software professional with a specific indigenous communities is not influencing the perceived competence of the individual. However, in a highly global world in which global software development can be considered a global practice, this perception may vary in cases in which the observer is not a citizen of the sourcing country. Although authors aim to expand their work to study this phenomenon, one of the practical takeaways for software professionals is the need to include nationals from the sourcing countries to alleviate possible biases in selection processes. A second takeaway is related to the selection of clothes for professional photos. According to our study, and at least in a setup similar to the one used in our study, we can think that professional photos showing pertinence to indigenous communities are not influencing in perceived competence of these professionals, so, potential candidates can choose identitarian photos as professional photos without the risk of being biased in their competence.

Field experiments using photos need to ensure they can accurately evaluate photos for the range of factors that have been shown to affect judgments. The impact of different design features entails experimental design (within- vs. between evaluators designs), gender of both evaluators and candidates, presentation order of stimulus, and stimulus quality. Beyond non-odd photos, stimulus quality entails contextual scenario of the stimulus (plain white background), targets’ angle of view (fixed), facial expression (neutral expression), body region (head-to-shoulder framing), stimulus context (portrait), pose on photos (posture is straight and upright, with target shoulders relaxed and down). Although our research design considered all these features, we did not control the duration of exposure to the static stimuli. Moreover, there is a possibility that the responses received were influenced by some characteristics unintended by the researchers but conveyed by the photo such as attractiveness, personality traits, warmth, and trustworthiness. A takeaway for researchers is that attaching photos to CVs in applications for jobs not only introduces unobservable characteristics but also there are characteristics of a candidate that could be signalled in a photo or personal profile and play a role in hiring decisions. Moreover, it is worth noting that perceived competence is relevant beyond the hiring context. For example, accordingly Wang and Zhang (2019), competence as a dimension in the SCM model has significant impacts on the initial trust and cooperative behaviors of global software engineering team members when interacting with unfamiliar foreign collaborators. Given the collaborative nature of software development, the perceived competence of newcomers as unfamiliar collaborators represents another scenario in which social cognition may come into play.

5.1 Future Work